Neural-Network-Introduction神经网络介绍大学毕业论文外文文献翻译及原文

神经网络(NeuralNetwork)

神经⽹络(NeuralNetwork)1 引⾔机器学习(Machine Learning)有很多经典的算法,其中基于深度神经⽹络的深度学习算法⽬前最受追捧,主要是因为其因为击败李世⽯的阿尔法狗所⽤到的算法实际上就是基于神经⽹络的深度学习算法。

本⽂先介绍基本的神经元,然后简单的感知机,扩展到多层神经⽹络,多层前馈神经⽹络,其他常见的神经⽹络,接着介绍基于深度神经⽹络的深度学习,纸上得来终觉浅,本⽂最后⽤python语⾔⾃⼰编写⼀个多层前馈神经⽹络。

2 神经元模型这是⽣物⼤脑中的基本单元——神经元。

在这个模型中,神经元接受到来⾃n个其他神经元传递过来的输⼊信号,在这些输⼊信号通过带权重的连接进⾏传递,神经元接受到的总输⼊值将与神经元的阈值进⾏⽐较,然后通过“激活函数”处理以产⽣神经元的输出。

3 感知机与多层⽹络 感知机由两层神经元组成,输⼊层接收外界输⼊信号后传递给输出层,输出层是M-P神经元。

要解决⾮线性可分问题,需考虑使⽤多层神经元功能。

每层神经元与下⼀层神经元全互连,神经元之间不存在同层连接,也不存在跨层连接。

这样的神经⽹络结构通常称为“多层前馈神经⽹络”。

神经⽹络的学习过程,就是根据训练数据来调整神经元之间的“连接权”以及每个功能神经元的阈值;换⾔之,神经⽹络“学”到的东西,蕴涵在连接权与阈值之中。

4 误差逆传播算法⽹络在上的均⽅误差为:正是由于其强⼤的表达能⼒,BP神经⽹络经常遭遇过拟合,其训练过程误差持续降低,但测试误差却可能上升。

有两种策略常⽤来缓解BP⽹络过拟合。

第⼀种策略是“早停”:将数据分成训练集和验证集,训练集⽤来计算梯度、更新连接权和阈值。

第⼆种策略是“正则化”,其基本思想是在误差⽬标函数中增加⼀个⽤于描述⽹络复杂度的部分。

5 全局最⼩与局部极⼩若⽤Ε表⽰神经⽹络在训练集上的误差,则它显然关于连接权ω和阈值θ的函数。

此时,神经⽹络的训练过程可看作⼀个参数寻优的过程,即在参数空间中,寻找最优参数使得E最⼩。

神经网络(neural network)

神经网络(neural network)Neural networks are a type of artificial intelligence system that is inspired by the way biological neural systems work. They use multiple layers of neurons to process complex data and make predictions or decisions. Neural networks can be used in tasks such as image and speech recognition, natural language processing, autonomous navigation, and more. Neural networks can learn from data and make decisions that would otherwise require a human to interpret, enabling AI to be used in a wider range of use cases. Additionally, neural networks are able to adjust and adapt based on different inputs, meaning that they can become more accurate and efficient over time.about how AI can help diagnose diseases. AI can be used to diagnose diseases in a variety of ways. AI-assisted diagnostic systems can be used to analyze patient data, such as symptoms and medical history, to identify illnesses. AI-driven image analysis tools can also be used to detect and diagnose abnormalities in medical imaging. Additionally, AI can be used to examine genetic data to identify potential genetic markers of certain diseases, and develop personalized treatment plans. AI can also be used to provide personalized health advice to patients, or to recognize subtle changes in vital signs or biometrics that may indicate the presence of an illness or condition.about how AI can be used in autonomous vehicles? AI can be used in autonomous vehicles to help them navigate the roads. AI-driven algorithms can analyze data from sensors, cameras, and lasers to detect nearby cars or obstacles, anticipate other drivers’ behavior, and effectively navigate traffic. AI can also be used to provide predictions on road conditions such as weather or traffic levels, allowing autonomous vehicles to adjust their route accordingly. Additionally, AI-based cybersecurity systems can be used to protect vehicles from hackers and malicious actors. Finally, AI-powered voice and visual recognition systems can allow autonomous vehicles to understand and respond to commands from drivers.about how AI can be used in healthcare? AI can be used in healthcare to help diagnose, treat and manage illnesses. AI-assisted systems can analyze patient data, such as symptoms and medical history, and identify diseases. AI-driven image analysis tools can also be used to detect and diagnose abnormalities in medical imaging. Additionally, AI can be used to examine genetic data to uncover potential genetic markers of diseases and develop personalized treatment plans. AI can also be used to provide personalized health advice to patients. Finally, AI-powered voice and visual recognition systems can be used to streamline administrative tasks and research processes within the healthcare industry.。

神经网络的综述

1.绪论 (3)1.1 神经网络的提出与发展 (3)1.2神经网络的定义 (3)1.3神经网络的发展历程 (4)1.4 神经网络研究的意义 (6)2.BP神经网络 (7)2.1 BP神经网络介绍 (7)2.2 BP算法的研究现状 (7)2.3 BP网络的应用 (8)2.4基本结构与学习算法 (8)2.5 动作过程 (11)2.6 主要特点及参数优选 (13)3.BP网络在复合材料研究中的应用 (15)3.1 材料设计 (15)3.2 性能预测 (16)2.4损伤检测和预测 (17)2.5 结论 (17)致谢: (18)BP神经网络综述摘要:本文阐述了人工神经网络和神经网络控制的基本概念特点以及两者之间的关系,讨论了人工神经网络的两个主要研究方向神经网络的VC 维计算和神经网络的数据挖掘,着重介绍了人工神经网络的工作原理和神经网络控制技术的应用首先介绍了神经网络的发展历程,随后对BP神经网络的学习方法分为了导师知识学习训练和模式识别决策,并重点分析了导师知识学习训练的网络结构和学习算法,最后介绍了BP神经网络在性能预测中的应用。

关键词:人工神经网络;神经网络控制;应用;维;数据挖掘Abstract:It expounds the basic concepts, characteristics of the artificial neural network and neural network control and the relationship between them.It discusses two aspects: the Vapnik-Chervonenkis dimension calculation and the data mining in neural nets.And the basic principle of artificial neural networks and applications of neural network control technology are emphatically introduced. Key words:Artificial Neural Networks; Neural Network Control;this paper introduces the developing process of neural networks, and then it divides the learning methods of BP neural network into a inst ructor knowledge learning training and pattern recognition decisions, and focus on analysis of the network structure and learning algorith m of knowledge and learning mentors training .And finally it introduc es the applications of BP neural network in performance prediction.Application;Vapnik-Chervonenkis Mimension;Data Mining1.绪1.1 神经网络的提出与发展系统的复杂性与所要求的精确性之间存在尖锐的矛盾。

神经网络(NeuralNetwork)

神经⽹络(NeuralNetwork)⼀、激活函数激活函数也称为响应函数,⽤于处理神经元的输出,理想的激活函数如阶跃函数,Sigmoid函数也常常作为激活函数使⽤。

在阶跃函数中,1表⽰神经元处于兴奋状态,0表⽰神经元处于抑制状态。

⼆、感知机感知机是两层神经元组成的神经⽹络,感知机的权重调整⽅式如下所⽰:按照正常思路w i+△w i是正常y的取值,w i是y'的取值,所以两者做差,增减性应当同(y-y')x i⼀致。

参数η是⼀个取值区间在(0,1)的任意数,称为学习率。

如果预测正确,感知机不发⽣变化,否则会根据错误的程度进⾏调整。

不妨这样假设⼀下,预测值不准确,说明Δw有偏差,⽆理x正负与否,w的变化应当和(y-y')x i⼀致,分情况讨论⼀下即可,x为负数,当预测值增加的时候,权值应当也增加,⽤来降低预测值,当预测值减少的时候,权值应当也减少,⽤来提⾼预测值;x为正数,当预测值增加的时候,权值应当减少,⽤来降低预测值,反之亦然。

(y-y')是出现的误差,负数对应下调,正数对应上调,乘上基数就是调整情况,因为基数的正负不影响调整情况,毕竟负数上调需要减少w的值。

感知机只有输出层神经元进⾏激活函数处理,即只拥有⼀层功能的神经元,其学习能⼒可以说是⾮常有限了。

如果对于两参数据,他们是线性可分的,那么感知机的学习过程会逐步收敛,但是对于线性不可分的问题,学习过程将会产⽣震荡,不断地左右进⾏摇摆,⽽⽆法恒定在⼀个可靠地线性准则中。

三、多层⽹络使⽤多层感知机就能够解决线性不可分的问题,输出层和输⼊层之间的成为隐层/隐含层,它和输出层⼀样都是拥有激活函数的功能神经元。

神经元之间不存在同层连接,也不存在跨层连接,这种神经⽹络结构称为多层前馈神经⽹络。

换⾔之,神经⽹络的训练重点就是链接权值和阈值当中。

四、误差逆传播算法误差逆传播算法换⾔之BP(BackPropagation)算法,BP算法不仅可以⽤于多层前馈神经⽹络,还可以⽤于其他⽅⾯,但是单单提起BP算法,训练的⾃然是多层前馈神经⽹络。

神经网络

RBF神经网络学习算法需要求解的参数有3个:

基函数的中心、隐含层到输出层权值以及节点基 宽参数。根据径向基函数中心选取方法不同, RBF网络有多种学习方法,如梯度下降法、随机 选取中心法、自组织选区中心法、有监督选区中

心法和正交最小二乘法等。下面根据梯度下降法

,输出权、节点中心及节点基宽参数的迭代算法 如下。

讲 课 内 容

神经网络的概述与发展历史 什么是神经网络 BP神经网络 RBF神经网络 Hopfield神经网络

1.神经网络概述与发展历史

神经网络(Neural Networks,NN)是由大量的、 简单的处理单元(称为神经元)广泛地互相连接 而形成的复杂网络系统,它反映了人脑功能的许 多基本特征,是一个高度复杂的非线性动力学习 系统。 神经网络具有大规模并行、分布式存储和处理、 自组织、自适应和自学能力,特别适合处理需要 同时考虑许多因素和条件的、不精确和模糊的信 息处理问题。

4.RBF神经网络

使用RBF网络逼近下列对象:

2 F 20 x1 10cos2x1 x2 10cos2x2 2

4.RBF神经网络

RBF网络的优点:

神经网络有很强的非线性拟合能力,可映射任意复杂的非线 性关系,而且学习规则简单,便于计算机实现。具有很强 的鲁棒性、记忆能力、非线性映射能力以及强大的自学习 能力,因此有很大的应用市场。 ① 它具有唯一最佳逼近的特性,且无局部极小问题存在。

1.自学习和自适应性。

由于神经元之间的相对 决定的。每个神经元都可以根据接受 5.分布式存储。 独立性,神经网络学习的 到的信息进行独立运算和处理,并输 “知识”不是集中存储在网 出结构。同一层的不同神经元可以同 络的某一处,而是分布在网 时进行运算,然后传输到下一层进行 络的所有连接权值中。 处理。因此,神经网络往往能发挥并 行计算的优势,大大提升运算速度。

神经网络理论及应用

神经网络理论及应用神经网络(neural network)是一种模仿人类神经系统工作方式而建立的数学模型,用于刻画输入、处理与输出间的复杂映射关系。

神经网络被广泛应用于机器学习、人工智能、数据挖掘、图像处理等领域,是目前深度学习技术的核心之一。

神经网络的基本原理是模仿人脑神经细胞——神经元。

神经元接收来自其他神经元的输入信号,并通过一个激活函数对所有输入信号进行加权求和,再传递到下一个神经元。

神经元之间的连接权重是神经网络的关键参数,决定了不同输入组合对输出结果的影响。

神经网络的分类可分为多层感知机、卷积神经网络、循环神经网络等等。

其中多层感知机(Multi-Layer Perceptron,MLP)是最基本的神经网络结构。

MLP由输入层、若干个隐藏层和输出层组成,每层包括多个神经元,各层之间被完全连接,每个神经元都接收来自上一层的输入信号并输出给下一层。

通过不断地训练神经网络,即对连接权重进行优化,神经网络能够准确地对所学习的模式进行分类、回归、识别、聚类等任务。

神经网络的应用非常广泛,涉及到各个领域。

在图像处理领域中,卷积神经网络(Convolutional Neural Network,CNN)是一种特殊的神经网络,主要应用于图像识别、图像分割等任务。

比如,在医疗领域中,CNN被用于对医学影像进行诊断,对疾病进行分类、定位和治疗建议的制定。

在语音处理领域中,循环神经网络(Recurrent Neural Network,RNN)因其能够处理序列数据而备受推崇,常用于文本生成、机器翻译等任务。

在自然语言处理领域中,基于预训练的语言模型(Pre-trained Language Models,PLM)在语言模型微调、文本分类和情感分析等方面表现出色。

尽管神经网络有诸多优点,但它也存在一些缺点。

其中最为突出的是过度拟合(overfitting),即模型过于复杂,为了适应训练集数据而使得泛化能力下降,遇到未知数据时准确率不高。

神经网络论文

人工智能专题报告题目模式识别及人工神经网络概述姓名专业学号学院电脑科学与技术学院内容摘要:模式识别是一项极具研究价值的课题,随着神经网络和模糊逻辑技术的发展,人们对这一问题的研究又采用了许多新的方法和手段,也使得这一古老的课题焕发出新的生命力.目前国际上有相当多的学者在研究这一课题,它包括了模式识别领域中所有典型的问题:数据的采集、处理及选择、输入样本表达的选择、模式识别分类器的选择以及用样本集对识别器的有指导的训练。

人工神经网络为数字识别提供了新的手段。

正是神经网络所具有的这种自组织自学习能力、推广能力、非线性和运算高度并行的能力使得模式识别成为目前神经网络最为成功的应用领域。

关键词:模式识别,神经网络,人工智能,原理,应用Abstract:Pattern recognition is an extremely valuable project research, with neural network and fuzzy logic technology development, people on this subject, and adopted many new methods and means, also make the ancient subject coruscate gives new vitality. Current international has quite a number of scholars in the study of this topic, and it includes pattern recognition field of typical problems: the data acquisition, processing and selection, input data express choice, the choice of mode identification classifier and using samples of the reader has guidance training. Artificial neural network for digital recognition to provide a new way. It is neural network which has this kind of self-organization self-learning capability, generalization, nonlinear and computing highly parallel ability makes the pattern recognition become the neural network was the most successful application fields.引言具体的模式识别是多种多样的,如果从识别的基本方法上划分,传统的模式识别大体分为统计模式识别和句法模式识别,在识别系统中引入神经网络是一种近年来发展起来的新的模式识别方法。

什么是神经网络?

什么是神经网络?神经网络是一种模仿人脑神经系统构建的计算模型。

它由一组互相连接的神经元单元组成,这些神经元单元可以传输和处理信息。

神经网络可以通过研究和训练来理解和解决问题。

结构神经网络由多个层级组成,包括输入层、隐藏层和输出层。

每个层级都由多个神经元单元组成。

输入层接收外部的数据输入,隐藏层和输出层通过连接的权重来处理和传递这些输入信息。

工作原理神经网络的工作原理主要包括两个阶段:前向传播和反向传播。

- 前向传播:输入数据通过输入层传递给隐藏层,然后进一步传递到输出层。

在传递的过程中,神经网络根据权重和激活函数计算每个神经元的输出值。

- 反向传播:通过比较神经网络的输出和期望的输出,计算误差,并根据误差调整权重和偏差。

这个过程不断重复,直到神经网络的输出接近期望结果。

应用领域神经网络在许多领域有广泛的应用,包括:- 机器研究:神经网络可以用于图像识别、语音识别、自然语言处理等任务。

- 金融领域:用于预测股票价格、风险评估等。

- 医疗领域:用于疾病诊断、药物发现等。

- 自动驾驶:神经网络在自动驾驶汽车中的感知和决策中有重要作用。

优势和局限性神经网络的优势包括:- 可以研究和适应不同的数据模式和问题。

- 能够处理大量的数据和复杂的非线性关系。

- 具有并行计算的能力,可以高效处理大规模数据。

神经网络的局限性包括:- 需要调整许多参数,并且结果可能不稳定。

- 解释性较差,很难理解模型的内部工作原理。

总结神经网络是一种模仿人脑神经系统构建的计算模型,具有广泛的应用领域和一定的优势和局限性。

随着技术的不断发展,神经网络在各个领域的应用将会越来越广泛。

神经网络

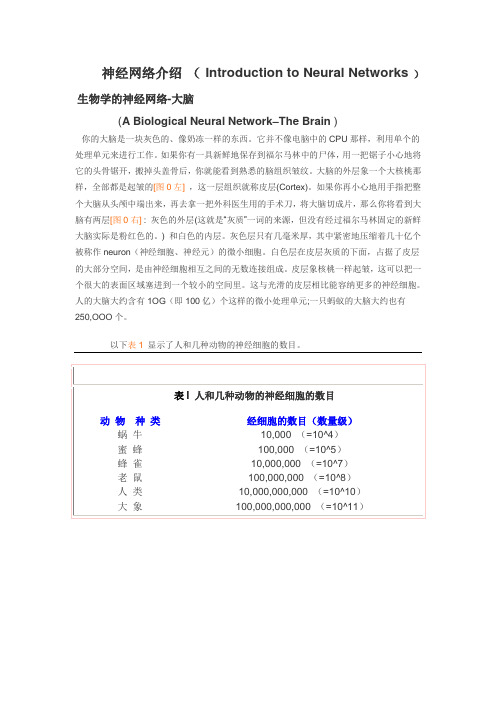

神经网络介绍(Introduction to Neural Networks)生物学的神经网络-大脑(A Biological Neural Network–The Brain )你的大脑是一块灰色的、像奶冻一样的东西。

它并不像电脑中的CPU那样,利用单个的处理单元来进行工作。

如果你有一具新鲜地保存到福尔马林中的尸体,用一把锯子小心地将它的头骨锯开,搬掉头盖骨后,你就能看到熟悉的脑组织皱纹。

大脑的外层象一个大核桃那样,全部都是起皱的[图0左],这一层组织就称皮层(Cortex)。

如果你再小心地用手指把整个大脑从头颅中端出来,再去拿一把外科医生用的手术刀,将大脑切成片,那么你将看到大脑有两层[图0右] : 灰色的外层(这就是“灰质”一词的来源,但没有经过福尔马林固定的新鲜大脑实际是粉红色的。

) 和白色的内层。

灰色层只有几毫米厚,其中紧密地压缩着几十亿个被称作neuron(神经细胞、神经元)的微小细胞。

白色层在皮层灰质的下面,占据了皮层的大部分空间,是由神经细胞相互之间的无数连接组成。

皮层象核桃一样起皱,这可以把一个很大的表面区域塞进到一个较小的空间里。

这与光滑的皮层相比能容纳更多的神经细胞。

人的大脑大约含有1OG(即100亿)个这样的微小处理单元;一只蚂蚁的大脑大约也有250,OOO个。

图1 神经细胞的结构在人的生命的最初9个月内,这些细胞以每分钟25,000个的惊人速度被创建出来。

神经细胞和人身上任何其他类型细胞十分不同,每个神经细胞都长着一根像电线一样的称为轴突(axon)的东西,它的长度有时伸展到几厘米[译注],用来将信号传递给其他的神经细胞。

神经细胞的结构如图1所示。

它由一个细胞体(soma)、一些树突(dendrite) 、和一根可以很长的轴突组成。

神经细胞体是一颗星状球形物,里面有一个核(nucleus)。

树突由细胞体向各个方向长出,本身可有分支,是用来接收信号的。

轴突也有许多的分支。

神经网络的介绍范文

神经网络的介绍范文

神经网络(Neural Networks)是一种利用统计学习的方法,来解决

计算机视觉,自然语言处理,以及其他各种智能问题。

基本的神经网络架

构是由多个由具有可学习的权重的神经元构成的多层网络,每个神经元都

有一个可以被其他神经元影响的活动函数(例如逻辑函数或非线性函数)。

在简单的神经网络中,神经元可以接收输入信号并输出一个基于输入

信号。

输入信号可以是一维数组或多维数组,输出值可以是一维数组,也

可以是多维数组。

神经元可以有不同的连接强度,一些强连接的神经元可

以更大程度的影响输出,而连接弱的神经元则起到一个较小的作用。

神经

元之间的权重(weights)可以用梯度下降算法调整,以更精确的拟合数据。

分类器神经网络(Classifier Neural Networks)是使用神经网络来

实现分类任务的一种技术,类似于支持向量机(SVM)和朴素贝叶斯分类

器(Naive Bayes Classifier)。

该网络包含多个输入层,隐藏层和输出层。

输入层接收原始信号,隐藏层处理特征和聚类,然后输出层将结果转

换为有意义的分类结果。

神经网络ppt课件

通常,人们较多地考虑神经网络的互连结构。本 节将按照神经网络连接模式,对神经网络的几种 典型结构分别进行介绍

12

2.2.1 单层感知器网络

单层感知器是最早使用的,也是最简单的神经 网络结构,由一个或多个线性阈值单元组成

这种神经网络的输入层不仅 接受外界的输入信号,同时 接受网络自身的输出信号。 输出反馈信号可以是原始输 出信号,也可以是经过转化 的输出信号;可以是本时刻 的输出信号,也可以是经过 一定延迟的输出信号

此种网络经常用于系统控制、 实时信号处理等需要根据系 统当前状态进行调节的场合

x1

…… …… ……

…… yi …… …… …… …… xi

再励学习

再励学习是介于上述两者之间的一种学习方法

19

2.3.2 学习规则

Hebb学习规则

这个规则是由Donald Hebb在1949年提出的 他的基本规则可以简单归纳为:如果处理单元从另一个处

理单元接受到一个输入,并且如果两个单元都处于高度活 动状态,这时两单元间的连接权重就要被加强 Hebb学习规则是一种没有指导的学习方法,它只根据神经 元连接间的激活水平改变权重,因此这种方法又称为相关 学习或并联学习

9

2.1.2 研究进展

重要学术会议

International Joint Conference on Neural Networks

IEEE International Conference on Systems, Man, and Cybernetics

World Congress on Computational Intelligence

复兴发展时期 1980s至1990s

神经网络简介

神经网络简介神经网络(Neural Network),又被称为人工神经网络(Artificial Neural Network),是一种模仿人类智能神经系统结构与功能的计算模型。

它由大量的人工神经元组成,通过建立神经元之间的连接关系,实现信息处理与模式识别的任务。

一、神经网络的基本结构与原理神经网络的基本结构包括输入层、隐藏层和输出层。

其中,输入层用于接收外部信息的输入,隐藏层用于对输入信息进行处理和加工,输出层负责输出最终的结果。

神经网络的工作原理主要分为前向传播和反向传播两个过程。

在前向传播过程中,输入信号通过输入层进入神经网络,并经过一系列的加权和激活函数处理传递到输出层。

反向传播过程则是根据输出结果与实际值之间的误差,通过调整神经元之间的连接权重,不断优化网络的性能。

二、神经网络的应用领域由于神经网络在模式识别和信息处理方面具有出色的性能,它已经广泛应用于各个领域。

1. 图像识别神经网络在图像识别领域有着非常广泛的应用。

通过对图像进行训练,神经网络可以学习到图像中的特征,并能够准确地判断图像中的物体种类或者进行人脸识别等任务。

2. 自然语言处理在自然语言处理领域,神经网络可以用于文本分类、情感分析、机器翻译等任务。

通过对大量语料的学习,神经网络可以识别文本中的语义和情感信息。

3. 金融预测与风险评估神经网络在金融领域有着广泛的应用。

它可以通过对历史数据的学习和分析,预测股票价格走势、评估风险等,并帮助投资者做出更科学的决策。

4. 医学诊断神经网络在医学领域的应用主要体现在医学图像分析和诊断方面。

通过对医学影像进行处理和分析,神经网络可以辅助医生进行疾病的诊断和治疗。

5. 机器人控制在机器人领域,神经网络可以用于机器人的感知与控制。

通过将传感器数据输入到神经网络中,机器人可以通过学习和训练来感知环境并做出相应的反应和决策。

三、神经网络的优缺点虽然神经网络在多个领域中都有着广泛的应用,但它也存在一些优缺点。

神经网络(论文资料)

过渡期(1970—1986) 1980年,Kohonen提出自组织映射理论 1982年,Hopfield提出离散的Hopfield网络模型 1984年,又提出连续的Hopfield网络模型,并用电 子线路实现了该网路的仿真。 1986年,Rumelhart等提出BP算法

发展期(1987--) 1993年创刊的“IEEE Transaction on Neural Network”

特性,不考虑时间特性。

人工神经元

M-P模型

最早由心理学家麦克罗奇(W.McLolloch)和 数理逻辑学家皮兹(W.Pitts)于1943年提出。

e 兴奋型输入

e

i 抑制型输入 i

阈值

θ

Y 输出

M-P模型输入输出关系表

输入条件

e, i 0 e, i 0 e, i 0

输出

y 1 y0 y0

p

( yˆ pk y pk )2

k

其中P为训练样本的个数

此时,按反向传播计算样本p在各层的连接权 值变化量∆pwjk,但并不对各层神经元的连接权值

进行修改,而是不断重复这一过程,直至完成对 训练样本集中所有样本的计算,并产生这一轮训

练的各层连接权值的改变量∆wjk

此时才对网络中各神经元的连接权值进行调整, 若正向传播后重新计算的E仍不满足要求,则开始 下一轮权值修正。

在传递过程中会被滤除,而对于大于阈值的则有整形 作用。即无论输入脉冲的幅度与宽度,输出波形恒定。 信息传递的时延性和不应期性

在两次输入之间要有一定的时间间隔,即时延; 而在此期间内不响应激励,不传递信息,称为不应 期。

人工神经元及其互连结构

人工神经网络的基本处理单元-人工神经元 模拟主要考虑两个特性:时空加权,阈值作用。 其中对于时空加权,模型分为两类,即考虑时间

完整的神经网络讲解资料

一、感知器的学习结构感知器的学习是神经网络最典型的学习。

目前,在控制上应用的是多层前馈网络,这是一种感知器模型,学习算法是BP法,故是有教师学习算法。

一个有教师的学习系统可以用图1—7表示。

这种学习系统分成三个部分:输入部,训练部和输出部。

神经网络学习系统框图1-7 图神经网络的学习一般需要多次重复训练,使误差值逐渐向零趋近,最后到达零。

则这时才会使输出与期望一致。

故而神经网络的学习是消耗一定时期的,有的学习过程要重复很多次,甚至达万次级。

原因在于神经网络的权系数W有很多分量W ,W ,----W ;也即是一n12个多参数修改系统。

系统的参数的调整就必定耗时耗量。

目前,提高神经网络的学习速度,减少学习重复次数是十分重要的研究课题,也是实时控制中的关键问题。

二、感知器的学习算法.感知器是有单层计算单元的神经网络,由线性元件及阀值元件组成。

感知器如图1-9所示。

图1-9 感知器结构感知器的数学模型:(1-12)其中:f[.]是阶跃函数,并且有(1-13)θ是阀值。

感知器的最大作用就是可以对输入的样本分类,故它可作分类器,感知器对输入信号的分类如下:即是,当感知器的输出为1时,输入样本称为A类;输出为-1时,输入样本称为B类。

从上可知感知器的分类边界是:(1-15)在输入样本只有两个分量X1,X2时,则有分类边界条件:(1-16)即W X +W X -θ=0 (1-17) 2121也可写成(1-18)这时的分类情况如固1—10所示。

感知器的学习算法目的在于找寻恰当的权系数w=(w1.w2,…,Wn),。

当d熊产生期望值xn),…,x2,(xt=x定的样本使系统对一个特.x分类为A类时,期望值d=1;X为B类时,d=-1。

为了方便说明感知器学习算法,把阀值θ并人权系数w中,同时,样本x也相应增加一个分量x 。

故令:n+1W =-θ,X =1 (1-19) n+1n+1则感知器的输出可表示为:(1-20)感知器学习算法步骤如下:1.对权系数w置初值对权系数w=(W.W ,…,W ,W )的n+11n2各个分量置一个较小的零随机值,但W =—g。

什么是神经网络

什么是神经网络神经网络是当今人工智能技术中最常见的模式,它引发了各种科学革命,无论是工程学还是商业,它在不同行业和应用中发挥着越来越大的作用。

本文将介绍神经网络在解决各种问题方面的神奇力量。

1. 什么是神经网络神经网络是一种仿照人脑的“机器学习”算法。

它是一种可以从大量示例分析和学习的计算机算法,具有自适应性,可大规模搜索。

神经网络的算法就像人类的记忆技能,可以自行学习数据并扩展知识,从而解决一些非常困难的问题,因此也被称为“深度学习”算法。

2. 神经网络如何工作神经网络通过网络层积的多层神经元结构,可以从大量输入数据中特征提取、预测和学习,这些神经元结构在建立连接的基础上,可以识别复杂的模式,从而整合起输入到输出之间的映射。

在学习过程中,神经网络根据示例数据调整其参数,在训练完毕后输入到测试集中,根据其表现度量精度,从而让人工智能系统能够有效地满足需求。

3. 神经网络的应用(1)计算机视觉:神经网络在人工智能方面应用最为广泛的是计算机视觉,它可以被用于图像识别、物体检测、图像检索等。

(2)自然语言处理:神经网络还可以用于自然语言处理,用于文本分类、问答机器人、聊天机器人等。

(3)机器学习:神经网络也是机器学习的最常见方法,可以用于大规模优化、行为预测和分类。

(4)语音识别:神经网络可以用于语音识别,可以对输入的音频信号进行分析,从而实现自动语音识别。

(5)机器人学:神经网络技术也被应用于机器人学,以控制机器人的动作和行为,可以实现在环境中自主行走。

4.结论通过以上介绍可以看出,神经网络具有极大的潜力,能够自动学习和发现规律,并能应用到各种不同的领域,迅速应对瞬息万变的人工智能环境。

什么是神经网络?

什么是神经网络?

神经网络是最近几年引起重视的计算机技术,也是未来发展的重要方向之一。

它以自己的独特优势赢得众多受众,从早期的生物神经科学家到IT从业者都在关注它。

今天,让我们一起来解读神经网络:

神经网络(Neural Network)对应于生物学中的神经元网络,是一种人工智能的学习模型,旨在模拟生物的神经元网络,利用大量的计算节点来处理复杂的任务,也就是运用大量数据以及人工智能算法,使机器可以自动学习,进而实现自动决策。

在神经网络中,有多个计算节点,这些节点组成一个网络,每一节点都有不同的权重和偏差,这些计算节点可以传递数据并进行处理。

神经网络的结构可以分为输入层、隐藏层和输出层。

输入层的节点用来接收外部输入的数据,隐藏层的节点会通过不同形式的处理把输入变成有效的数据,最后输出层就会输出处理结果。

随着计算机技术的发展,神经网络的应用越来越广泛,已经被广泛应用于很多方面:

- 计算机视觉:神经网络可以分析视频流中的图像,从而可以实现自动

识别,有助于更好地理解图像内容;

- 自动驾驶:神经网络可以帮助自动车辆在复杂的环境中顺利行驶;

- 机器学习:神经网络可以构建从历史数据中学习出来的模型,帮助企

业做出准确的决策;

此外,神经网络还有许多其他的应用,比如自然语言处理、机器翻译、文本挖掘等,都是极受欢迎的计算机技术。

神经网络近些年来发展得很快,从早期的深度学习以及模式识别一步

步发展到现在的再生成模型、跨模态深度学习,也成为当下热门话题。

未来,人工智能的发展将变得更加普及,其核心技术之一是神经网络,因此神经网络也将更加重要,它可以实现更智能、更可靠的机器学习

任务,这也是未来的趋势。

神经网络(neuralnetwork(NN))百科物理大全

神经网络(neuralnetwork(NN))百科物理大全

神经网络(neuralnetwork(NN))百科物理大全广泛的阅读有助于学生形成良好的道德品质和健全的人格,向往真、善、美,摈弃假、恶、丑;有助于沟通个人与外部世界的联系,使学生认识丰富多彩的世界,获取信息和知识,拓展视野。

快一起来阅读神经网络(neuralnetwork(NN))百科物理大全吧~

神经网络(neuralnetwork(NN))

神经网络(neuralnetwork(NN))

是由巨量神经元相互连接成的复杂非线性系统。

它具有并行处理和分布式存储信息及其整体特性不依赖于其中个别神经元的性质,很难用单个神经元的特性去说明整个神经网络的特性。

脑是自然界中最巧妙的控制器和信息处理系统。

自1943年McCulloch和Pitts最早尝试建立神经网络模型,至今已有许多模拟脑功能的模型,但距理论模型还很远。

大致分两类:①人工神经网络,模拟生物神经系统功能,由简单神经元模型构成,以图解决工程技术问题。

如模拟脑细胞的感知功能的BP(Back-Propagation)神经网络;基于自适应共振理论的模拟自动分类识别的

ART(AdaptiveResonanceTheory)神经网络等;②现实性模型,与神经细胞尽可能一致的神经元模型构成的网络,以图阐明神经网络结构与功能的机理,从而建立脑模型和脑理论。

如基于突触和细胞膜电特性的霍泊费尔特(Hopfield)模。

神经网络基本介绍ppt课件.ppt

从使用情况来看,闭胸式的使用比较 广泛。 敞开式 盾构之 中有挤 压式盾 构、全 部敞开 式盾构 ,但在 近些年 的城市 地下工 程施工 中已很 少使用 ,在此 不再说 明。

神经网络控制的研究领域

(1)基于神经网络的系统辨识 ① 将神经网络作为被辨识系统的模型,可在已知

从使用情况来看,闭胸式的使用比较 广泛。 敞开式 盾构之 中有挤 压式盾 构、全 部敞开 式盾构 ,但在 近些年 的城市 地下工 程施工 中已很 少使用 ,在此 不再说 明。

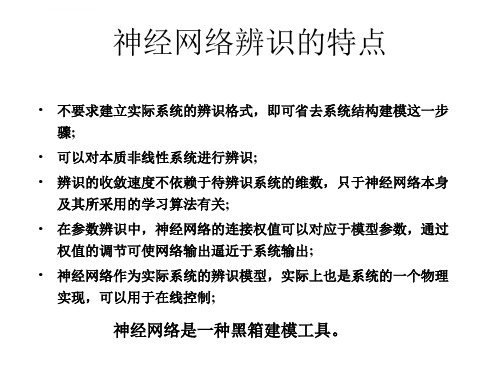

神经网络辨识的特点

• 不要求建立实际系统的辨识格式,即可省去系统结构建模这一步 骤;

• 可以对本质非线性系统进行辨识; • 辨识的收敛速度不依赖于待辨识系统的维数,只于神经网络本身

从使用情况来看,闭胸式的使用比较 广泛。 敞开式 盾构之 中有挤 压式盾 构、全 部敞开 式盾构 ,但在 近些年 的城市 地下工 程施工 中已很 少使用 ,在此 不再说 明。

从使用情况来看,闭胸式的使用比较 广泛。 敞开式 盾构之 中有挤 压式盾 构、全 部敞开 式盾构 ,但在 近些年 的城市 地下工 程施工 中已很 少使用 ,在此 不再说 明。

从使用情况来看,闭胸式的使用比较 广泛。 敞开式 盾构之 中有挤 压式盾 构、全 部敞开 式盾构 ,但在 近些年 的城市 地下工 程施工 中已很 少使用 ,在此 不再说 明。

图 单个神经元的解剖图

从使用情况来看,闭胸式的使用比较 广泛。 敞开式 盾构之 中有挤 压式盾 构、全 部敞开 式盾构 ,但在 近些年 的城市 地下工 程施工 中已很 少使用 ,在此 不再说 明。

神经元由三部分构成: (1)细胞体(主体部分):包括细胞质、 细胞膜和细胞核; (2)树突:用于为细胞体传入信息; (3)轴突:为细胞体传出信息,其末端是 轴突末梢,含传递信息的化学物质; (4)突触:是神经元之间的接口( 104~105个/每个神经元)。

计算机视觉(一):神经网络简介

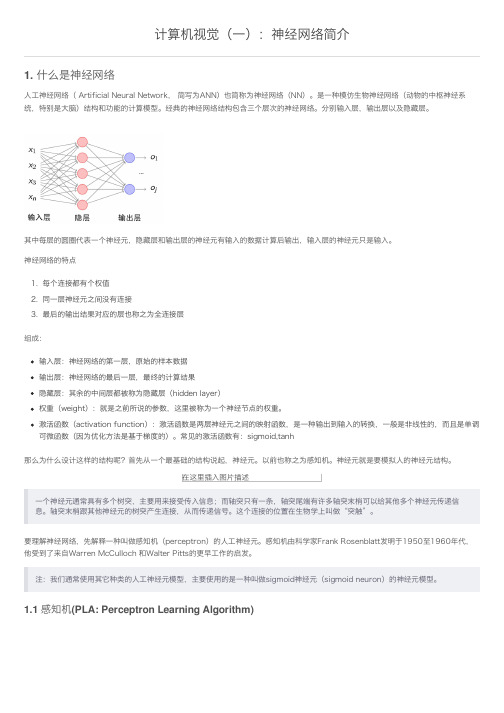

计算机视觉(⼀):神经⽹络简介1. 什么是神经⽹络⼈⼯神经⽹络( Artificial Neural Network, 简写为ANN)也简称为神经⽹络(NN)。

是⼀种模仿⽣物神经⽹络(动物的中枢神经系统,特别是⼤脑)结构和功能的计算模型。

经典的神经⽹络结构包含三个层次的神经⽹络。

分别输⼊层,输出层以及隐藏层。

其中每层的圆圈代表⼀个神经元,隐藏层和输出层的神经元有输⼊的数据计算后输出,输⼊层的神经元只是输⼊。

神经⽹络的特点1. 每个连接都有个权值2. 同⼀层神经元之间没有连接3. 最后的输出结果对应的层也称之为全连接层组成:输⼊层:神经⽹络的第⼀层,原始的样本数据输出层:神经⽹络的最后⼀层,最终的计算结果隐藏层:其余的中间层都被称为隐藏层(hidden layer)权重(weight):就是之前所说的参数,这⾥被称为⼀个神经节点的权重。

激活函数(activation function):激活函数是两层神经元之间的映射函数,是⼀种输出到输⼊的转换,⼀般是⾮线性的,⽽且是单调可微函数(因为优化⽅法是基于梯度的)。

常见的激活函数有:sigmoid,tanh那么为什么设计这样的结构呢?⾸先从⼀个最基础的结构说起,神经元。

以前也称之为感知机。

神经元就是要模拟⼈的神经元结构。

在这⾥插⼊图⽚描述⼀个神经元通常具有多个树突,主要⽤来接受传⼊信息;⽽轴突只有⼀条,轴突尾端有许多轴突末梢可以给其他多个神经元传递信息。

轴突末梢跟其他神经元的树突产⽣连接,从⽽传递信号。

这个连接的位置在⽣物学上叫做“突触”。

要理解神经⽹络,先解释⼀种叫做感知机(perceptron)的⼈⼯神经元。

感知机由科学家Frank Rosenblatt发明于1950⾄1960年代,他受到了来⾃Warren McCulloch 和Walter Pitts的更早⼯作的启发。

注:我们通常使⽤其它种类的⼈⼯神经元模型,主要使⽤的是⼀种叫做sigmoid神经元(sigmoid neuron)的神经元模型。

- 1、下载文档前请自行甄别文档内容的完整性,平台不提供额外的编辑、内容补充、找答案等附加服务。

- 2、"仅部分预览"的文档,不可在线预览部分如存在完整性等问题,可反馈申请退款(可完整预览的文档不适用该条件!)。

- 3、如文档侵犯您的权益,请联系客服反馈,我们会尽快为您处理(人工客服工作时间:9:00-18:30)。

毕业设计(论文)外文文献翻译文献、资料中文题目:神经网络介绍文献、资料英文题目:Neural Network Introduction文献、资料来源:文献、资料发表(出版)日期:院(部):专业:班级:姓名:学号:指导教师:翻译日期:2017.02.14外文文献翻译注:节选自Neural Network Introduction神经网络介绍,绪论。

HistoryThe history of artificial neural networks is filled with colorful, creative individuals from many different fields, many of whom struggled for decades to develop concepts that we now take for granted. This history has been documented by various authors. One particularly interesting book is Neurocomputing: Foundations of Research by John Anderson and Edward Rosenfeld. They have collected and edited a set of some 43 papers of special historical interest. Each paper is preceded by an introduction that puts the paper in historical perspective.Histories of some of the main neural network contributors are included at the beginning of various chapters throughout this text and will not be repeated here. However, it seems appropriate to give a brief overview, a sample of the major developments.At least two ingredients are necessary for the advancement of a technology: concept and implementation. First, one must have a concept, a way of thinking about a topic, some view of it that gives clarity not there before. This may involve a simple idea, or it may be more specific and include a mathematical description. To illustrate this point, consider the history of the heart. It was thought to be, at various times, the center of the soul or a source of heat. In the 17th century medical practitioners finally began to view the heart as a pump, and they designed experiments to study its pumping action. These experiments revolutionized our view of the circulatory system. Without the pump concept, an understanding of the heart was out of grasp.Concepts and their accompanying mathematics are not sufficient for a technology to mature unless there is some way to implement the system. For instance, the mathematics necessary for the reconstruction of images from computer-aided topography (CAT) scans was known many years before the availability of high-speed computers and efficient algorithms finally made it practical to implement a useful CAT system.The history of neural networks has progressed through both conceptual innovations and implementation developments. These advancements, however, seem to have occurred in fits and starts rather than by steady evolution.Some of the background work for the field of neural networks occurred in the late 19th and early 20th centuries. This consisted primarily of interdisciplinary work in physics, psychology and neurophysiology by such scientists as Hermann von Helmholtz, Ernst Much and Ivan Pavlov. This early work emphasized general theories of learning, vision, conditioning, etc.,and did not include specific mathematical models of neuron operation.The modern view of neural networks began in the 1940s with the work of Warren McCulloch and Walter Pitts [McPi43], who showed that networks of artificial neurons could, in principle, compute any arithmetic or logical function. Their work is often acknowledged as the origin of theneural network field.McCulloch and Pitts were followed by Donald Hebb [Hebb49], who proposed that classical conditioning (as discovered by Pavlov) is present because of the properties of individual neurons. He proposed a mechanism for learning in biological neurons.The first practical application of artificial neural networks came in the late 1950s, with the invention of the perception network and associated learning rule by Frank Rosenblatt [Rose58]. Rosenblatt and his colleagues built a perception network and demonstrated its ability to perform pattern recognition. This early success generated a great deal of interest in neural network research. Unfortunately, it was later shown that the basic perception network could solve only a limited class of problems. (See Chapter 4 for more on Rosenblatt and the perception learning rule.)At about the same time, Bernard Widrow and Ted Hoff [WiHo60] introduced a new learning algorithm and used it to train adaptive linear neural networks, which were similar in structure and capability to Rosenblatt’s perception. The Widrow Hoff learning rule is still in use today. (See Chapter 10 for more on Widrow-Hoff learning.) Unfortunately, both Rosenblatt's and Widrow's networks suffered from the same inherent limitations, which were widely publicized in a book by Marvin Minsky and Seymour Papert [MiPa69]. Rosenblatt and Widrow wereaware of these limitations and proposed new networks that would overcome them. However, they were not able to successfully modify their learning algorithms to train the more complex networks.Many people, influenced by Minsky and Papert, believed that further research on neural networks was a dead end. This, combined with the fact that there were no powerful digital computers on which to experiment,caused many researchers to leave the field. For a decade neural network research was largely suspended. Some important work, however, did continue during the 1970s. In 1972 Teuvo Kohonen [Koho72] and James Anderson [Ande72] independently and separately developed new neural networks that could act as memories. Stephen Grossberg [Gros76] was also very active during this period in the investigation of self-organizing networks.Interest in neural networks had faltered during the late 1960s because of the lack of new ideas and powerful computers with which to experiment. During the 1980s both of these impediments were overcome, and researchin neural networks increased dramatically. New personal computers and workstations, which rapidly grew in capability, became widely available. In addition, important new concepts were introduced.Two new concepts were most responsible for the rebirth of neural net works. The first was the use of statistical mechanics to explain the operation of a certain class of recurrent network, which could be used as an associative memory. This was described in a seminal paper by physicist John Hopfield [Hopf82].The second key development of the 1980s was the backpropagation algo rithm for training multilayer perceptron networks, which was discovered independently by several different researchers. The most influential publication of the backpropagation algorithm was by David Rumelhart and James McClelland [RuMc86]. This algorithm was the answer to the criticisms Minsky and Papert had made in the 1960s. (See Chapters 11 and 12 for a development of the backpropagation algorithm.)These new developments reinvigorated the field of neural networks. In the last ten years, thousands of papers have been written, and neural networks have found many applications. The field is buzzing with new theoretical and practical work. As noted below, it is not clear where all of this will lead US.The brief historical account given above is not intended to identify all of the major contributors, but is simply to give the reader some feel for how knowledge in the neuralnetwork field has progressed. As one might note, the progress has not always been "slow but sure." There have been periods of dramatic progress and periods when relatively little has been accomplished.Many of the advances in neural networks have had to do with new concepts, such as innovative architectures and training. Just as important has been the availability of powerful new computers on which to test these new concepts.Well, so much for the history of neural networks to this date. The real question is, "What will happen in the next ten to twenty years?" Will neural networks take a permanent place as a mathematical/engineering tool, or will they fade away as have so many promising technologies? At present, the answer seems to be that neural networks will not only have their day but will have a permanent place, not as a solution to every problem, but as a tool to be used in appropriate situations. In addition, remember that we still know very little about how the brain works. The most important advances in neural networks almost certainly lie in the future.Although it is difficult to predict the future success of neural networks, the large number and wide variety of applications of this new technology are very encouraging. The next section describes some of these applications.ApplicationsA recent newspaper article described the use of neural networks in literature research by Aston University. It stated that "the network can be taught to recognize individual writing styles, and the researchers used it to compare works attributed to Shakespeare and his contemporaries." A popular science television program recently documented the use of neural networks by an Italian research institute to test the purity of olive oil. These examples are indicative of the broad range of applications that can be found for neural networks. The applications are expanding because neural networks are good at solving problems, not just in engineering, science and mathematics, but m medicine, business, finance and literature as well. Their application to a wide variety of problems in many fields makes them very attractive. Also, faster computers and faster algorithms have made it possible to use neural networks to solve complex industrial problems that formerly required too much computation.The following note and Table of Neural Network Applications are reproduced here from the Neural Network Toolbox for MATLAB with the permission of the Math Works, Inc.The 1988 DARPA Neural Network Study [DARP88] lists various neural network applications, beginning with the adaptive channel equalizer in about 1984. This device, which is an outstanding commercial success, is a single-neuron network used in long distance telephone systems to stabilize voice signals. The DARPA report goes on to list other commercial applications, including a small word recognizer, a process monitor, a sonar classifier and a risk analysis system.Neural networks have been applied in many fields since the DARPA report was written. A list of some applications mentioned in the literature follows.AerospaceHigh performance aircraft autopilots, flight path simulations, aircraft control systems, autopilot enhancements, aircraft component simulations, aircraft component fault detectorsAutomotiveAutomobile automatic guidance systems, warranty activity analyzersBankingCheck and other document readers, credit application evaluatorsDefenseWeapon steering, target tracking, object discrimination, facial recognition, new kinds of sensors, sonar, radar and image signal processing including data compression, feature extraction and noise suppression, signal/image identificationElectronicsCode sequence prediction, integrated circuit chip layout, process control, chip failure analysis, machine vision, voice synthesis, nonlinear modelingEntertainmentAnimation, special effects, market forecasting。