Pitch-based segmentation and recognition of dot-matrix text

A Discriminatively Trained, Multiscale, Deformable Part Model

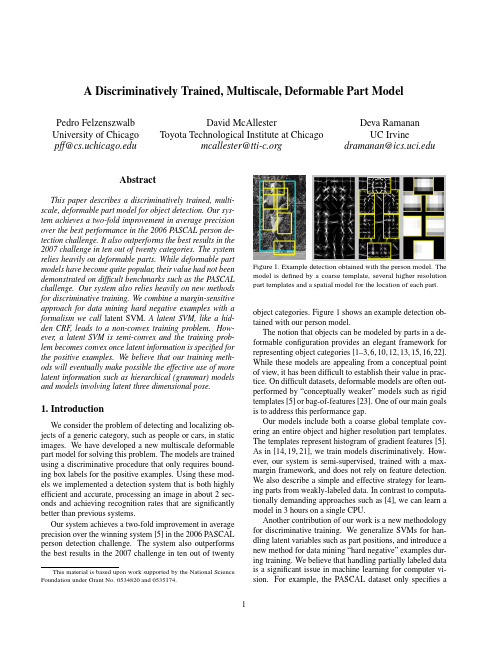

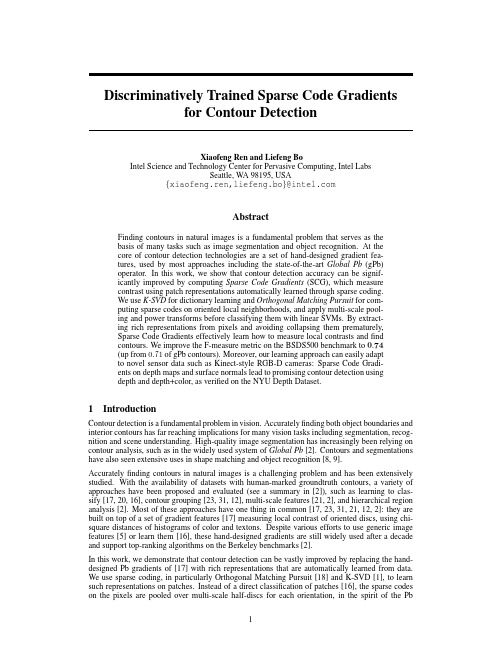

Pedro Felzenszwalb, University of Chicago, pff@
David McAllester, Toyota Technological Institute at Chicago, mcallester@
Deva Ramanan, UC Irvine, dramanan@

Abstract

This paper describes a discriminatively trained, multiscale, deformable part model for object detection. Our system achieves a two-fold improvement in average precision over the best performance in the 2006 PASCAL person detection challenge. It also outperforms the best results in the 2007 challenge in ten out of twenty categories. The system relies heavily on deformable parts. While deformable part models have become quite popular, their value had not been demonstrated on difficult benchmarks such as the PASCAL challenge. Our system also relies heavily on new methods for discriminative training. We combine a margin-sensitive approach for data mining hard negative examples with a formalism we call latent SVM. A latent SVM, like a hidden CRF, leads to a non-convex training problem. However, a latent SVM is semi-convex and the training problem becomes convex once latent information is specified for the positive examples. We believe that our training methods will eventually make possible the effective use of more latent information such as hierarchical (grammar) models and models involving latent three dimensional pose.

1. Introduction

We consider the problem of detecting and localizing objects of a generic category, such as people or cars, in static images. We have developed a new multiscale deformable part model for solving this problem. The models are trained using a discriminative procedure that only requires bounding box labels for the positive examples. Using these models we implemented a detection system that is both highly efficient and accurate, processing an image in about 2 seconds and achieving recognition rates that are significantly better than previous systems.

Our system achieves a two-fold improvement in average precision over the winning system [5] in the 2006 PASCAL person detection challenge. The system also outperforms the best results in the 2007 challenge in ten out of twenty object categories. Figure 1 shows an example detection obtained with our person model.

Figure 1. Example detection obtained with the person model. The model is defined by a coarse template, several higher resolution part templates and a spatial model for the location of each part.

This material is based upon work supported by the National Science Foundation under Grant No. 0534820 and 0535174.

The notion that objects can be modeled by parts in a deformable configuration provides an elegant framework for representing object categories [1–3, 6, 10, 12, 13, 15, 16, 22]. While these models are appealing from a conceptual point of view, it has been difficult to establish their value in practice. On difficult datasets, deformable models are often outperformed by "conceptually weaker" models such as rigid templates [5] or bag-of-features [23]. One of our main goals is to address this performance gap.

Our models include both a coarse global template covering an entire object and higher resolution part templates. The templates represent histogram of gradient features [5]. As in [14, 19, 21], we train models discriminatively. However, our system is semi-supervised, trained with a max-margin framework, and does not rely on feature detection.
We also describe a simple and effective strategy for learning parts from weakly-labeled data. In contrast to computationally demanding approaches such as [4], we can learn a model in 3 hours on a single CPU.

Another contribution of our work is a new methodology for discriminative training. We generalize SVMs for handling latent variables such as part positions, and introduce a new method for data mining "hard negative" examples during training. We believe that handling partially labeled data is a significant issue in machine learning for computer vision. For example, the PASCAL dataset only specifies a bounding box for each positive example of an object. We treat the position of each object part as a latent variable. We also treat the exact location of the object as a latent variable, requiring only that our classifier select a window that has large overlap with the labeled bounding box.

A latent SVM, like a hidden CRF [19], leads to a non-convex training problem. However, unlike a hidden CRF, a latent SVM is semi-convex and the training problem becomes convex once latent information is specified for the positive training examples. This leads to a general coordinate descent algorithm for latent SVMs.

System Overview

Our system uses a scanning window approach. A model for an object consists of a global "root" filter and several part models. Each part model specifies a spatial model and a part filter. The spatial model defines a set of allowed placements for a part relative to a detection window, and a deformation cost for each placement.

The score of a detection window is the score of the root filter on the window plus the sum over parts, of the maximum over placements of that part, of the part filter score on the resulting subwindow minus the deformation cost. This is similar to classical part-based models [10, 13]. Both root and part filters are scored by computing the dot product between a set of weights and histogram of gradient (HOG) features within a window. The root filter is equivalent to a Dalal-Triggs model [5]. The features for the part filters are computed at twice the spatial resolution of the root filter. Our model is defined at a fixed scale, and we detect objects by searching over an image pyramid.

In training we are given a set of images annotated with bounding boxes around each instance of an object. We reduce the detection problem to a binary classification problem. Each example $x$ is scored by a function of the form $f_\beta(x) = \max_z \beta \cdot \Phi(x, z)$. Here $\beta$ is a vector of model parameters and $z$ are latent values (e.g. the part placements). To learn a model we define a generalization of SVMs that we call latent variable SVM (LSVM). An important property of LSVMs is that the training problem becomes convex if we fix the latent values for positive examples. This can be used in a coordinate descent algorithm.

In practice we iteratively apply classical SVM training to triples $(\langle x_1, z_1, y_1 \rangle, \ldots, \langle x_n, z_n, y_n \rangle)$ where $z_i$ is selected to be the best scoring latent label for $x_i$ under the model learned in the previous iteration. An initial root filter is generated from the bounding boxes in the PASCAL dataset. The parts are initialized from this root filter.

2. Model

The underlying building blocks for our models are the Histogram of Oriented Gradient (HOG) features from [5]. We represent HOG features at two different scales. Coarse features are captured by a rigid template covering an entire detection window. Finer scale features are captured by part templates that can be moved with respect to the detection window. The spatial model for the part locations is equivalent to a star graph or 1-fan [3] where the coarse template serves as a reference position.

Figure 2. The HOG feature pyramid and an object hypothesis defined in terms of a placement of the root filter (near the top of the pyramid) and the part filters (near the bottom of the pyramid).

2.1. HOG Representation

We follow the construction in [5] to define a dense representation of an image at a particular resolution. The image is first divided into 8×8 non-overlapping pixel regions, or cells. For each cell we accumulate a 1D histogram of gradient orientations over pixels in that cell. These histograms capture local shape properties but are also somewhat invariant to small deformations.

The gradient at each pixel is discretized into one of nine orientation bins, and each pixel "votes" for the orientation of its gradient, with a strength that depends on the gradient magnitude. For color images, we compute the gradient of each color channel and pick the channel with highest gradient magnitude at each pixel. Finally, the histogram of each cell is normalized with respect to the gradient energy in a neighborhood around it. We look at the four 2×2 blocks of cells that contain a particular cell and normalize the histogram of the given cell with respect to the total energy in each of these blocks. This leads to a vector of length 9×4 representing the local gradient information inside a cell.

We define a HOG feature pyramid by computing HOG features of each level of a standard image pyramid (see Figure 2). Features at the top of this pyramid capture coarse gradients histogrammed over fairly large areas of the input image while features at the bottom of the pyramid capture finer gradients histogrammed over small areas.

2.2. Filters

Filters are rectangular templates specifying weights for subwindows of a HOG pyramid. A $w$ by $h$ filter $F$ is a vector with $w \times h \times 9 \times 4$ weights. The score of a filter is defined by taking the dot product of the weight vector and the features in a $w \times h$ subwindow of a HOG pyramid.

The system in [5] uses a single filter to define an object model. That system detects objects from a particular class by scoring every $w \times h$ subwindow of a HOG pyramid and thresholding the scores.

Let $H$ be a HOG pyramid and $p = (x, y, l)$ be a cell in the $l$-th level of the pyramid. Let $\phi(H, p, w, h)$ denote the vector obtained by concatenating the HOG features in the $w \times h$ subwindow of $H$ with top-left corner at $p$. The score of $F$ on this detection window is $F \cdot \phi(H, p, w, h)$. Below we use $\phi(H, p)$ to denote $\phi(H, p, w, h)$ when the dimensions are clear from context.

2.3. Deformable Parts

Here we consider models defined by a coarse root filter that covers the entire object and higher resolution part filters covering smaller parts of the object. Figure 2 illustrates a placement of such a model in a HOG pyramid. The root filter location defines the detection window (the pixels inside the cells covered by the filter). The part filters are placed several levels down in the pyramid, so the HOG cells at that level have half the size of cells in the root filter level.

We have found that using higher resolution features for defining part filters is essential for obtaining high recognition performance. With this approach the part filters represent finer resolution edges that are localized to greater accuracy when compared to the edges represented in the root filter. For example, consider building a model for a face.
The root filter could capture coarse resolution edges such as the face boundary while the part filters could capture details such as eyes, nose and mouth.

The model for an object with $n$ parts is formally defined by a root filter $F_0$ and a set of part models $(P_1, \ldots, P_n)$ where $P_i = (F_i, v_i, s_i, a_i, b_i)$. Here $F_i$ is a filter for the $i$-th part, $v_i$ is a two-dimensional vector specifying the center for a box of possible positions for part $i$ relative to the root position, $s_i$ gives the size of this box, while $a_i$ and $b_i$ are two-dimensional vectors specifying coefficients of a quadratic function measuring a score for each possible placement of the $i$-th part. Figure 1 illustrates a person model.

A placement of a model in a HOG pyramid is given by $z = (p_0, \ldots, p_n)$, where $p_i = (x_i, y_i, l_i)$ is the location of the root filter when $i = 0$ and the location of the $i$-th part when $i > 0$. We assume the level of each part is such that a HOG cell at that level has half the size of a HOG cell at the root level. The score of a placement is given by the scores of each filter (the data term) plus a score of the placement of each part relative to the root (the spatial term),

$$\sum_{i=0}^{n} F_i \cdot \phi(H, p_i) + \sum_{i=1}^{n} a_i \cdot (\tilde{x}_i, \tilde{y}_i) + b_i \cdot (\tilde{x}_i^2, \tilde{y}_i^2), \qquad (1)$$

where $(\tilde{x}_i, \tilde{y}_i) = ((x_i, y_i) - 2(x, y) + v_i)/s_i$ gives the location of the $i$-th part relative to the root location. Both $\tilde{x}_i$ and $\tilde{y}_i$ should be between $-1$ and $1$.

There is a large (exponential) number of placements for a model in a HOG pyramid. We use dynamic programming and distance transform techniques [9, 10] to compute the best location for the parts of a model as a function of the root location. This takes $O(nk)$ time, where $n$ is the number of parts in the model and $k$ is the number of cells in the HOG pyramid. To detect objects in an image we score root locations according to the best possible placement of the parts and threshold this score.

The score of a placement $z$ can be expressed in terms of the dot product, $\beta \cdot \psi(H, z)$, between a vector of model parameters $\beta$ and a vector $\psi(H, z)$,

$$\beta = (F_0, \ldots, F_n, a_1, b_1, \ldots, a_n, b_n),$$

$$\psi(H, z) = (\phi(H, p_0), \phi(H, p_1), \ldots, \phi(H, p_n), \tilde{x}_1, \tilde{y}_1, \tilde{x}_1^2, \tilde{y}_1^2, \ldots, \tilde{x}_n, \tilde{y}_n, \tilde{x}_n^2, \tilde{y}_n^2).$$

We use this representation for learning the model parameters as it makes a connection between our deformable models and linear classifiers.

One interesting aspect of the spatial models defined here is that we allow for the coefficients $(a_i, b_i)$ to be negative. This is more general than the quadratic "spring" cost that has been used in previous work.

3. Learning

The PASCAL training data consists of a large set of images with bounding boxes around each instance of an object. We reduce the problem of learning a deformable part model with this data to a binary classification problem. Let $D = (\langle x_1, y_1 \rangle, \ldots, \langle x_n, y_n \rangle)$ be a set of labeled examples where $y_i \in \{-1, 1\}$ and $x_i$ specifies a HOG pyramid, $H(x_i)$, together with a range, $Z(x_i)$, of valid placements for the root and part filters. We construct a positive example from each bounding box in the training set. For these examples we define $Z(x_i)$ so the root filter must be placed to overlap the bounding box by at least 50%. Negative examples come from images that do not contain the target object.
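Returning to the placement score of equation (1) and its linear form $\beta \cdot \psi(H, z)$, the following is a minimal sketch rather than the authors' implementation. It assumes that each filter $F_i$ and each feature window $\phi(H, p_i)$ has already been flattened into a plain list of floats, and that $s_i$ is a scalar; the function names and the toy numbers are hypothetical and chosen only for illustration.

```python
from typing import List, Sequence, Tuple

Vector = Sequence[float]


def dot(u: Vector, v: Vector) -> float:
    """Plain dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def normalized_offset(part_xy, root_xy, v, s) -> Tuple[float, float]:
    """((x_i, y_i) - 2 (x, y) + v_i) / s_i, as written under equation (1)."""
    return (
        (part_xy[0] - 2 * root_xy[0] + v[0]) / s,
        (part_xy[1] - 2 * root_xy[1] + v[1]) / s,
    )


def placement_score(filters, features, a, b, offsets) -> float:
    """Equation (1): data term over root and parts plus spatial term over parts."""
    data_term = sum(dot(f, phi) for f, phi in zip(filters, features))
    spatial_term = sum(
        dot(a_i, (dx, dy)) + dot(b_i, (dx * dx, dy * dy))
        for a_i, b_i, (dx, dy) in zip(a, b, offsets)
    )
    return data_term + spatial_term


def as_linear_model(filters, features, a, b, offsets) -> Tuple[List[float], List[float]]:
    """Flatten everything into beta and psi(H, z) so that score == dot(beta, psi)."""
    beta = [w for f in filters for w in f]
    psi = [x for phi in features for x in phi]
    for a_i, b_i, (dx, dy) in zip(a, b, offsets):
        beta += [a_i[0], a_i[1], b_i[0], b_i[1]]
        psi += [dx, dy, dx * dx, dy * dy]
    return beta, psi


# Toy model (hypothetical numbers): one root filter and one part, 2 weights each.
filters = [[1.0, 2.0], [0.5, -1.0]]
features = [[3.0, 1.0], [2.0, 4.0]]
a = [(0.0, 0.0)]    # linear deformation coefficients a_1
b = [(-1.0, -1.0)]  # quadratic deformation coefficients b_1
offsets = [normalized_offset((11, 7), (5, 4), (0, 0), 2)]

score = placement_score(filters, features, a, b, offsets)
beta, psi = as_linear_model(filters, features, a, b, offsets)
```

In this toy case the direct sum and the dot product `dot(beta, psi)` give the same value, which is the property that lets the model be trained as a linear classifier.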
Each placement of the root filter in such an image yields a negative training example.

Note that for the positive examples we treat both the part locations and the exact location of the root filter as latent variables. We have found that allowing uncertainty in the root location during training significantly improves the performance of the system (see Section 4).

3.1. Latent SVMs

A latent SVM is defined as follows. We assume that each example $x$ is scored by a function of the form

$$f_\beta(x) = \max_{z \in Z(x)} \beta \cdot \Phi(x, z), \qquad (2)$$

where $\beta$ is a vector of model parameters and $z$ is a set of latent values. For our deformable models we define $\Phi(x, z) = \psi(H(x), z)$ so that $\beta \cdot \Phi(x, z)$ is the score of placing the model according to $z$.

In analogy to classical SVMs we would like to train $\beta$ from labeled examples $D = (\langle x_1, y_1 \rangle, \ldots, \langle x_n, y_n \rangle)$ by optimizing the following objective function,

$$\beta^*(D) = \operatorname*{argmin}_{\beta} \; \lambda \|\beta\|^2 + \sum_{i=1}^{n} \max(0, 1 - y_i f_\beta(x_i)). \qquad (3)$$

By restricting the latent domains $Z(x_i)$ to a single choice, $f_\beta$ becomes linear in $\beta$, and we obtain linear SVMs as a special case of latent SVMs. Latent SVMs are instances of the general class of energy-based models [18].

3.2. Semi-Convexity

Note that $f_\beta(x)$ as defined in (2) is a maximum of functions each of which is linear in $\beta$. Hence $f_\beta(x)$ is convex in $\beta$. This implies that the hinge loss $\max(0, 1 - y_i f_\beta(x_i))$ is convex in $\beta$ when $y_i = -1$. That is, the loss function is convex in $\beta$ for negative examples. We call this property of the loss function semi-convexity.

Consider an LSVM where the latent domains $Z(x_i)$ for the positive examples are restricted to a single choice. The loss due to each positive example is now convex. Combined with the semi-convexity property, (3) becomes convex in $\beta$.

If the labels for the positive examples are not fixed we can compute a local optimum of (3) using a coordinate descent algorithm:

1. Holding $\beta$ fixed, optimize the latent values for the positive examples, $z_i = \operatorname*{argmax}_{z \in Z(x_i)} \beta \cdot \Phi(x_i, z)$.
2. Holding $\{z_i\}$ fixed for positive examples, optimize $\beta$ by solving the convex problem defined above.

It can be shown that both steps always improve or maintain the value of the objective function in (3). If both steps maintain the value we have a strong local optimum of (3), in the sense that Step 1 searches over an exponentially large space of latent labels for positive examples while Step 2 simultaneously searches over weight vectors and an exponentially large space of latent labels for negative examples.

3.3. Data Mining Hard Negatives

In object detection the vast majority of training examples are negative. This makes it infeasible to consider all negative examples at a time. Instead, it is common to construct training data consisting of the positive instances and "hard negative" instances, where the hard negatives are data mined from the very large set of possible negative examples.

Here we describe a general method for data mining examples for SVMs and latent SVMs. The method iteratively solves subproblems using only hard instances. The innovation of our approach is a theoretical guarantee that it leads to the exact solution of the training problem defined using the complete training set. Our results require the use of a margin-sensitive definition of hard examples.

The results described here apply both to classical SVMs and to the problem defined by Step 2 of the coordinate descent algorithm for latent SVMs. We omit the proofs of the theorems due to lack of space. These results are related to working set methods [17].

We define the hard instances of $D$ relative to $\beta$ as

$$M(\beta, D) = \{\langle x, y \rangle \in D \mid y f_\beta(x) \le 1\}. \qquad (4)$$

That is, $M(\beta, D)$ are training examples that are incorrectly classified or near the margin of the classifier defined by $\beta$. We can show that $\beta^*(D)$ only depends on hard instances.
Theorem 1. Let $C$ be a subset of the examples in $D$. If $M(\beta^*(D), D) \subseteq C$ then $\beta^*(C) = \beta^*(D)$.

This implies that in principle we could train a model using a small set of examples. However, this set is defined in terms of the optimal model $\beta^*(D)$.

Given a fixed $\beta$ we can use $M(\beta, D)$ to approximate $M(\beta^*(D), D)$. This suggests an iterative algorithm where we repeatedly compute a model from the hard instances defined by the model from the last iteration. This is further justified by the following fixed-point theorem.

Theorem 2. If $\beta^*(M(\beta, D)) = \beta$ then $\beta = \beta^*(D)$.

Let $C$ be an initial "cache" of examples. In practice we can take the positive examples together with random negative examples. Consider the following iterative algorithm:

1. Let $\beta := \beta^*(C)$.
2. Shrink $C$ by letting $C := M(\beta, C)$.
3. Grow $C$ by adding examples from $M(\beta, D)$ up to a memory limit $L$.

Theorem 3. If $|C| < L$ after each iteration of Step 2, the algorithm will converge to $\beta = \beta^*(D)$ in finite time.

3.4. Implementation details

Many of the ideas discussed here are only approximately implemented in our current system. In practice, when training a latent SVM we iteratively apply classical SVM training to triples $\langle x_1, z_1, y_1 \rangle, \ldots, \langle x_n, z_n, y_n \rangle$ where $z_i$ is selected to be the best scoring latent label for $x_i$ under the model trained in the previous iteration. Each of these triples leads to an example $\langle \Phi(x_i, z_i), y_i \rangle$ for training a linear classifier. This allows us to use a highly optimized SVM package (SVMLight [17]). On a single CPU, the entire training process takes 3 to 4 hours per object class in the PASCAL datasets, including initialization of the parts.

Root Filter Initialization: For each category, we automatically select the dimensions of the root filter by looking at statistics of the bounding boxes in the training data (see footnote 1). We train an initial root filter $F_0$ using an SVM with no latent variables. The positive examples are constructed from the unoccluded training examples (as labeled in the PASCAL data). These examples are anisotropically scaled to the size and aspect ratio of the filter. We use random subwindows from negative images to generate negative examples.

Footnote 1: We picked a simple heuristic by cross-validating over 5 object classes. We set the model aspect to be the most common (mode) aspect in the data. We set the model size to be the largest size not larger than 80% of the data.

Root Filter Update: Given the initial root filter trained as above, for each bounding box in the training set we find the best-scoring placement for the filter that significantly overlaps with the bounding box. We do this using the original, un-scaled images. We retrain $F_0$ with the new positive set and the original random negative set, iterating twice.

Part Initialization: We employ a simple heuristic to initialize six parts from the root filter trained above. First, we select an area $a$ such that $6a$ equals 80% of the area of the root filter. We greedily select the rectangular region of area $a$ from the root filter that has the most positive energy. We zero out the weights in this region and repeat until six parts are selected. The part filters are initialized from the root filter values in the subwindow selected for the part, but filled in to handle the higher spatial resolution of the part. The initial deformation costs measure the squared norm of a displacement with $a_i = (0, 0)$ and $b_i = -(1, 1)$.

Model Update: To update a model we construct new training data triples. For each positive bounding box in the training data, we apply the existing detector at all positions and scales with at least a 50% overlap with the given bounding box. Among these we select the highest scoring placement as the positive example corresponding to this training bounding box (Figure 3). Negative examples are selected by finding high scoring detections in images not containing the target object. We add negative examples to a cache until we encounter file size limits. A new model is trained by running SVMLight on the positive and negative examples, each labeled with part placements. We update the model 10 times using the cache scheme described above. In each iteration we keep the hard instances from the previous cache and add as many new hard instances as possible within the memory limit. Toward the final iterations, we are able to include all hard instances, $M(\beta, D)$, in the cache.

Figure 3. The image on the left shows the optimization of the latent variables for a positive example. The dotted box is the bounding box label provided in the PASCAL training set. The large solid box shows the placement of the detection window while the smaller solid boxes show the placements of the parts. The image on the right shows a hard-negative example.

4. Results

We evaluated our system using the PASCAL VOC 2006 and 2007 comp3 challenge datasets and protocol. We refer to [7, 8] for details, but emphasize that both challenges are widely acknowledged as difficult testbeds for object detection. Each dataset contains several thousand images of real-world scenes. The datasets specify ground-truth bounding boxes for several object classes, and a detection is considered correct when it overlaps more than 50% with a ground-truth bounding box. One scores a system by the average precision (AP) of its precision-recall curve across a test set.

Recent work in pedestrian detection has tended to report detection rates versus false positives per window, measured with cropped positive examples and negative images without objects of interest. These scores are tied to the resolution of the scanning window search and ignore effects of non-maximum suppression, making it difficult to compare different systems. We believe the PASCAL scoring method gives a more reliable measure of performance.

The 2007 challenge has 20 object categories. We entered a preliminary version of our system in the official competition, and obtained the best score in 6 categories. Our current system obtains the highest score in 10 categories, and the second highest score in 6 categories. Table 1 summarizes the results.

Our system performs well on rigid objects such as cars and sofas as well as highly deformable objects such as persons and horses. We also note that our system is successful when given a large or small amount of training data. There are roughly 4700 positive training examples in the person category but only 250 in the sofa category. Figure 4 shows some of the models we learned. Figure 5 shows some example detections.

| Class | Our rank | Our score | INRIA Normal | INRIA Plus | MPI Center | MPI ESSOL | TKK |
|---|---|---|---|---|---|---|---|
| aero | 3 | .180 | .092 | .136 | .060 | .152 | .186 |
| bike | 1 | .411 | .246 | .287 | .110 | .157 | .078 |
| bird | 2 | .092 | .012 | .041 | .028 | .098 | .043 |
| boat | 1 | .098 | .002 | .025 | .031 | .016 | .072 |
| bottle | 1 | .249 | .068 | .077 | .000 | .001 | .002 |
| bus | 2 | .349 | .197 | .279 | .164 | .186 | .116 |
| car | 2 | .396 | .265 | .294 | .172 | .120 | .184 |
| cat | 4 | .110 | .018 | .132 | .208 | .240 | .050 |
| chair | 1 | .155 | .097 | .106 | .002 | .007 | .028 |
| cow | 1 | .165 | .039 | .127 | .044 | .061 | .100 |
| table | 1 | .110 | .017 | .067 | .049 | .098 | .086 |
| dog | 4 | .062 | .016 | .071 | .141 | .162 | .126 |
| horse | 2 | .301 | .225 | .335 | .198 | .034 | .186 |
| mbike | 2 | .337 | .153 | .249 | .170 | .208 | .135 |
| person | 1 | .267 | .121 | .092 | .091 | .117 | .061 |
| plant | 1 | .140 | .093 | .072 | .004 | .002 | .019 |
| sheep | 2 | .141 | .002 | .011 | .091 | .046 | .036 |
| sofa | 1 | .156 | .102 | .092 | .034 | .147 | .058 |
| train | 4 | .206 | .157 | .242 | .237 | .110 | .067 |
| tv | 1 | .336 | .242 | .275 | .051 | .054 | .090 |

Darmstadt, IRISA and Oxford were tested on only a subset of the classes. Their scores, in table order, are: Darmstadt .301; IRISA .281, .318, .026, .097, .119, .289, .227, .221, .175, .253; Oxford .262, .409, .393, .432, .375, .334.

Table 1. PASCAL VOC 2007 results. Average precision scores of our system and other systems that entered the competition [7]. Systems with missing scores were not tested in the corresponding classes. Our current system ranks first in 10 out of 20 classes. A preliminary version of our system ranked first in 6 classes in the official competition.

Figure 4. Some models learned from the PASCAL VOC 2007 dataset (panels: Bottle, Car, Bicycle, Sofa). We show the total energy in each orientation of the HOG cells in the root and part filters, with the part filters placed at the center of the allowable displacements. We also show the spatial model for each part, where bright values represent "cheap" placements, and dark values represent "expensive" placements.

We evaluated different components of our system on the longer-established 2006 person dataset. The top AP score in the PASCAL competition was .16, obtained using a rigid template model of HOG features [5]. The best previous result of .19 adds a segmentation-based verification step [20]. Figure 6 summarizes the performance of several models we trained. Our root-only model is equivalent to the model from [5] and it scores slightly higher at .18. Performance jumps to .24 when the model is trained with a LSVM that selects a latent position and scale for each positive example. This suggests LSVMs are useful even for rigid templates because they allow for self-adjustment of the detection window in the training examples. Adding deformable parts increases performance to .34 AP, a factor of two above the best previous score. Finally, we trained a model with parts but no root filter and obtained .29 AP. This illustrates the advantage of using a multiscale representation.

We also investigated the effect of the spatial model and allowable deformations on the 2006 person dataset. Recall that $s_i$ is the allowable displacement of a part, measured in HOG cells. We trained a rigid model with high-resolution parts by setting $s_i$ to 0. This model outperforms the root-only system by .27 to .24. If we increase the amount of allowable displacements without using a deformation cost, we start to approach a bag-of-features. Performance peaks at $s_i = 1$, suggesting it is useful to constrain the part displacements. The optimal strategy allows for larger displacements while using an explicit deformation cost. The following table shows AP as a function of freely allowable deformation in the first three columns. The last column gives the performance when using a quadratic deformation cost and an allowable displacement of 2 HOG cells.

| $s_i$ | 0 | 1 | 2 | 3 | 2 + quadratic cost |
|---|---|---|---|---|---|
| AP | .27 | .33 | .31 | .31 | .34 |

Figure 5. Some results from the PASCAL 2007 dataset. Each row shows detections using a model for a specific class (Person, Bottle, Car, Sofa, Bicycle, Horse). The first three columns show correct detections while the last column shows false positives. Our system is able to detect objects over a wide range of scales (such as the cars) and poses (such as the horses). The system can also detect partially occluded objects such as a person behind a bush. Note how the false detections are often quite reasonable, for example detecting a bus with the car model, a bicycle sign with the bicycle model, or a dog with the horse model. In general the part filters represent meaningful object parts that are well localized in each detection such as the head in the person model.

Figure 6. Evaluation of our system on the PASCAL VOC 2006 person dataset. Root uses only a root filter and no latent placement of the detection windows on positive examples. Root+Latent uses a root filter with latent placement of the detection windows. Parts+Latent is a part-based system with latent detection windows but no root filter. Root+Parts+Latent includes both root and part filters, and latent placement of the detection windows.

5. Discussion

We introduced a general framework for training SVMs with latent structure. We used it to build a recognition system based on multiscale, deformable models. Experimental results on difficult benchmark data suggest our system is the current state-of-the-art in object detection.

LSVMs allow for exploration of additional latent structure for recognition. One can consider deeper part hierarchies (parts with parts), mixture models (frontal vs. side cars), and three-dimensional pose. We would like to train and detect multiple classes together using a shared vocabulary of parts (perhaps visual words). We also plan to use A* search [11] to efficiently search over latent parameters during detection.

References

[1] Y. Amit and A. Trouve. POP: Patchwork of parts models for object recognition. IJCV, 75(2):267–282, November 2007.
[2] M. Burl, M. Weber, and P. Perona. A probabilistic approach to object recognition using local photometry and global geometry. In ECCV, pages II:628–641, 1998.
[3] D. Crandall, P. Felzenszwalb, and D. Huttenlocher. Spatial priors for part-based recognition using statistical models. In CVPR, pages 10–17, 2005.
[4] D. Crandall and D. Huttenlocher. Weakly supervised learning of part-based spatial models for visual object recognition. In ECCV, pages I:16–29, 2006.
[5] N. Dalal and B. Triggs. Histograms of oriented gradients for human detection. In CVPR, pages I:886–893, 2005.
[6] B. Epshtein and S. Ullman. Semantic hierarchies for recognizing objects and parts. In CVPR, 2007.
[7] M. Everingham, L. Van Gool, C. K. I. Williams, J. Winn, and A. Zisserman. The PASCAL Visual Object Classes Challenge 2007 (VOC2007) Results. /challenges/VOC/voc2007/workshop.
[8] M. Everingham, A. Zisserman, C. K. I. Williams, and L. Van Gool. The PASCAL Visual Object Classes Challenge 2006 (VOC2006) Results. /challenges/VOC/voc2006/results.pdf.
[9] P. Felzenszwalb and D. Huttenlocher. Distance transforms of sampled functions. Cornell Computing and Information Science Technical Report TR2004-1963, September 2004.
[10] P. Felzenszwalb and D. Huttenlocher. Pictorial structures for object recognition. IJCV, 61(1), 2005.
[11] P. Felzenszwalb and D. McAllester. The generalized A* architecture. JAIR, 29:153–190, 2007.
[12] R. Fergus, P. Perona, and A. Zisserman. Object class recognition by unsupervised scale-invariant learning. In CVPR, 2003.
[13] M. Fischler and R. Elschlager. The representation and matching of pictorial structures. IEEE Transactions on Computer, 22(1):67–92, January 1973.
[14] A. Holub and P. Perona. A discriminative framework for modelling object classes. In CVPR, pages I:664–671, 2005.
[15] S. Ioffe and D. Forsyth. Probabilistic methods for finding people. IJCV, 43(1):45–68, June 2001.
[16] Y. Jin and S. Geman. Context and hierarchy in a probabilistic image model. In CVPR, pages II:2145–2152, 2006.
[17] T. Joachims. Making large-scale svm learning practical. In B. Schölkopf, C. Burges, and A. Smola, editors, Advances in Kernel Methods - Support Vector Learning. MIT Press, 1999.
[18] Y. LeCun, S. Chopra, R. Hadsell, R. Marc'Aurelio, and F. Huang. A tutorial on energy-based learning. In G. Bakir, T. Hofman, B. Schölkopf, A. Smola, and B. Taskar, editors, Predicting Structured Data. MIT Press, 2006.
[19] A. Quattoni, S. Wang, L. Morency, M. Collins, and T. Darrell. Hidden conditional random fields. PAMI, 29(10):1848–1852, October 2007.
[20] D. Ramanan. Using segmentation to verify object hypotheses. In CVPR, pages 1–8, 2007.
[21] D. Ramanan and C. Sminchisescu. Training deformable models for localization. In CVPR, pages I:206–213, 2006.
[22] H. Schneiderman and T. Kanade. Object detection using the statistics of parts. IJCV, 56(3):151–177, February 2004.
[23] J. Zhang, M. Marszalek, S. Lazebnik, and C. Schmid. Local features and kernels for classification of texture and object categories: A comprehensive study. IJCV, 73(2):213–238, June 2007.

A Deep Learning Modulation Recognition Algorithm Based on Time-Frequency Analysis

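In communication systems, transmitted signals are usually modulated with different modulation schemes to achieve efficient data transmission.

Modulation recognition is an intermediate step between signal detection and signal demodulation [1]. Automatic Modulation Recognition (AMR) identifies the modulation information of a signal and plays a key role in practical civilian and military applications such as cognitive radio, signal identification, and spectrum monitoring [2].

AMR algorithms generally fall into two categories: likelihood-based methods and feature-based methods.

Likelihood-based methods use probability theory, hypothesis testing theory, and an appropriate decision criterion to solve the modulation recognition problem [3], but they are computationally very expensive. Feature-based methods extract features from the modulated signal and classify them, and their recognition performance depends on the number of features extracted.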

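Various statistical features of modulated signals, such as instantaneous amplitude, phase, and frequency, have been used to recognize modulation schemes, as have cyclostationary properties and higher-order statistics [4-5].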
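As an illustration of the instantaneous features mentioned above, the following sketch computes instantaneous amplitude, phase, and frequency from sampled I/Q data. It is a minimal example under stated assumptions, not a method taken from the cited references: the function name `instantaneous_features` is hypothetical, the signal is assumed to be available as baseband I and Q sample lists, and frequency is estimated from the wrapped phase difference between consecutive samples.

```python
import math
from typing import List, Sequence, Tuple


def instantaneous_features(
    i_samples: Sequence[float], q_samples: Sequence[float], sample_rate: float
) -> Tuple[List[float], List[float], List[float]]:
    """Return (amplitude, phase in radians, frequency in Hz) for I/Q samples."""
    amplitude = [math.hypot(i, q) for i, q in zip(i_samples, q_samples)]
    phase = [math.atan2(q, i) for i, q in zip(i_samples, q_samples)]
    frequency = []
    for prev, cur in zip(phase, phase[1:]):
        # Wrap the phase step into [-pi, pi) so jumps at +/-pi do not
        # show up as spurious frequency spikes.
        step = (cur - prev + math.pi) % (2 * math.pi) - math.pi
        frequency.append(step * sample_rate / (2 * math.pi))
    return amplitude, phase, frequency


# Example: a complex tone at 100 Hz sampled at 1 kHz (values chosen for illustration).
fs, f0 = 1000.0, 100.0
i_sig = [math.cos(2 * math.pi * f0 * n / fs) for n in range(50)]
q_sig = [math.sin(2 * math.pi * f0 * n / fs) for n in range(50)]
amp, ph, freq = instantaneous_features(i_sig, q_sig, fs)
```

For this tone the amplitude stays at 1 and every frequency estimate is close to 100 Hz; for a real modulated signal, statistics of these three sequences would serve as classifier inputs.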

现有基于特征的分类器包括决策树算法和机器学习算法,如朴素贝叶斯和人工神经网络。

近年来,作为一种强大的机器学习方法的深度学习在图像分类和语音识别等方面取得了巨大的成功。

基于深度学习的方法级联多层非线性处理单元对输入数据自动优化提取特征,以最大程度地减少分类错误。

深度学习方法也已应用于调制识别[6-7]。

文献[8]论述了在无线电信号处理中的深度学习新兴应用,并使用GNU Ra⁃dio 软件生成了具有同相(in-phase ,I )和正交(quadrature ,Q )分量的调制信号的开放数据集,从而进行调制识别;文献[9]在开源数据集上应用CNN 网络结构进行识别,相对于基于循环特征的识别算法,识别性能更优;文献[10]提出使用基于CNN 、起始网络(inception network )、卷积长短时记忆全连接网络(ConVoluional ,Long Short -Term Memory ,Fully Connected Deep Neural Network ,CLDNN )和残差神经网络(ResidualNetwork ,ResNet )对调制信号数据集进行识别同时对各自识别性能进行比较;文献[11]提出将调制信号的同相、正交分量以及四阶高阶累积量一起组成数据集来提升调制识别性能。
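To make the fourth-order cumulant features mentioned for reference [11] more concrete, the following minimal sketch computes two commonly used cumulants, C40 and C42, from complex I/Q samples. The text does not say which cumulants [11] uses, so the choice of C40 and C42, the zero-mean formulas in the comments, and the function names are all assumptions of this illustration.

```python
import math


def moment(samples, p, q):
    """Mixed moment M_pq = mean(x**(p - q) * conj(x)**q) of complex samples."""
    total = sum((x ** (p - q)) * (x.conjugate() ** q) for x in samples)
    return total / len(samples)


def fourth_order_cumulants(samples):
    """Return (C40, C42) for zero-mean complex baseband samples.

    Assumed definitions (standard zero-mean forms):
      C40 = M40 - 3 * M20**2
      C42 = M42 - |M20|**2 - 2 * M21**2
    """
    m20 = moment(samples, 2, 0)
    m21 = moment(samples, 2, 1)
    m40 = moment(samples, 4, 0)
    m42 = moment(samples, 4, 2)
    c40 = m40 - 3 * m20 ** 2
    c42 = m42 - abs(m20) ** 2 - 2 * m21 ** 2
    return c40, c42


# Noise-free, unit-power constellations with every symbol equally likely.
s = 1 / math.sqrt(2)
bpsk = [complex(1, 0), complex(-1, 0)]
qpsk = [complex(a * s, b * s) for a in (1, -1) for b in (1, -1)]

bpsk_c40, bpsk_c42 = fourth_order_cumulants(bpsk)
qpsk_c40, qpsk_c42 = fourth_order_cumulants(qpsk)
```

On these ideal constellations the two schemes yield different cumulant values, which is why such statistics can serve as extra input features alongside the raw I/Q samples. With real, noisy signals the estimates scatter around these ideal values.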

Fast 3D Recognition and Pose Using the Viewpoint Feature Histogram

Radu Bogdan Rusu, Gary Bradski, Romain Thibaux, John Hsu
Willow Garage, 68 Willow Rd., Menlo Park, CA 94025, USA
{rusu, bradski, thibaux, hsu}@

Abstract—We present the Viewpoint Feature Histogram (VFH), a descriptor for 3D point cloud data that encodes geometry and viewpoint. We demonstrate experimentally on a set of 60 objects captured with stereo cameras that VFH can be used as a distinctive signature, allowing simultaneous recognition of the object and its pose. The pose is accurate enough for robot manipulation, and the computational cost is low enough for real-time operation. VFH was designed to be robust to large surface noise and missing depth information in order to work reliably on stereo data.

I. INTRODUCTION

As part of a long-term goal to develop reliable capabilities in the area of perception for mobile manipulation, we address a table-top manipulation task involving objects that can be manipulated by one robot hand. Our robot is shown in Fig. 1. In order to manipulate an object, the robot must reliably identify it, as well as its 6 degree-of-freedom (6DOF) pose.
This paper proposes a method to identify both at the same time, reliably and at high speed.

We make the following assumptions.

• Objects are rigid and relatively Lambertian. They can be shiny, but not reflective or transparent.
• Objects are in light clutter. They can be easily segmented in 3D and can be grabbed by the robot hand without obstruction.
• The item of interest can be grabbed directly, so it is not occluded.
• Items can be grasped even given an approximate pose. The gripper on our robot can open to 9 cm and each grip is 2.5 cm wide, which allows an 8.5 cm wide object to be grasped when the pose is off by +/-10 degrees.

Despite these assumptions our problem has several properties that make the task difficult.

• The objects need not contain texture.
• Our dataset includes objects of very similar shapes, for example many slight variations of typical wine glasses.
• To be usable, the recognition accuracy must be very high, typically much higher than, say, for image retrieval tasks, since false positives have very high costs and so must be kept extremely rare.
• To interact usefully with humans, recognition cannot take more than a fraction of a second. This puts constraints on computation, but more importantly this precludes the use of accurate but slow 3D acquisition using lasers. Instead we rely on stereo data, which suffers from higher noise and missing data.

Fig. 1. A PR2 robot from Willow Garage, showing its grippers and stereo cameras.

Our focus is perception for mobile manipulation. Working on a mobile versus a stationary robot means that we can't depend on instrumenting the external world with active vision systems or special lighting, but we can put such devices on the robot. In our case, we use projected texture¹ to yield dense stereo depth maps at 30 Hz. We also cannot ensure environmental conditions. We may move from a sunlit room to a dim hallway into a room with no light at all. The projected texture gives us a fair amount of resilience to local lighting conditions as well.

¹ Not structured light; this is random texture.

Although this paper focuses on 3D depth features, 2D imagery is clearly important, for example for shiny and transparent objects, or to distinguish items based on texture such as telling apart a Coke can from a Diet Coke can. In our case, the textured light alternates with no light to allow for 2D imagery aligned with the texture-based dense depth; however, adding 2D visual features will be studied in future work. Here, we look for an effective purely 3D feature.

Our philosophy is that one should use or design a recognition algorithm that fits one's engineering needs such as scalability, training speed, incremental training needs, and so on, and then find features that make the recognition performance of that architecture meet one's specifications. For reasons of online training, and because of large memory availability, we choose fast approximate K-Nearest Neighbors (K-NN) implemented in the FLANN library [1] as our recognition architecture. The key contribution of this paper is then the design of a new, computationally efficient 3D feature that yields object recognition and 6DOF pose.

The structure of this paper is as follows: Related work is described in Section II. Next, we give a brief description of our system architecture in Section III. We discuss our surface normal and segmentation algorithm in Section IV followed by a discussion of the Viewpoint Feature Histogram in Section V. Experimental setup and resulting computational and recognition performance are described in Section VI. Conclusions and future work are discussed in Section VII.

II. RELATED WORK

The problem that we are trying to solve requires global (3D object level) classification based on estimated features.
This has been under investigation for a long time in various research fields, such as computer graphics, robotics, and pattern matching; see [2]–[4] for comprehensive reviews. We address the most relevant work below.

Some of the widely used 3D point feature extraction approaches include: spherical harmonic invariants [5], spin images [6], curvature maps [7], or more recently, Point Feature Histograms (PFH) [8], and conformal factors [9]. Spherical harmonic invariants and spin images have been successfully used for the problem of object recognition for densely sampled datasets, though their performance seems to degrade for noisier and sparser datasets [4]. Our stereo data is noisier and sparser than typical line scan data, which motivated the use of our new features. Conformal factors are based on conformal geometry, which is invariant to isometric transformations, and thus obtains good results on databases of watertight models. Its main drawback is that it can only be applied to manifold meshes, which can be problematic in stereo. Curvature maps and PFH descriptors have been studied in the context of local shape comparisons for data registration. A side study [10] applied the PFH descriptors to the problem of surface classification into 3D geometric primitives, although only for data acquired using precise laser sensors. A different point fingerprint representation using the projections of geodesic circles onto the tangent plane at a point p_i was proposed in [11] for the problem of surface registration. As the authors note, geodesic distances are more sensitive to surface sampling noise, and thus are unsuitable for real sensed data without a priori smoothing and reconstruction. A decomposition of objects into parts learned using spin images is presented in [12] for the problem of vehicle identification.

Methods relying on global features include descriptors such as Extended Gaussian Images (EGI) [13], eigen shapes [14], or shape distributions [15]. The latter samples statistics of the entire object and represents them as distributions of shape properties; however, they do not take into account how the features are distributed over the surface of the object. Eigen shapes show promising results but they have limits on their discrimination ability since important higher order variances are discarded. EGIs describe objects based on the unit normal sphere, but have problems handling arbitrarily curved objects.

The work in [16] makes use of spin-image signatures and normal-based signatures to achieve classification rates over 90% with synthetic and CAD model datasets. The datasets used, however, are very different from the ones acquired using noisy 640×480 stereo cameras such as the ones used in our work. In addition, the authors do not provide timing information on the estimation and matching parts, which is critical for applications such as ours. A system for fully automatic 3D model-based object recognition and segmentation is presented in [17] with good recognition rates of over 95% for a database of 55 objects. Unfortunately, the computational performance of the proposed method is not suitable for real-time use, as the authors report the segmentation of an object model in a cluttered scene to take around 2 minutes. Moreover, the objects in the database are scanned using a high resolution Minolta scanner and their geometric shapes are very different. As shown in Section VI, the objects used in our experiments are much more similar in terms of geometry, so such a registration-based method would fail.
In [18], the authors propose a system for recognizing 3D objects in photographs. The techniques presented can only be applied in the presence of texture information, and require a cumbersome generation of models in an offline step, which makes this unsuitable for our work.

As previously presented, our requirements are real-time object recognition and pose identification from noisy real-world datasets acquired using projective texture stereo cameras. Our 3D object classification is based on an extension of the recently proposed Fast Point Feature Histogram (FPFH) descriptors [8], which record the relative angular directions of surface normals with respect to one another. The FPFH performs well in classification applications and is robust to noise but it is invariant to viewpoint.

This paper proposes a novel descriptor that encodes the viewpoint information and has two parts: (1) an extended FPFH descriptor that achieves an O(k∗n) to O(n) speedup over FPFHs, where n is the number of points in the point cloud and k is the number of points used in each local neighborhood; (2) a new signature that encodes important statistics between the viewpoint and the surface normals on the object. We call this new feature the Viewpoint Feature Histogram (VFH) as detailed below.

III. ARCHITECTURE

Our system architecture employs the following processing steps:

• Synchronized, calibrated and epipolar aligned left and right images of the scene are acquired.
• A dense depth map is computed from the stereo pair.
• Surface normals in the scene are calculated.
• Planes are identified and segmented out and the remaining point clouds from non-planar objects are clustered in Euclidean space.
• The Viewpoint Feature Histogram (VFH) is calculated over large enough objects (here, objects having at least 100 points).
  – If there are multiple objects in a scene, they are processed front to back relative to the camera.
  – Occluded point clouds with less than 75% of the number of points of the frontal objects are noted but not identified.
• Fast approximate K-NN is used to classify the object and its view.

Some steps from the early processing pipeline are shown in Figure 2. Shown left to right, top to bottom in that figure are: a moderately complex scene with many different vertical and horizontal surfaces, the resulting depth map, the estimated surface normals and the objects segmented from the planar surfaces in the scene.

Fig. 2. Early processing steps row wise, top to bottom: A scene, its depth map, surface normals and segmentation into planes and outlier objects.

For computing 3D depth maps, we use 640×480 stereo with textured light. The texture flashes on only very briefly as the cameras take a picture, resulting in lights that look dim to the human eye but bright to the camera. Texture flashes only every other frame so that raw imagery without texture can be gathered alternating with densely textured scenes. The stereo has a 38 degree field of view and is designed for close-in manipulation tasks, thus the objects that we deal with are from 0.5 to 1.5 meters away. The stereo algorithm that we use was developed in [19] and uses the implementation in the OpenCV library [20] as described in detail in [21], running at 30 Hz.

IV. SURFACE NORMALS AND 3D SEGMENTATION

We employ segmentation prior to the actual feature estimation because in robotic manipulation scenarios we are only interested in certain precise parts of the environment, and thus computational resources can be saved by tackling only those parts. Here, we are looking to manipulate reachable objects that lie on horizontal surfaces. Therefore, our segmentation scheme proceeds by extracting these horizontal surfaces first.

Fig. 3. From left to right: raw point cloud dataset, planar and cluster segmentation, more complex segmentation.

Compared to our previous work [22], we have improved the planar segmentation algorithms by incorporating surface normals into the sample selection and model estimation steps. We also took care to build SSE aligned data structures in memory for any computationally expensive operation. By rejecting candidates which do not support our constraints, our system can segment data at about 7 Hz, including normal estimation, on a regular Core2Duo laptop using a single core. To get frame rate performance (real time), we use a voxelized data structure over the input point cloud and downsample with a leaf size of 0.5 cm. The surface normals are therefore estimated only for the downsampled result, but using the information in the original point cloud. The planar components are extracted using a RMSAC (Randomized MSAC) method that takes into account weighted averages of distances to the model together with the angle of the surface normals. We then select candidate table planes using a heuristic combining the number of inliers which support the planar model as well as their proximity to the camera viewpoint. This approach emphasizes the part of the space where the robot manipulators can reach and grasp the objects.

The segmentation of object candidates supported by the table surface is performed by looking at points whose projection falls inside the bounding 2D polygon for the table, and applying single-link clustering. The result of these processing steps is a set of Euclidean point clusters. This works to reliably segment objects that are separated by about half their minimum radius from each other. An example can be seen in Figure 3.

To resolve further ambiguities with respect to the chosen candidate clusters, such as objects stacked on other planar objects (such as books), we repeat the mentioned step by treating each additional horizontal planar structure on top of the table candidates as a table itself and repeating the segmentation step (see results in Figure 3).

We emphasize that this segmentation step is of extreme importance for our application, because it allows our methods to achieve favorable computational performance by extracting only the regions of interest in a scene (i.e., objects that are to be manipulated, located on horizontal surfaces). In cases where our "light clutter" assumption does not hold and the geometric
Euclidean clustering is prone to failure, a more sophisticated segmentation scheme based on texture properties could be implemented.

V. VIEWPOINT FEATURE HISTOGRAM

In order to accurately and robustly classify points with respect to their underlying surface, we borrow ideas from the recently proposed Point Feature Histogram (PFH) [10]. The PFH is a histogram that collects the pairwise pan, tilt and yaw angles between every pair of normals on a surface patch (see Figure 4). In detail, for a pair of 3D points ⟨p_i, p_j⟩, and their estimated surface normals ⟨n_i, n_j⟩, the set of normal angular deviations can be estimated as:

$$\alpha = v \cdot n_j, \qquad \phi = u \cdot \frac{p_j - p_i}{d}, \qquad \theta = \arctan(w \cdot n_j,\; u \cdot n_j) \tag{1}$$

where u, v, w represent a Darboux frame coordinate system chosen at p_i and d is the Euclidean distance between p_i and p_j. Then, the Point Feature Histogram at a patch of points P = {p_i} with i = {1 ··· n} captures all the sets of ⟨α, φ, θ⟩ between all pairs of ⟨p_i, p_j⟩ from P, and bins the results in a histogram. The bottom left part of Figure 4 presents the selection of the Darboux frame and a graphical representation of the three angular features.

Because all possible pairs of points are considered, the computational complexity of a PFH is O(n²) in the number of surface normals n. In order to make a more efficient algorithm, the Fast Point Feature Histogram [8] was developed. The FPFH measures the same angular features as PFH, but estimates the sets of values only between every point and its k nearest neighbors, followed by a reweighting of the resultant histogram of a point with the neighboring histograms, thus reducing the computational complexity to O(k∗n).

Our past work [22] has shown that a global descriptor (GFPFH) can be constructed from the classification results of many local FPFH features, and used on a wide range of confusable objects (20 different types of glasses, bowls, mugs) in 500 scenes, achieving 96.69% on object class recognition. However, the categorized objects were only split into 4 distinct classes, which leaves the scaling problem open. Moreover, the GFPFH is susceptible to the errors of the local classification results, and is more cumbersome to estimate.

In any case, for manipulation, we require that the robot not only identifies objects, but also recognizes their 6DOF poses for grasping. FPFH is invariant both to object scale (distance) and object pose and so cannot achieve the latter task.

In this work, we decided to leverage the strong recognition results of FPFH, but to add in viewpoint variance while retaining invariance to scale, since the dense stereo depth map gives us scale/distance directly. Our contribution to the problem of object recognition and pose identification is to extend the FPFH to be estimated for the entire object cluster (as seen in Figure 4), and to compute additional statistics between the viewpoint direction and the normals estimated at each point. To do this, we used the key idea of mixing the viewpoint direction directly into the relative normal angle calculation in the FPFH. Figure 6 presents this idea with the new feature consisting of two parts: (1) a viewpoint direction component (see Figure 5) and (2) a surface shape component comprised of an extended FPFH (see Figure 4).

The viewpoint component is computed by collecting a histogram of the angles that the viewpoint direction makes with each normal. Note, we do not mean the view angle to each normal as this would not be scale invariant, but instead we mean the angle between the central viewpoint direction translated to each normal. The second component measures the relative pan, tilt and yaw angles as described in [8], [10] but now measured between the viewpoint direction at the central point and each of the normals on the surface. We call the new assembled feature the Viewpoint Feature Histogram (VFH). Figure 6 presents the resultant assembled VFH for a random object.

Fig. 4. The extended Fast Point Feature Histogram collects the statistics of the relative angles between the surface normals at each point to the surface normal at the centroid of the object. The bottom left part of the figure describes the three angular features for an example pair of points. (Figure labels: u = n_c, v = (p_5 − c) × u, w = u × v.)

Fig. 5. The Viewpoint Feature Histogram is created from the extended Fast Point Feature Histogram as seen in Figure 4 together with the statistics of the relative angles between each surface normal to the central viewpoint direction.

Fig. 6. An example of the resultant Viewpoint Feature Histogram for one of the objects used. Note the two concatenated components (viewpoint component and extended FPFH component).

The computational complexity of VFH is O(n). In our experiments, we divided the viewpoint angles into 128 bins and the α, φ and θ angles into 45 bins each, for a total of 263 dimensions. The estimation of a VFH takes about 0.3 ms on average on a 2.23 GHz single core of a Core2Duo machine using optimized SSE instructions.

VI. VALIDATION AND EXPERIMENTAL RESULTS

To evaluate our proposed descriptor and system architecture, we collected a large dataset consisting of over 60 IKEA kitchenware objects as shown in Figure 8. These objects consisted of many kinds each of: wine glasses, tumblers, drinking glasses, mugs, bowls, and a couple of boxes. In each of these categories, many of the objects were distinguished only by subtle variations in shape, as can be seen for example in the confusions in Figure 10. We captured over 54000 scenes of these objects by spinning them on a turn table 180°² at each of 2 offsets on a platform that tilted 0, 8, 16, 22 and 30 degrees. Each 180° rotation was captured with about 90 images. The turn table is shown in Fig. 7. We additionally worked with a subset of 20 objects in 500 lightly cluttered scenes with varying arrangements of horizontal and vertical surfaces, using the same data set provided in [22]. No pose information was available for this second dataset so we only ran experiments separately for object recognition results.

² We didn't go 360 degrees so that we could keep the calibration box in view.

Fig. 7. The turn table used to collect views of objects with known orientation.

The complete source code used to generate our experimental results together with both object databases are available under a BSD open source license in our ROS repository at Willow Garage. We are currently taking steps towards creating a web page with complete tutorials on how to fully replicate the experiments presented herein.

Both the objects in the [22] dataset as well as the ones we acquired constitute valid examples of objects of daily use that our robot needs to be able to reliably identify and manipulate. While 60 objects is far from the number of objects the robot eventually needs to be able to recognize, it may be enough if we assume that the robot knows what context (kitchen table, workbench, coffee table) it is in, so that it needs only discriminate among a small context dependent set of objects.

Fig. 8. The complete set of IKEA objects used for the purpose of our experiments. All transparent glasses have been painted white to obtain 3D information during the acquisition process.

TABLE I
RESULTS FOR OBJECT RECOGNITION AND POSE DETECTION OVER 54000 SCENES PLUS 500 LIGHTLY CLUTTERED SCENES.

Method    Object Recognition    Pose Estimation
VFH       98.52%                98.52%
Spin      75.3%                 61.2%

The geometric variations between objects are subtle, and the data acquired is noisy due to the stereo sensor characteristics, yet the perception system has to work well enough to differentiate between, say, glasses that look similar but serve different purposes (e.g., a wine glass versus a brandy glass).

As presented in Section II, the performance of the 3D descriptors proposed in the literature degrades on noisier datasets. One of the most popular 3D descriptors to date used on datasets acquired using sensing devices similar to ours (e.g., similar noise characteristics) is the spin image [6]. To validate the VFH feature we thus compare it to the spin image, by running the same experiments multiple times.
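As a rough illustration of the angular features in Eq. (1) of Section V, the sketch below evaluates ⟨α, φ, θ⟩ for one pair of points and bins a list of values into a histogram. It follows the frame given in the Figure 4 labels (u = n_i, v = (p_j − p_i) × u, w = u × v). Normalizing v to unit length, assuming unit-length input normals, the clamped binning, and all function names are assumptions of this sketch, not the authors' optimized SSE implementation. For the extended FPFH component, p_i and n_i would be the object centroid and the normal at the centroid.

```python
import math


def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def norm(a):
    return math.sqrt(dot(a, a))


def pair_features(p_i, n_i, p_j, n_j):
    """Return (alpha, phi, theta) as in Eq. (1), or None if the frame is undefined.

    Normals are assumed to be unit length.
    """
    diff = sub(p_j, p_i)
    d = norm(diff)
    if d == 0.0:
        return None              # coincident points: no direction between them
    u = n_i
    v = cross(diff, u)
    v_len = norm(v)
    if v_len == 0.0:
        return None              # p_j lies along the normal: frame is degenerate
    v = tuple(x / v_len for x in v)   # assumption: v is normalized
    w = cross(u, v)
    alpha = dot(v, n_j)
    phi = dot(u, diff) / d
    theta = math.atan2(dot(w, n_j), dot(u, n_j))
    return alpha, phi, theta


def histogram(values, lo, hi, bins=45):
    """Count values into equal-width bins over [lo, hi], clamping the edges."""
    counts = [0] * bins
    for x in values:
        idx = int((x - lo) / (hi - lo) * bins)
        counts[min(max(idx, 0), bins - 1)] += 1
    return counts


# Two points on a flat patch share the same normal, so all three features vanish.
flat = pair_features((0, 0, 0), (0, 0, 1), (1, 0, 0), (0, 0, 1))
# Tilting the second normal by 90 degrees toward the first point changes theta only.
bent = pair_features((0, 0, 0), (0, 0, 1), (1, 0, 0), (1, 0, 0))
```

Calling `pair_features` once per surface point against the centroid, then calling `histogram` once per feature, touches each point a constant number of times. That is consistent with the O(n) complexity stated for VFH.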
For the reasons given in Section I, we base our recognition architecture on fast approximate K-Nearest Neighbors (KNN) searches using kd-trees [1]. The construction of the tree and the search of the nearest neighbors places an equal weight on each histogram bin in the VFH and spin image features.

Figure 11 shows time stop sequentially aggregated examples of the training set. Figure 12 shows example recognition results for VFH. And finally, Figure 10 gives some idea of the performance differences between VFH and spin images. The object recognition rates over the lightly cluttered dataset were 98.1% for VFH and 73.2% for spin images. The overall recognition rates for VFH and spin images are shown in Table I, where VFH handily outperforms spin images for both object recognition and pose.

Fig. 9. Data training performed in simulation. The figure presents a snapshot of the simulation with a water bottle from the object model database and the corresponding stereo point cloud output.

VII. CONCLUSIONS AND FUTURE WORK

In this paper we presented a novel 3D feature descriptor, the Viewpoint Feature Histogram (VFH), useful for object recognition and 6DOF pose identification for applications where a priori segmentation is possible. The high recognition performance and fast computational properties demonstrated the superiority of VFH over spin images on a large scale dataset consisting of over 54000 scenes with ... Compared to other similar initiatives, our architecture works well with noisy data acquired using standard stereo cameras in real time, and can detect subtle variations in the geometry of objects. Moreover, we presented an integrated approach for both recognition and 6DOF pose identification for untextured objects, the latter being of extreme importance for mobile manipulation and grasping applications.

Fig. 10. VFH consistently outperforms spin images for both recognition and for pose. The bottom of the figure presents an example result of VFH run on a mug. The bottom left corner is the learned models and the matches go from best to worst from left to right across the bottom followed by left to right across the top. The top part of the figure presents the results obtained using a spin image. For VFH, 3 of 5 object recognition and 3 of 5 pose results are correct. For spin images, 2 of 5 object recognition results are correct and 0 of 5 pose results are correct.

Fig. 11. Sequence examples of object training with calibration box on the outside.

An automatic training pipeline can be integrated with our 3D simulator based on Gazebo [23] as depicted in Figure 9, where the stereo point cloud is generated from perfectly rectified camera images.

We are currently working on making both the fully annotated database of objects together with the source code of VFH available to the research community as open source. The preliminary results of our efforts can already be checked from the trunk of our Willow Garage ROS repository, but we are taking steps towards generating a set of tutorials on how to replicate and extend the experiments presented in this paper.

REFERENCES

[1] M. Muja and D. G. Lowe, "Fast approximate nearest neighbors with automatic algorithm configuration," VISAPP, 2009.
[2] J. W. Tangelder and R. C. Veltkamp, "A Survey of Content Based 3D Shape Retrieval Methods," in SMI '04: Proceedings of the Shape Modeling International, 2004, pp. 145–156.
[3] A. K. Jain and C. Dorai, "3D object recognition: Representation and matching," Statistics and Computing, vol. 10, no. 2, pp. 167–182, 2000.
[4] A. D. Bimbo and P. Pala, "Content-based retrieval of 3D models," ACM Trans. Multimedia Comput. Commun. Appl., vol. 2, no. 1, pp. 20–43, 2006.
[5] G. Burel and H. Hénocq, "Three-dimensional invariants and their application to object recognition," Signal Process., vol. 45, no. 1, pp. 1–22, 1995.
[6] A. Johnson and M. Hebert, "Using spin images for efficient object recognition in cluttered 3D scenes," IEEE Transactions on Pattern Analysis and Machine Intelligence, May 1999.
[7] T. Gatzke, C. Grimm, M. Garland, and S. Zelinka, "Curvature Maps for Local Shape Comparison," in SMI '05: Proceedings of the International Conference on Shape Modeling and Applications 2005 (SMI '05), 2005, pp. 246–255.
[8] R. B. Rusu, N. Blodow, and M. Beetz, "Fast Point Feature Histograms (FPFH) for 3D Registration," in ICRA, 2009.
[9] B.-C. M. and G. C., "Characterizing shape using conformal factors," in Eurographics Workshop on 3D Object Retrieval, 2008.
[10] R. B. Rusu, Z. C. Marton, N. Blodow, and M. Beetz, "Learning Informative Point Classes for the Acquisition of Object Model Maps," in Proceedings of the 10th International Conference on Control, Automation, Robotics and Vision (ICARCV), 2008.
[11] Y. Sun and M. A. Abidi, "Surface matching by 3D point's fingerprint," in Proc. IEEE Int'l Conf. on Computer Vision, vol. II, 2001, pp. 263–269.
[12] D. Huber, A. Kapuria, R. R. Donamukkala, and M. Hebert, "Parts-based 3D object classification," in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR 04), June 2004.
[13] B. K. P. Horn, "Extended Gaussian Images," Proceedings of the IEEE, vol. 72, pp. 1671–1686, 1984.
[14] R. J. Campbell and P. J. Flynn, "Eigenshapes for 3D object recognition in range data," in Computer Vision and Pattern Recognition, 1999. IEEE Computer Society Conference on., pp. 505–510.
[15] R. Osada, T. Funkhouser, B. Chazelle, and D. Dobkin, "Shape distributions," ACM Transactions on Graphics, vol. 21, pp. 807–832, 2002.
[16] X. Li and I. Guskov, "3D object recognition from range images using pyramid matching," in ICCV07, 2007, pp. 1–6.
[17] A. S. Mian, M. Bennamoun, and R. Owens, "Three-dimensional model-based object recognition and segmentation in cluttered scenes," IEEE Trans. Pattern Anal. Mach. Intell., vol. 28, pp. 1584–1601, 2006.
[18] F. Rothganger, S. Lazebnik, C. Schmid, and J. Ponce, "3d object modeling and recognition using local affine-invariant image descriptors and multi-view spatial constraints," International Journal of Computer Vision, vol. 66, p. 2006, 2006.
[19] K. Konolige, "Small vision systems: hardware and implementation," in Eighth International Symposium on Robotics Research, 1997, pp. 111–116.
[20] "OpenCV, Open source Computer Vision library," in /wiki/, 2009.
[21] G. Bradski and A. Kaehler, "Learning OpenCV: Computer Vision with the OpenCV Library," in O'Reilly Media, Inc., 2008, pp. 415–453.
[22] R. B. Rusu, A. Holzbach, M. Beetz, and G. Bradski, "Detecting and segmenting objects for mobile manipulation," in ICCV S3DV workshop, 2009.
[23] N. Koenig and A. Howard, "Design and use paradigms for gazebo, an open-source multi-robot simulator," in IEEE/RSJ International Conference on Intelligent Robots and Systems, Sendai, Japan, Sep 2004, pp. 2149–2154.

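Principles of the tracking-by-segmentation method

"Tracking by Segmentation" is a computer vision method for object tracking.

Its principle is to segment the target out of each video frame and then track the motion of that target across consecutive frames.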

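The basic steps of the "Tracking by Segmentation" method are as follows.

Target segmentation: First, the image region containing the target object is segmented from the video frame.

This usually requires an image segmentation algorithm, such as background subtraction, threshold segmentation, edge detection, or semantic segmentation.

The purpose of target segmentation is to separate the target from the background so that it can be tracked.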
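The segmentation step can be illustrated with the simplest of the listed techniques, threshold segmentation. The sketch below is only a toy under stated assumptions: a grayscale frame stored as a list of rows, a bright target on a dark background, and a hand-picked threshold. The function names are hypothetical.

```python
def threshold_segment(frame, threshold):
    """Return a binary mask: 1 where a pixel is brighter than the threshold."""
    return [[1 if px > threshold else 0 for px in row] for row in frame]


def mask_centroid(mask):
    """Centroid (row, col) of the foreground pixels, or None for an empty mask."""
    pts = [(r, c) for r, row in enumerate(mask) for c, v in enumerate(row) if v]
    if not pts:
        return None
    n = len(pts)
    return (sum(r for r, _ in pts) / n, sum(c for _, c in pts) / n)


# A 4x4 frame with a bright 2x2 target in the middle.
frame = [
    [10, 12, 11, 10],
    [11, 200, 210, 12],
    [10, 205, 220, 11],
    [12, 10, 11, 10],
]
mask = threshold_segment(frame, 128)
centroid = mask_centroid(mask)
```

The mask isolates the target region, and its centroid gives a single position that later steps can follow from frame to frame.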

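Feature extraction: Once the target has been segmented successfully, features are extracted from the target region to describe its appearance and shape.

These features may include color histograms, texture features, shape descriptors, and so on.

They are used to match the target in subsequent frames.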
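As a small example of such a feature, the sketch below builds a normalized intensity histogram of the target pixels and compares two histograms by histogram intersection. The 4-bin layout, the 0 to 255 value range, and the use of intersection as the similarity score are illustrative assumptions. A color histogram would apply the same idea per channel.

```python
def gray_histogram(pixels, bins=4, max_value=256):
    """Normalized intensity histogram of a non-empty flat list of pixel values."""
    if not pixels:
        raise ValueError("pixels must not be empty")
    hist = [0] * bins
    for px in pixels:
        hist[min(px * bins // max_value, bins - 1)] += 1
    return [h / len(pixels) for h in hist]


def histogram_intersection(h1, h2):
    """Similarity in [0, 1] for normalized histograms; 1 means identical."""
    return sum(min(a, b) for a, b in zip(h1, h2))


target = gray_histogram([0, 10, 200, 255])
candidate_same = gray_histogram([5, 20, 210, 250])
candidate_other = gray_histogram([100, 100, 100, 100])

score_same = histogram_intersection(target, candidate_same)
score_other = histogram_intersection(target, candidate_other)
```

A candidate region whose score is close to 1 resembles the target's appearance, while a score near 0 suggests a different object or background.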

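Motion estimation: In subsequent video frames, the target's motion is estimated by comparing the target features in the current frame with the features from previous frames.

This can be done in different ways, such as optical flow estimation or appearance model matching.

With motion estimation, the system can predict the target's position in the next frame.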

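Target matching and tracking: Using the target's features and motion information, the target is matched and tracked across consecutive frames.

Target matching can be a key step: it determines the target's position in the new frame so that tracking remains continuous.

Matching can be implemented with various methods, including correlation filtering, Kalman filtering, and particle filtering.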
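Of the methods listed, the Kalman filter is the easiest to show compactly. The sketch below is a deliberately reduced version: a scalar filter tracking one coordinate of the target under a random-walk motion model. The model and the noise values are assumptions chosen for illustration. A practical tracker would normally use a multi-dimensional state that includes velocity.

```python
def kalman_step(x, p, z, q, r):
    """One predict/update cycle of a scalar Kalman filter.

    x, p : previous state estimate and its variance
    z    : new measurement (e.g. the segmented target's centroid coordinate)
    q, r : process and measurement noise variances (assumed known)
    """
    # Predict: under a random-walk model the state stays, uncertainty grows.
    p_pred = p + q
    # Update: blend prediction and measurement using the Kalman gain.
    k = p_pred / (p_pred + r)
    x_new = x + k * (z - x)
    p_new = (1 - k) * p_pred
    return x_new, p_new


# Start at position 0 with variance 1, then observe the target at position 2.
x1, p1 = kalman_step(0.0, 1.0, 2.0, q=0.0, r=1.0)

# Feed a short sequence of noisy centroid measurements.
x, p = 0.0, 1.0
for z in [2.0, 2.1, 1.9, 2.0]:
    x, p = kalman_step(x, p, z, q=0.01, r=1.0)
```

With equal prior and measurement variance, the first estimate lands halfway between the prior and the measurement. As more measurements arrive, the estimate moves toward them and its variance shrinks.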

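Updating the target model: Over time the target's appearance may change, for example because of changing lighting conditions, occlusion, or the target's own motion.

The target model therefore needs to be updated periodically to keep tracking accurate.

This may involve online learning or model adaptation techniques.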
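One very simple form of model adaptation is to blend the stored appearance model with the newest observation using an exponential moving average. The text does not prescribe this scheme. It is shown only as an assumed example, with a hypothetical `update_model` function and a hand-picked learning rate.

```python
def update_model(model, observation, rate=0.1):
    """Blend a stored feature vector with a new observation.

    rate = 0 keeps the old model unchanged; rate = 1 replaces it entirely.
    """
    return [(1 - rate) * m + rate * o for m, o in zip(model, observation)]


# Stored histogram model and the histogram observed in the current frame.
model = [1.0, 0.0]
observed = [0.0, 1.0]
updated = update_model(model, observed, rate=0.25)
```

A small rate makes the model change slowly, which protects it from brief occlusions. A larger rate follows genuine appearance changes faster, but the model is then more likely to drift onto the background.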

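Termination conditions: Tracking can end when certain termination conditions are met, for example when the target is no longer visible, tracking fails, or the user stops tracking.

On termination, the system may output the tracking result or a summary of the target's trajectory.

The advantage of the "Tracking by Segmentation" method is that it can track targets against complex backgrounds and is relatively robust to changes in the target's appearance and shape.

However, it also faces challenges: occlusion, illumination changes, and changes in target shape can all cause tracking to fail.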

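Acoustic Emission Feature Extraction Based on Fractal Dimension and Independent Component Analysis

Liu Guohua, Huang Pingjie, Gong Xiang, Gu Jiang, Zhou Zekui

(State Key Laboratory of Industrial Control Technology, Zhejiang University, Hangzhou, Zhejiang 310027)

Abstract: To address the effect of noise on the fractal dimension of acoustic emission signals, a feature extraction method for acoustic emission signals of structural materials based on fractal dimension and independent component analysis (ICA) is proposed. The paper first presents the concept of fractal dimension and, from a theoretical standpoint, ...

... the phenomenon in which energy is released in the form of elastic waves when cracking occurs. Because acoustic emission signals originate from the defect itself, cause little interference to the object under test, and allow long-term continuous monitoring of an object's safety, they have broad application prospects in nondestructive testing.

Current methods for acquiring and processing acoustic emission signals fall into two broad classes: (1) representing the features of the acoustic emission signal by several simplified waveform feature parameters, which are then analyzed and processed; (2) storing and recording the waveform of the acoustic emission signal and performing spectral analysis on the waveform. Simplified waveform feature parameter analysis is the classic acoustic emission signal analysis method and has been widely used since the 1950s; at present, in acoustic emission testing ...

... obvious differences, yet also a certain similarity. Experiments have shown [4-5] that the distribution of acoustic emission signals is fractal not only in the time domain but also in space.

However, real acoustic emission signals are frequently refracted and reflected along their propagation paths and are mixed with various kinds of noise, so a simple fractal dimension analysis can easily lead to misjudged results. This paper therefore uses independent component analysis (ICA) to preprocess the signal, extracting the acoustic emission signal separated from the noise sources before fractal dimension processing, which can improve the accuracy of integrity and safety testing of structural materials.

1 Fractal dimension characteristics of acoustic emission signals

Fractal geometry is a branch of mathematics that has developed in recent years. The method processes signals that exhibit self-similarity or statistical self-similarity ...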

A Fast and Accurate Plane Detection Algorithm for Large Noisy Point Clouds Using Filtered Normals