SVM MATLAB Code

A MATLAB Program for the svmd Function

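[Topic] A MATLAB program for the svmd function [Content]
1. What svmd does
svmd (Support Vector Machine for Discrete Input) is a support vector machine (SVM) method for discrete input data.

It handles discrete input data in an SVM model and applies a special data-compression algorithm to reduce the storage and computation that the SVM model requires.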

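2. Implementing svmd in MATLAB
The svmd program can be implemented in MATLAB in the following steps:
2.1 Import the data: load the prepared discrete input data.

Preparing the data involves preprocessing steps such as data cleaning, data transformation, and normalization.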

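2.2 Build the model: next, build the support vector machine model with the svmtrain function.

When calling svmtrain, specify parameters such as the kernel function and the penalty factor C (the 'BoxConstraint' option).

The resulting model is used to classify the data.

2.3 Train the model: svmtrain builds and trains the model in the same call, solving for the optimal separating hyperplane on the training data.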

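2.4 Predict: after training, use the svmclassify function to predict the classes of new data.

svmclassify applies the trained model to classify new discrete input data.

2.5 Evaluate the model: the svmd program evaluates the classification results by computing metrics such as accuracy and recall to assess the model's performance.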
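Step 2.5 names accuracy and recall but does not show how they are computed. A minimal, toolbox-free sketch, assuming binary labels coded as 1 (positive) and -1 (negative); yTrue and yPred are hypothetical stand-ins for the test labels and the predicted labels:

```matlab
% Hypothetical true and predicted labels (1 = positive class, -1 = negative class)
yTrue = [1; 1; 1; -1; -1; -1; 1; -1];
yPred = [1; -1; 1; -1; 1; -1; 1; -1];

% Confusion counts with respect to the positive class
TP = sum(yPred == 1  & yTrue == 1);    % true positives
FP = sum(yPred == 1  & yTrue == -1);   % false positives
FN = sum(yPred == -1 & yTrue == 1);    % false negatives
TN = sum(yPred == -1 & yTrue == -1);   % true negatives

accuracy  = (TP + TN) / numel(yTrue);  % fraction classified correctly
precision = TP / (TP + FP);            % correct among predicted positives
recall    = TP / (TP + FN);            % share of actual positives that were found
F1        = 2 * precision * recall / (precision + recall);
fprintf('accuracy %.3f, precision %.3f, recall %.3f, F1 %.3f\n', ...
        accuracy, precision, recall, F1);
```

If the classifier never predicts the positive class, TP + FP is 0 and precision becomes NaN, so real code may want to guard that case.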

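3. Example program
Below is a simple example of the svmd MATLAB program:
```matlab
% Import the data (assumes discreteData.mat holds discreteData, labels, testData and testLabels)
load('discreteData.mat');
% Build and train the model with box constraint C = 1
% (svmtrain/svmclassify were removed in R2018a; newer releases use fitcsvm/predict)
model = svmtrain(discreteData, labels, 'BoxConstraint', 1);
% Predict the classes of the test data
predictedLabels = svmclassify(model, testData);
% Evaluate the model
accuracy = sum(predictedLabels == testLabels) / numel(testLabels);
```
4. Summary
The svmd MATLAB program handles discrete input data effectively and builds a support vector machine model for classification.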

Linear and nonlinear classification with MATLAB's SVM functions (2013-11-28)

Matlab_svmtrain_example
1. Linear classification
```matlab
% Two Dimension Linear-SVM Problem, Two Class and Separable Situation
% Method from Christopher J.C. Burges:
% "A Tutorial on Support Vector Machines for Pattern Recognition", page 9
% Optimizing ||W|| directly:
%   Objective: min "f(A) = ||W||", p8/line26
%   Subject to: yi*(xi*W+b)-1 >= 0, function (12);
clear all; close all; clc;
sp = [3,7; 6,6; 4,6; 5,6.5]                 % positive sample points
nsp = size(sp, 1);                         % number of positive points
sn = [1,2; 3,5; 7,3; 3,4; 6,2.7]           % negative sample points
nsn = size(sn, 1)                          % number of negative points
sd = [sp; sn]
lsd = [true true true true false false false false false]'   % labels as a column
Y = nominal(lsd)
figure(1);
subplot(1,2,1)
plot(sp(1:nsp,1), sp(1:nsp,2), 'm+');
hold on
plot(sn(1:nsn,1), sn(1:nsn,2), 'c*');
subplot(1,2,2)
svmStruct = svmtrain(sd, Y, 'showplot', true);
```
2. Nonlinear classification
```matlab
% Two Dimension quadratic-SVM Problem, Two Class and Separable Situation
% Method from Christopher J.C. Burges:
% "A Tutorial on Support Vector Machines for Pattern Recognition", page 9
% Optimizing ||W|| directly:
%   Objective: min "f(A) = ||W||", p8/line26
%   Subject to: yi*(xi*W+b)-1 >= 0, function (12);
clear all; close all; clc;
sp = [3,7; 6,6; 4,6; 5,6.5]                 % positive sample points
nsp = size(sp, 1);
sn = [1,2; 3,5; 7,3; 3,4; 6,2.7; 4,3; 2,7]  % negative sample points
nsn = size(sn, 1)
sd = [sp; sn]
lsd = [true true true true false false false false false false false]'
Y = nominal(lsd)
figure(1);
subplot(1,2,1)
plot(sp(1:nsp,1), sp(1:nsp,2), 'm+');
hold on
plot(sn(1:nsn,1), sn(1:nsn,2), 'c*');
subplot(1,2,2)
% svmStruct = svmtrain(sd,Y,'Kernel_Function','linear','showplot',true);
svmStruct = svmtrain(sd, Y, 'Kernel_Function', 'quadratic', 'showplot', true);
% use the trained SVM (svmStruct) to classify the data
RD = svmclassify(svmStruct, sd, 'showplot', true)   % RD is the classification result vector
```
3. svmtrain
```
svmtrain Train a support vector machine classifier

SVMSTRUCT = svmtrain(TRAINING, Y) trains a support vector machine (SVM)
classifier on data taken from two groups. TRAINING is a numeric matrix of
predictor data. Rows of TRAINING correspond to observations; columns
correspond to features. Y is a column vector that contains the known
class labels for TRAINING. Y is a grouping variable, i.e., it can be a
categorical, numeric, or logical vector; a cell vector of strings; or a
character matrix with each row representing a class label (see help for
groupingvariable). Each element of Y specifies the group the
corresponding row of TRAINING belongs to. TRAINING and Y must have the
same number of rows. SVMSTRUCT contains information about the trained
classifier, including the support vectors, that is used by SVMCLASSIFY
for classification. svmtrain treats NaNs, empty strings or 'undefined'
values as missing values and ignores the corresponding rows in TRAINING
and Y.

SVMSTRUCT = svmtrain(TRAINING, Y, 'PARAM1',val1, 'PARAM2',val2, ...)
specifies one or more of the following name/value pairs:

Name                 Value
'kernel_function'    A string or a function handle specifying the kernel
                     function used to represent the dot product in a new
                     space. The value can be one of the following:
                     'linear'     - Linear kernel or dot product (default).
                                    In this case, svmtrain finds the
                                    optimal separating plane in the
                                    original space.
                     'quadratic'  - Quadratic kernel
                     'polynomial' - Polynomial kernel with default order 3.
                                    To specify another order, use the
                                    'polyorder' argument.
                     'rbf'        - Gaussian Radial Basis Function with
                                    default scaling factor 1. To specify
                                    another scaling factor, use the
                                    'rbf_sigma' argument.
                     'mlp'        - Multilayer Perceptron kernel (MLP)
                                    with default weight 1 and default
                                    bias -1. To specify another weight or
                                    bias, use the 'mlp_params' argument.
                     function     - A kernel function specified using @
                                    (for example @KFUN), or an anonymous
                                    function. A kernel function must be
                                    of the form

                                        function K = KFUN(U, V)

                                    The returned value, K, is a matrix of
                                    size M-by-N, where M and N are the
                                    number of rows in U and V respectively.

'rbf_sigma'          A positive number specifying the scaling factor in
                     the Gaussian radial basis function kernel. Default
                     is 1.

'polyorder'          A positive integer specifying the order of the
                     polynomial kernel. Default is 3.

'mlp_params'         A vector [P1 P2] specifying the parameters of MLP
                     kernel. The MLP kernel takes the form:
                     K = tanh(P1*U*V' + P2), where P1 > 0 and P2 < 0.
                     Default is [1,-1].

'method'             A string specifying the method used to find the
                     separating hyperplane. Choices are:
                     'SMO' - Sequential Minimal Optimization (SMO) method
                             (default). It implements the L1 soft-margin
                             SVM classifier.
                     'QP'  - Quadratic programming (requires an
                             Optimization Toolbox license). It implements
                             the L2 soft-margin SVM classifier. Method 'QP'
                             doesn't scale well for TRAINING with large
                             number of observations.
                     'LS'  - Least-squares method. It implements the L2
                             soft-margin SVM classifier.

'options'            Options structure created using either STATSET or
                     OPTIMSET.
                     * When you set 'method' to 'SMO' (default), create
                       the options structure using STATSET. Applicable
                       options:
                       'Display'  Level of display output. Choices are
                                  'off' (the default), 'iter', and
                                  'final'. Value 'iter' reports every
                                  500 iterations.
                       'MaxIter'  A positive integer specifying the
                                  maximum number of iterations allowed.
                                  Default is 15000 for method 'SMO'.
                     * When you set method to 'QP', create the options
                       structure using OPTIMSET. For details of
                       applicable options choices, see QUADPROG options.
                       SVM uses a convex quadratic program, so you can
                       choose the 'interior-point-convex' algorithm in
                       QUADPROG.

'tolkkt'             A positive scalar that specifies the tolerance with
                     which the Karush-Kuhn-Tucker (KKT) conditions are
                     checked for method 'SMO'. Default is 1.0000e-003.

'kktviolationlevel'  A scalar specifying the fraction of observations
                     that are allowed to violate the KKT conditions for
                     method 'SMO'. Setting this value to be positive
                     helps the algorithm to converge faster if it is
                     fluctuating near a good solution. Default is 0.

'kernelcachelimit'   A positive scalar S specifying the size of the
                     kernel matrix cache for method 'SMO'. The algorithm
                     keeps a matrix with up to S*S double-precision
                     numbers in memory. Default is 5000. When the number
                     of points in TRAINING exceeds S, the SMO method
                     slows down. It's recommended to set S as large as
                     your system permits.

'boxconstraint'      The box constraint C for the soft margin. C can be
                     a positive numeric scalar or a vector of positive
                     numbers with the number of elements equal to the
                     number of rows in TRAINING. Default is 1.
                     * If C is a scalar, it is automatically rescaled by
                       N/(2*N1) for the observations of group one, and by
                       N/(2*N2) for the observations of group two, where
                       N1 is the number of observations in group one, N2
                       is the number of observations in group two. The
                       rescaling is done to take into account unbalanced
                       groups, i.e., when N1 and N2 are different.
                     * If C is a vector, then each element of C
                       specifies the box constraint for the
                       corresponding observation.

'autoscale'          A logical value specifying whether or not to shift
                     and scale the data points before training. When the
                     value is true, the columns of TRAINING are shifted
                     and scaled to have zero mean unit variance. Default
                     is true.

'showplot'           A logical value specifying whether or not to show a
                     plot. When the value is true, svmtrain creates a
                     plot of the grouped data and the separating line for
                     the classifier, when using data with 2 features
                     (columns). Default is false.

SVMSTRUCT is a structure having the following properties:

SupportVectors       Matrix of data points with each row corresponding
                     to a support vector.
                     Note: when 'autoscale' is false, this field
                     contains original support vectors in TRAINING. When
                     'autoscale' is true, this field contains shifted and
                     scaled vectors from TRAINING.
Alpha                Vector of Lagrange multipliers for the support
                     vectors. The sign is positive for support vectors
                     belonging to the first group and negative for
                     support vectors belonging to the second group.
Bias                 Intercept of the hyperplane that separates the two
                     groups.
                     Note: when 'autoscale' is false, this field
                     corresponds to the original data points in
                     TRAINING. When 'autoscale' is true, this field
                     corresponds to shifted and scaled data points.
KernelFunction       The function handle of kernel function used.
KernelFunctionArgs   Cell array containing the additional arguments for
                     the kernel function.
GroupNames           A column vector that contains the known class
                     labels for TRAINING. Y is a grouping variable (see
                     help for groupingvariable).
SupportVectorIndices A column vector indicating the indices of support
                     vectors.
ScaleData            This field contains information about auto-scale.
                     When 'autoscale' is false, it is empty. When
                     'autoscale' is set to true, it is a structure
                     containing two fields:
                     shift       - A row vector containing the negative
                                   of the mean across all observations
                                   in TRAINING.
                     scaleFactor - A row vector whose value is
                                   1./STD(TRAINING).
FigureHandles        A vector of figure handles created by svmtrain
                     when 'showplot' argument is TRUE.

Example:
    % Load the data and select features for classification
    load fisheriris
    X = [meas(:,1), meas(:,2)];
    % Extract the Setosa class
    Y = nominal(ismember(species,'setosa'));
    % Randomly partitions observations into a training set and a test
    % set using stratified holdout
    P = cvpartition(Y,'Holdout',0.20);
    % Use a linear support vector machine classifier
    svmStruct = svmtrain(X(P.training,:),Y(P.training),'showplot',true);
    C = svmclassify(svmStruct,X(P.test,:),'showplot',true);
    errRate = sum(Y(P.test)~=C)/P.TestSize   % mis-classification rate
    conMat = confusionmat(Y(P.test),C)       % the confusion matrix
```
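The kernel options described in the help text can be written as anonymous functions that follow the KFUN(U, V) contract (U is M-by-d, V is N-by-d, result M-by-N). This is a sketch: the quadratic form copies MATLAB's quadratic_kernel (dotproduct.*(1 + dotproduct)), the MLP form follows the tanh(P1*U*V' + P2) formula stated above, and the polynomial and Gaussian RBF forms are common textbook definitions, assumed here rather than copied from MATLAB's internal code:

```matlab
% Pairwise squared Euclidean distances between the rows of U and V (M-by-N)
sqdist = @(U, V) max(bsxfun(@plus, sum(U.^2, 2), sum(V.^2, 2)') - 2*(U*V'), 0);

linearK = @(U, V) U*V';                                     % plain dot product
quadK   = @(U, V) (U*V') .* (1 + U*V');                     % same form as quadratic_kernel
polyK   = @(U, V, order) (1 + U*V') .^ order;               % textbook polynomial kernel (assumption)
rbfK    = @(U, V, sigma) exp(-sqdist(U, V) / (2*sigma^2));  % Gaussian RBF (assumed form), sigma ~ 'rbf_sigma'
mlpK    = @(U, V, P1, P2) tanh(P1*(U*V') + P2);             % K = tanh(P1*U*V' + P2)

U = [1 2; 0 1; 3 0];     % M = 3 points in 2-D
V = [3 4; 1 2];          % N = 2 points in 2-D
K = rbfK(U, V, 1);
disp(size(K))            % M-by-N, i.e. 3-by-2
```

Any of these can be passed to svmtrain's 'kernel_function' after fixing the extra parameters, for example @(U, V) rbfK(U, V, 0.5).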

Implementing the support vector machine (SVM) algorithm in MATLAB

SVM is one of the classification algorithms, and MATLAB provides functions for solving it. Here is a small example.

The example comes from one of our real projects.

```matlab
clc; clear;
N = 10;
% The following are training samples from our real project (each sample has 8 attributes)
correctData             = [0,0.2,0.8,0,0,0,2,2];
errorData_ReversePharse = [1,0.8,0.2,1,0,0,2,2];
errorData_CountLoss     = [0.2,0.4,0.6,0.2,0,0,1,1];
errorData_X             = [0.5,0.5,0.5,1,1,0,0,0];
errorData_Lower         = [0.2,0,1,0.2,0,0,0,0];
errorData_Local_X       = [0.2,0.2,0.8,0.4,0.4,0,0,0];
errorData_Z             = [0.53,0.55,0.45,1,0,1,0,0];
errorData_High          = [0.8,1,0,0.8,0,0,0,0];
errorData_CountBefore   = [0.4,0.2,0.8,0.4,0,0,2,2];
errorData_Local_X1      = [0.3,0.3,0.7,0.4,0.2,0,1,0];
% Training samples: one row per waveform
sampleData = [correctData; errorData_ReversePharse; errorData_CountLoss; errorData_X; ...
    errorData_Lower; errorData_Local_X; errorData_Z; errorData_High; ...
    errorData_CountBefore; errorData_Local_X1];
type1 = 1;              % class of the correct waveform: the first waveform is correct and is labeled 1
type2 = -ones(1, N-1);  % class of the faulty waveforms: waveforms 2-10 (9 rows) are all faulty, labeled -1
```

Explanation of the SVM MATLAB implementation source code

SVM MATLAB implementation source code. View the source with:
```matlab
edit svmtrain
edit svmclassify
edit svmpredict
```
```matlab
function [svm_struct, svIndex] = svmtrain(training, groupnames, varargin)
%SVMTRAIN trains a support vector machine classifier
%
%   SVMStruct = SVMTRAIN(TRAINING,GROUP) trains a support vector machine
%   classifier using data TRAINING taken from two groups given by GROUP.
%   SVMStruct contains information about the trained classifier that is
%   used by SVMCLASSIFY for classification. GROUP is a column vector of
%   values of the same length as TRAINING that defines two groups. Each
%   element of GROUP specifies the group the corresponding row of TRAINING
%   belongs to. GROUP can be a numeric vector, a string array, or a cell
%   array of strings. SVMTRAIN treats NaNs or empty strings in GROUP as
%   missing values and ignores the corresponding rows of TRAINING.
%
%   SVMTRAIN(...,'KERNEL_FUNCTION',KFUN) allows you to specify the kernel
%   function KFUN used to map the training data into kernel space. The
%   default kernel function is the dot product. KFUN can be one of the
%   following strings or a function handle:
%
%       'linear'      Linear kernel or dot product
%       'quadratic'   Quadratic kernel
%       'polynomial'  Polynomial kernel (default order 3)
%       'rbf'         Gaussian Radial Basis Function kernel
%       'mlp'         Multilayer Perceptron kernel (default scale 1)
%       function      A kernel function specified using @,
%                     for example @KFUN, or an anonymous function
%
%   A kernel function must be of the form
%
%         function K = KFUN(U, V)
%
%   The returned value, K, is a matrix of size M-by-N, where U and V have M
%   and N rows respectively. If KFUN is parameterized, you can use
%   anonymous functions to capture the problem-dependent parameters. For
%   example, suppose that your kernel function is
%
%       function k = kfun(u,v,p1,p2)
%       k = tanh(p1*(u*v')+p2);
%
%   You can set values for p1 and p2 and then use an anonymous function:
%       @(u,v) kfun(u,v,p1,p2).
%
%   SVMTRAIN(...,'POLYORDER',ORDER) allows you to specify the order of a
%   polynomial kernel. The default order is 3.
%
%   SVMTRAIN(...,'MLP_PARAMS',[P1 P2]) allows you to specify the
%   parameters of the Multilayer Perceptron (mlp) kernel. The mlp kernel
%   requires two parameters, P1 and P2, where K = tanh(P1*U*V' + P2) and P1
%   > 0 and P2 < 0. Default values are P1 = 1 and P2 = -1.
%
%   SVMTRAIN(...,'METHOD',METHOD) allows you to specify the method used
%   to find the separating hyperplane. Options are
%
%       'QP'  Use quadratic programming (requires the Optimization Toolbox)
%       'LS'  Use least-squares method
%
%   If you have the Optimization Toolbox, then the QP method is the default
%   method. If not, the only available method is LS.
%
%   SVMTRAIN(...,'QUADPROG_OPTS',OPTIONS) allows you to pass an OPTIONS
%   structure created using OPTIMSET to the QUADPROG function when using
%   the 'QP' method. See help optimset for more details.
%
%   SVMTRAIN(...,'SHOWPLOT',true), when used with two-dimensional data,
%   creates a plot of the grouped data and plots the separating line for
%   the classifier.
%
%   Example:
%       % Load the data and select features for classification
%       load fisheriris
%       data = [meas(:,1), meas(:,2)];
%       % Extract the Setosa class
%       groups = ismember(species,'setosa');
%       % Randomly select training and test sets
%       [train, test] = crossvalind('holdOut',groups);
%       cp = classperf(groups);
%       % Use a linear support vector machine classifier
%       svmStruct = svmtrain(data(train,:),groups(train),'showplot',true);
%       classes = svmclassify(svmStruct,data(test,:),'showplot',true);
%       % See how well the classifier performed
%       classperf(cp,classes,test);
%       cp.CorrectRate
%
%   See also CLASSIFY, KNNCLASSIFY, QUADPROG, SVMCLASSIFY.

%   Copyright 2004 The MathWorks, Inc.
%   $Revision: 1.1.12.1 $  $Date: 2004/12/24 20:43:35 $

%   References:
%     [1] Kecman, V, Learning and Soft Computing,
%         MIT Press, Cambridge, MA. 2001.
%     [2] Suykens, J.A.K., Van Gestel, T., De Brabanter, J., De Moor, B.,
%         Vandewalle, J., Least Squares Support Vector Machines,
%         World Scientific, Singapore, 2002.
%     [3] Scholkopf, B., Smola, A.J., Learning with Kernels,
%         MIT Press, Cambridge, MA. 2002.

%
%   SVMTRAIN(...,'KFUNARGS',ARGS) allows you to pass additional
%   arguments to kernel functions.

% set defaults
plotflag = false;
qp_opts = [];
kfunargs = {};
setPoly = false; usePoly = false;
setMLP = false; useMLP = false;
if ~isempty(which('quadprog'))
    useQuadprog = true;
else
    useQuadprog = false;
end
% set default kernel function
kfun = @linear_kernel;

% check inputs
if nargin < 2
    error(nargchk(2,Inf,nargin))
end

numoptargs = nargin -2;
optargs = varargin;

% grp2idx sorts a numeric grouping var ascending, and a string grouping
% var by order of first occurrence
[g,groupString] = grp2idx(groupnames);

% check group is a vector -- though char input is special...
if ~isvector(groupnames) && ~ischar(groupnames)
    error('Bioinfo:svmtrain:GroupNotVector',...
        'Group must be a vector.');
end

% make sure that the data is correctly oriented.
if size(groupnames,1) == 1
    groupnames = groupnames';
end
% make sure data is the right size
n = length(groupnames);
if size(training,1) ~= n
    if size(training,2) == n
        training = training';
    else
        error('Bioinfo:svmtrain:DataGroupSizeMismatch',...
            'GROUP and TRAINING must have the same number of rows.')
    end
end

% NaNs are treated as unknown classes and are removed from the training
% data
nans = find(isnan(g));
if length(nans) > 0
    training(nans,:) = [];
    g(nans) = [];
end
ngroups = length(groupString);
if ngroups > 2
    error('Bioinfo:svmtrain:TooManyGroups',...
        'SVMTRAIN only supports classification into two groups.\nGROUP contains %d different groups.',ngroups)
end
% convert to 1, -1.
g = 1 - (2* (g-1));

% handle optional arguments
if numoptargs >= 1
    if rem(numoptargs,2)== 1
        error('Bioinfo:svmtrain:IncorrectNumberOfArguments',...
            'Incorrect number of arguments to %s.',mfilename);
    end
    okargs = {'kernel_function','method','showplot','kfunargs','quadprog_opts','polyorder','mlp_params'};
    for j=1:2:numoptargs
        pname = optargs{j};
        pval = optargs{j+1};
        k = strmatch(lower(pname), okargs);%#ok
        if isempty(k)
            error('Bioinfo:svmtrain:UnknownParameterName',...
                'Unknown parameter name: %s.',pname);
        elseif length(k)>1
            error('Bioinfo:svmtrain:AmbiguousParameterName',...
                'Ambiguous parameter name: %s.',pname);
        else
            switch(k)
                case 1  % kernel_function
                    if ischar(pval)
                        okfuns = {'linear','quadratic',...
                            'radial','rbf','polynomial','mlp'};
                        funNum = strmatch(lower(pval), okfuns);%#ok
                        if isempty(funNum)
                            funNum = 0;
                        end
                        switch funNum  %maybe make this less strict in the future
                            case 1
                                kfun = @linear_kernel;
                            case 2
                                kfun = @quadratic_kernel;
                            case {3,4}
                                kfun = @rbf_kernel;
                            case 5
                                kfun = @poly_kernel;
                                usePoly = true;
                            case 6
                                kfun = @mlp_kernel;
                                useMLP = true;
                            otherwise
                                error('Bioinfo:svmtrain:UnknownKernelFunction',...
                                    'Unknown Kernel Function %s.',pval);
                        end
                    elseif isa (pval, 'function_handle')
                        kfun = pval;
                    else
                        error('Bioinfo:svmtrain:BadKernelFunction',...
                            'The kernel function input does not appear to be a function handle\nor valid function name.')
                    end
                case 2  % method
                    if strncmpi(pval,'qp',2)
                        useQuadprog = true;
                        if isempty(which('quadprog'))
                            warning('Bioinfo:svmtrain:NoOptim',...
                                'The Optimization Toolbox is required to use the quadratic programming method.')
                            useQuadprog = false;
                        end
                    elseif strncmpi(pval,'ls',2)
                        useQuadprog = false;
                    else
                        error('Bioinfo:svmtrain:UnknownMethod',...
                            'Unknown method option %s. Valid methods are ''QP'' and ''LS''',pval);
                    end
                case 3  % display
                    if pval ~= 0
                        if size(training,2) == 2
                            plotflag = true;
                        else
                            warning('Bioinfo:svmtrain:OnlyPlot2D',...
                                'The display option can only plot 2D training data.')
                        end
                    end
                case 4  % kfunargs
                    if iscell(pval)
                        kfunargs = pval;
                    else
                        kfunargs = {pval};
                    end
                case 5  % quadprog_opts
                    if isstruct(pval)
                        qp_opts = pval;
                    elseif iscell(pval)
                        qp_opts = optimset(pval{:});
                    else
                        error('Bioinfo:svmtrain:BadQuadprogOpts',...
                            'QUADPROG_OPTS must be an opts structure.');
                    end
                case 6  % polyorder
                    if ~isscalar(pval) || ~isnumeric(pval)
                        error('Bioinfo:svmtrain:BadPolyOrder',...
                            'POLYORDER must be a scalar value.');
                    end
                    if pval ~=floor(pval) || pval < 1
                        error('Bioinfo:svmtrain:PolyOrderNotInt',...
                            'The order of the polynomial kernel must be a positive integer.')
                    end
                    kfunargs = {pval};
                    setPoly = true;
                case 7  % mlpparams
                    if numel(pval)~=2
                        error('Bioinfo:svmtrain:BadMLPParams',...
                            'MLP_PARAMS must be a two element array.');
                    end
                    if ~isscalar(pval(1)) || ~isscalar(pval(2))
                        error('Bioinfo:svmtrain:MLPParamsNotScalar',...
                            'The parameters of the multi-layer perceptron kernel must be scalar.');
                    end
                    kfunargs = {pval(1),pval(2)};
                    setMLP = true;
            end
        end
    end
end
if setPoly && ~usePoly
    warning('Bioinfo:svmtrain:PolyOrderNotPolyKernel',...
        'You specified a polynomial order but not a polynomial kernel');
end
if setMLP && ~useMLP
    warning('Bioinfo:svmtrain:MLPParamNotMLPKernel',...
        'You specified MLP parameters but not an MLP kernel');
end
% plot the data if requested
if plotflag
    [hAxis,hLines] = svmplotdata(training,g);
    legend(hLines,cellstr(groupString));
end

% calculate kernel function
try
    kx = feval(kfun,training,training,kfunargs{:});
    % ensure function is symmetric
    kx = (kx+kx')/2;
catch
    error('Bioinfo:svmtrain:UnknownKernelFunction',...
        'Error calculating the kernel function:\n%s\n', lasterr);
end
% create Hessian
% add small constant eye to force stability
H =((g*g').*kx) + sqrt(eps(class(training)))*eye(n);

if useQuadprog
    % The large scale solver cannot handle this type of problem, so turn it
    % off.
    qp_opts = optimset(qp_opts,'LargeScale','Off');
    % X=QUADPROG(H,f,A,b,Aeq,beq,LB,UB,X0,opts)
    alpha = quadprog(H,-ones(n,1),[],[],...
        g',0,zeros(n,1),inf *ones(n,1),zeros(n,1),qp_opts);

    % The support vectors are the non-zeros of alpha
    svIndex = find(alpha > sqrt(eps));
    sv = training(svIndex,:);

    % calculate the parameters of the separating line from the support
    % vectors.
    alphaHat = g(svIndex).*alpha(svIndex);

    % Calculate the bias by applying the indicator function to the support
    % vector with largest alpha.
    [maxAlpha,maxPos] = max(alpha); %#ok
    bias = g(maxPos) - sum(alphaHat.*kx(svIndex,maxPos));
    % an alternative method is to average the values over all support vectors
    % bias = mean(g(sv)' - sum(alphaHat(:,ones(1,numSVs)).*kx(sv,sv)));

    % An alternative way to calculate support vectors is to look for zeros of
    % the Lagrangians (fifth output from QUADPROG).
    %
    % [alpha,fval,output,exitflag,t] = quadprog(H,-ones(n,1),[],[],...
    %             g',0,zeros(n,1),inf *ones(n,1),zeros(n,1),opts);
    %
    % sv = t.lower < sqrt(eps) & t.upper < sqrt(eps);
else  % Least-Squares
    % now build up compound matrix for solver
    A = [0 g';g,H];
    b = [0;ones(size(g))];
    x = A\b;

    % calculate the parameters of the separating line from the support
    % vectors.
    sv = training;
    bias = x(1);
    alphaHat = g.*x(2:end);
end

svm_struct.SupportVectors = sv;
svm_struct.Alpha = alphaHat;
svm_struct.Bias = bias;
svm_struct.KernelFunction = kfun;
svm_struct.KernelFunctionArgs = kfunargs;
svm_struct.GroupNames = groupnames;
svm_struct.FigureHandles = [];
if plotflag
    hSV = svmplotsvs(hAxis,svm_struct);
    svm_struct.FigureHandles = {hAxis,hLines,hSV};
end
```
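The least-squares branch of the svmtrain listing reduces training to a single linear solve, [0 g'; g H] * [bias; alpha] = [0; 1]. Below is a toolbox-free sketch of that branch on a small, made-up two-cluster data set. It assumes an RBF kernel with sigma = 1 (the listing itself defaults to a linear kernel) and predicts with the decision formula f = K(sv, Xnew)' * alphaHat + bias:

```matlab
% Made-up training data: two well-separated clusters, labels g in {1, -1}
X = [0 0; 1 0; 0 1; 3 3; 4 3; 3 4];
g = [1; 1; 1; -1; -1; -1];
sigma = 1;                                   % RBF width (assumption for this sketch)

% RBF kernel matrix between the rows of P and Q
sqdist = @(P, Q) max(bsxfun(@plus, sum(P.^2, 2), sum(Q.^2, 2)') - 2*(P*Q'), 0);
kern   = @(P, Q) exp(-sqdist(P, Q) / (2*sigma^2));

n  = numel(g);
kx = kern(X, X);
H  = (g*g') .* kx + sqrt(eps) * eye(n);      % Hessian with a small ridge, as in the listing

% Least-squares branch: solve [0 g'; g H] * [bias; alpha] = [0; 1]
A   = [0 g'; g H];
rhs = [0; ones(n, 1)];                       % called b in the listing
x   = A \ rhs;
bias     = x(1);
alphaHat = g .* x(2:end);                    % signed coefficients; every training point is kept

% Decision values: f = K(sv, Xnew)' * alphaHat + bias
decide = @(Xnew) kern(X, Xnew)' * alphaHat + bias;
fTrain = decide(X);
fNew   = decide([0.2 0.2; 3.5 3.5]);
disp(sign(fNew)')
```

Because every equation is enforced as an equality, the training points end up with g_i*f(x_i) almost exactly 1 (the deviation is the sqrt(eps) ridge term). This is also why the LS branch returns all training rows as support vectors.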

(MATLAB) A quick-start tutorial for SVM toolboxes

A quick-start tutorial for SVM toolboxes (by faruto)

I. MATLAB's built-in functions (the example from the MATLAB help) [only newer versions of MATLAB include these two SVM functions]

===== svmtrain svmclassify =====
==== Brief syntax ====
svmtrain
Train support vector machine classifier
Syntax
```matlab
SVMStruct = svmtrain(Training, Group)
SVMStruct = svmtrain(..., 'Kernel_Function', Kernel_FunctionValue, ...)
SVMStruct = svmtrain(..., 'RBF_Sigma', RBFSigmaValue, ...)
SVMStruct = svmtrain(..., 'Polyorder', PolyorderValue, ...)
SVMStruct = svmtrain(..., 'Mlp_Params', Mlp_ParamsValue, ...)
SVMStruct = svmtrain(..., 'Method', MethodValue, ...)
SVMStruct = svmtrain(..., 'QuadProg_Opts', QuadProg_OptsValue, ...)
SVMStruct = svmtrain(..., 'SMO_Opts', SMO_OptsValue, ...)
SVMStruct = svmtrain(..., 'BoxConstraint', BoxConstraintValue, ...)
SVMStruct = svmtrain(..., 'Autoscale', AutoscaleValue, ...)
SVMStruct = svmtrain(..., 'Showplot', ShowplotValue, ...)
```
---------------------
svmclassify
Classify data using support vector machine
Syntax
```matlab
Group = svmclassify(SVMStruct, Sample)
Group = svmclassify(SVMStruct, Sample, 'Showplot', ShowplotValue)
```
==== Case study ====
```matlab
% Load MATLAB's built-in data set (Fisher's iris, one of the classic UCI data sets; see UCI for details).
% meas is a 150x4 matrix: 150 samples, each described by 4 attributes; species gives the class of each sample.
load fisheriris
% Keep only the first and second columns of meas, i.e. the first two attributes
data = [meas(:,1), meas(:,2)];
% species has three classes (setosa, versicolor, virginica); to keep things simple,
% turn it into a two-class problem: Setosa vs. non-Setosa
groups = ismember(species,'setosa');
% Randomly select the training and test sets (see help crossvalind);
% cp is used later to evaluate the classifier
[train, test] = crossvalind('holdOut',groups);
cp = classperf(groups);
% Train with svmtrain; the resulting structure svmStruct is used for prediction (the training result is plotted)
svmStruct = svmtrain(data(train,:),groups(train),'showplot',true);
% Classify the unseen test set (the result is plotted)
classes = svmclassify(svmStruct,data(test,:),'showplot',true);
% Evaluate the classifier: the accuracy on the test set
classperf(cp,classes,test);
cp.CorrectRate
% ans =
%     0.9867
```

II. The LIBSVM toolbox by Chih-Jen Lin (Taiwan)
Download the toolbox (libsvm-mat-2.86-1). Installation is simple: unzip the files, change the current folder to the libsvm folder, and add that folder via Set Path. Then enter in the command window:
```matlab
mex -setup   % choose a compiler
make
```
That is all. I recommend the LIBSVM toolbox; it is easier to use and supports (multi-class) classification, prediction, and more.

========= svmtrain svmpredict ================
Brief syntax (Usage):
```
matlab> model = svmtrain(training_label_vector, training_instance_matrix [, 'libsvm_options']);

        -training_label_vector:
            An m by 1 vector of training labels (type must be double).
        -training_instance_matrix:
            An m by n matrix of m training instances with n features.
            It can be dense or sparse (type must be double).
        -libsvm_options:
            A string of training options in the same format as that of LIBSVM.

matlab> [predicted_label, accuracy, decision_values/prob_estimates] = svmpredict(testing_label_vector, testing_instance_matrix, model [, 'libsvm_options']);

        -testing_label_vector:
            An m by 1 vector of prediction labels. If labels of test
            data are unknown, simply use any random values. (type must be double)
        -testing_instance_matrix:
            An m by n matrix of m testing instances with n features.
            It can be dense or sparse. (type must be double)
        -model:
            The output of svmtrain.
        -libsvm_options:
            A string of testing options in the same format as that of LIBSVM.
```
Case study:
```matlab
% Data shipped with the toolbox: heart_scale_inst holds the samples, heart_scale_label the labels
load heart_scale.mat
% Train; see the help file for how to tune the parameters
model = svmtrain(heart_scale_label, heart_scale_inst, '-c 1 -g 0.07');
% Predict, reusing the training set as the test set
[predict_label, accuracy, dec_values] = svmpredict(heart_scale_label, heart_scale_inst, model);
% Output:
% Accuracy = 86.6667% (234/270) (classification)
```
==============
That covers the introductory SVM material; you can follow it to get started. For SVM theory, I have a short PPT (SVM.ppt) that I made for an earlier project, where I was responsible for implementing and explaining the SVM code. Take a look if you are interested; it is all material you can pick up quickly. To study SVM in depth you will need statistical learning theory and more... there is quite a lot.

----------- Very good material on SVM and LIBSVM; read these to study SVM in detail ------
libsvm_guide.pdf
libsvm_library.pdf
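In the LIBSVM call '-c 1 -g 0.07', -c is the cost C (the counterpart of the built-in svmtrain's 'boxconstraint'). -g is gamma in LIBSVM's RBF kernel, exp(-gamma*|u-v|^2). Assuming the built-in svmtrain's 'rbf_sigma' enters its kernel as exp(-|u-v|^2/(2*sigma^2)), the two parametrizations coincide when sigma = 1/sqrt(2*gamma). A small, toolbox-free check of that conversion:

```matlab
gamma = 0.07;                           % value passed with -g above
sigma = 1 / sqrt(2*gamma);              % equivalent 'rbf_sigma' under the stated assumption

u = [0.5 -1.2 0.3];                     % two arbitrary feature vectors
v = [1.0  0.4 -0.7];
d2 = sum((u - v).^2);                   % squared Euclidean distance

kLibsvm = exp(-gamma * d2);             % LIBSVM RBF kernel
kSigma  = exp(-d2 / (2*sigma^2));       % sigma-parametrized RBF kernel
fprintf('sigma = %.4f, kernels: %.6f vs %.6f\n', sigma, kLibsvm, kSigma);
```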

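Implementing the SVM algorithm with MATLAB

Introduction: the support vector machine (SVM) is a commonly used supervised learning method in machine learning and is widely applied to classification and regression problems.

This article describes how to implement the SVM algorithm with MATLAB, covering data preprocessing, feature selection, model training, and performance evaluation.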

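I. Data preprocessing
Before applying the SVM algorithm, the data must be preprocessed.

Preprocessing converts the raw data into a form the SVM algorithm can handle; it generally includes data cleaning, feature scaling, and feature encoding.

1. Data cleaning
Data cleaning handles missing values, outliers, and noise in the data.

In MATLAB, missing values can be handled with functions such as ismissing and fillmissing.

Outliers and noise can be handled with statistical or model-based methods.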
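For the cleaning step, here is a toolbox-free sketch of column-mean imputation, one simple strategy among several (fillmissing offers others). The matrix is a made-up example in which NaN marks a missing entry:

```matlab
X = [1 NaN 3;
     4 5   NaN;
     7 8   9];                              % made-up data, NaN = missing

missing = isnan(X);                         % logical mask of missing entries
for j = 1:size(X, 2)
    colMean = mean(X(~missing(:, j), j));   % mean of the observed values in column j
    X(missing(:, j), j) = colMean;          % replace the missing values with it
end
disp(X)
```

A column with no observed values would produce NaN here, so such columns need separate handling.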

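2. Feature scaling
Feature scaling standardizes the feature values so that all features are on comparable scales.

Common methods include mean normalization and standardization (zero mean, unit variance).

In MATLAB, the zscore function standardizes each feature column.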
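zscore subtracts each column's mean and divides by its standard deviation. The sketch below writes the same standardization out by hand and, like the svmtrain 'autoscale' code, leaves zero-variance columns unscaled. The data are made up:

```matlab
X = [1 10 5;
     2 20 5;
     3 30 5;
     4 40 5];                            % made-up data; column 3 is constant

mu = mean(X);                            % column means
sd = std(X);                             % column standard deviations (N-1 normalization, as in zscore)
sd(sd == 0) = 1;                         % leave zero-variance columns unscaled
Z = bsxfun(@rdivide, bsxfun(@minus, X, mu), sd);

disp(mean(Z))                            % approximately 0 in every column
disp(std(Z))                             % 1 in columns 1-2, 0 in the constant column
```

For prediction, the test data must be scaled with the mu and sd computed on the training data, not with statistics of the test set.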

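3. Feature encoding
Feature encoding converts non-numeric features into numeric ones so that the SVM algorithm can process them.

Common methods include one-hot encoding and label encoding.

In MATLAB, dummyvar (one-hot encoding) and grp2idx (label encoding) can be used for this.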
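The sketch below shows what label encoding and one-hot encoding produce, using only base MATLAB. Here the categories are sorted alphabetically by unique, so the ordering can differ from what a toolbox function returns. The color values are made up:

```matlab
colors = {'red'; 'green'; 'red'; 'blue'};     % made-up categorical feature
[cats, ~, idx] = unique(colors);              % cats sorted alphabetically: blue, green, red
idx = idx(:);                                 % label encoding: one integer code per sample

n = numel(idx);
oneHot = zeros(n, numel(cats));               % one-hot encoding: one column per category
oneHot(sub2ind(size(oneHot), (1:n)', idx)) = 1;
disp(idx')
disp(oneHot)
```

Label codes impose an artificial order (blue < green < red), so for SVM inputs the one-hot form is usually the safer choice for nominal features.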

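II. Feature selection
Feature selection picks the most representative features from the original set to reduce dimensionality and improve model performance.

For SVMs, choosing suitable features is critical to classification performance.

1. Correlation analysis
By analyzing the correlation between each feature and the target variable, you can keep the features that are most correlated with the target.

In MATLAB, the corrcoef function computes correlation coefficients, both between pairs of features and between a feature and the target.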
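To rank features by their correlation with the target, call corrcoef once per column. Below is a sketch on made-up data in which feature 1 rises with y, feature 3 falls with it, and feature 2 is unrelated:

```matlab
y = [1; 2; 3; 4; 5];                           % made-up target
X = [2*y + 1, [1; 0; 1; 0; 1], -y];            % three made-up features

r = zeros(1, size(X, 2));
for j = 1:size(X, 2)
    R = corrcoef(X(:, j), y);                  % 2-by-2 correlation matrix
    r(j) = R(1, 2);                            % correlation of feature j with y
end
[~, order] = sort(abs(r), 'descend');          % strongest |correlation| first
disp(r)
disp(order)
```

Ranking by the absolute value keeps strongly negatively correlated features such as feature 3. Note that correlation only captures linear relationships.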

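2. Feature importance evaluation
Feature importance can be assessed with various feature selection methods, such as analysis of variance (a statistical method), model-based recursive feature elimination, and importance ranking from tree models.

In MATLAB, functions such as anova1 (analysis of variance), sequentialfs (sequential feature selection), and predictorImportance (importance estimates for tree models) can be used.

III. Model training and evaluation
After preprocessing and feature selection, we can move on to training and evaluating the SVM model.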

SVM classifier: MATLAB program (runnable)

```matlab
    fprintf('-1');
else
    fprintf(' 1');
end
fprintf(' decision function value %f, classification result', sum(i) + b);

if abs(sum(i) + b - 1) < 0.5
else
    U = max(0, a1old + a2old - C);
    V = min(C, a1old + a2old);
end
if a2new > V
    a2new = V;
end
if a2new < U
    a2new = U;
end
a1new = a1old + S * (a2old - a2new);   % compute the new a1
break;
end
if y(n1) * (sum(n1) + b) < 1 && a(n1) ~= C
    break;
end
n1 = n1 + 1;
end
% n2 is chosen by maximizing |E1 - E2|
a(n1) = a1new;   % update a
a(n2) = a2new;
% update the partial sums: sum(k) = sum over i of a(i)*y(i)*K(i,k)
sum = zeros(n,1);
for k = 1 : n
    for i = 1 : n
        sum(k) = sum(k) + a(i) * y(i) * K(i,k);
    end
end
```
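The fragment clips a2new to the interval [U, V] and then updates a1new with S = y1*y2. Below is a self-contained sketch of one complete SMO pair update under the standard SMO rules (Platt): the bounds depend on whether y1 and y2 differ (the fragment's else branch is the equal-label case), and the unclipped a2 comes from the errors E1, E2 and eta = K11 + K22 - 2*K12. All numbers are made up for illustration:

```matlab
C = 1;                          % box constraint
y1 = 1;   y2 = -1;              % labels of the chosen pair
a1old = 0.3;  a2old = 0.6;      % current multipliers
K11 = 1;  K22 = 1;  K12 = 0.2;  % kernel values for the pair
E1 = -0.5;  E2 = 0.78;          % prediction errors E = f(x) - y

% Bounds [U, V] that keep both multipliers inside [0, C]
if y1 ~= y2
    U = max(0, a2old - a1old);
    V = min(C, C + a2old - a1old);
else
    U = max(0, a1old + a2old - C);
    V = min(C, a1old + a2old);
end

eta = K11 + K22 - 2*K12;                  % curvature along the constraint line (assumed > 0)
a2new = a2old + y2 * (E1 - E2) / eta;     % unclipped optimum for a2
a2new = min(max(a2new, U), V);            % clip to [U, V], as in the fragment

S = y1 * y2;
a1new = a1old + S * (a2old - a2new);      % keeps y1*a1 + y2*a2 unchanged
fprintf('a1new = %.4f, a2new = %.4f\n', a1new, a2new);
```

Here the unclipped a2 is 1.4, which exceeds the upper bound V = C = 1. The clip therefore sets a2new to 1, and a1new moves to 0.7 so that the equality constraint sum(a.*y) = 0 still holds.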


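Support vector machines: principles of SVM and support vector regression, with MATLAB examples

The support vector machine (SVM) is a machine learning algorithm widely used in supervised learning.

It can be used for classification problems (SVM) as well as regression problems (SVR).

This article briefly introduces the principles of SVM and SVR and gives MATLAB examples of their use.

The core idea of SVM is to find the optimal hyperplane that maximizes the margin between positive and negative samples while keeping misclassified samples to a minimum.

This optimization problem can be cast as a convex quadratic program.

It is solved with the method of Lagrange multipliers: the constrained problem is written as a Lagrangian, Lagrange duality converts the primal problem into its dual problem, and solving the dual yields the normal vector w and the bias b.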
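For reference, here are the soft-margin primal problem and its dual in standard notation. The training pairs are (x_i, y_i) with y_i in {+1, -1}, C is the penalty, φ is the feature map, and K(x_i, x_j) = φ(x_i)ᵀφ(x_j) is the kernel:

```latex
\begin{aligned}
\min_{w,\,b,\,\xi}\quad & \tfrac{1}{2}\lVert w\rVert^{2} + C\sum_{i=1}^{n}\xi_i
  \quad\text{s.t.}\quad y_i\bigl(w^{\top}\varphi(x_i) + b\bigr) \ge 1 - \xi_i,\quad \xi_i \ge 0 \\
\max_{\alpha}\quad & \sum_{i=1}^{n}\alpha_i - \tfrac{1}{2}\sum_{i=1}^{n}\sum_{j=1}^{n}\alpha_i\alpha_j\,y_i y_j\,K(x_i, x_j)
  \quad\text{s.t.}\quad 0 \le \alpha_i \le C,\quad \sum_{i=1}^{n}\alpha_i y_i = 0 \\
& w = \sum_{i=1}^{n}\alpha_i y_i\,\varphi(x_i), \qquad f(x) = \sum_{i=1}^{n}\alpha_i y_i\,K(x_i, x) + b
\end{aligned}
```

In the dual, the penalty C survives only as the upper bound on each α_i, which is why it is called the box constraint ('boxconstraint' in svmtrain). The training points with α_i > 0 are the support vectors.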

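The goal of SVR is to find a regression function f(x) that minimizes a loss between the predictions f(x) and the actual values y.

Common loss functions include the squared loss and the absolute loss; the standard SVR formulation uses the ε-insensitive loss, which ignores errors smaller than ε.

As with SVM, SVR can use kernel functions to handle nonlinear regression problems.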
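Evaluating the three losses on a few residuals r = y - f(x) shows how the ε-insensitive loss ignores small errors and grows only linearly beyond the tube. A sketch with ε = 0.1 and made-up residuals:

```matlab
r = [-0.3 0 0.05 0.5];                 % made-up residuals y - f(x)
epsilon = 0.1;                          % width of the insensitive tube

squaredLoss = r.^2;                     % squared loss
absLoss     = abs(r);                   % absolute loss
epsLoss     = max(0, abs(r) - epsilon); % epsilon-insensitive loss used by SVR

disp([squaredLoss; absLoss; epsLoss])
```

The residual 0.05 lies inside the tube and costs nothing under the ε-insensitive loss, which is what makes the SVR solution sparse: only points on or outside the tube become support vectors.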

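MATLAB example: the following uses a simple data set to show how to implement SVM and SVR in MATLAB.

1. SVM example: suppose we have a binary classification problem whose data set has two features and two classes of samples.

First load the data set and split it into a training set and a test set.

```matlab
load fisheriris
X = meas(51:end, 1:2);     % versicolor and virginica samples, first two features
Y = (1:100)';
Y(1:50) = -1;              % versicolor
Y(51:100) = 1;             % virginica
rng(1);                    % seed the random number generator so the shuffle is reproducible
I = randperm(100);         % shuffle the samples
X = X(I,:);
Y = Y(I);
X_train = X(1:80, :);
Y_train = Y(1:80, :);
X_test = X(81:end, :);
Y_test = Y(81:end, :);
```
Then train the SVM model with the fitcsvm function and make predictions with the predict function.

```matlab
SVMModel = fitcsvm(X_train, Y_train);
Y_predict = predict(SVMModel, X_test);
```
Finally, compute the classification accuracy to evaluate the model's performance.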
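Assuming Y_test and Y_predict are ±1 column vectors as in the code above, accuracy and a 2-by-2 confusion matrix need only base MATLAB. The short vectors below are hypothetical stand-ins so that the sketch runs on its own:

```matlab
% Hypothetical stand-ins for Y_test and Y_predict (labels -1 and 1)
Y_test    = [-1; 1; 1; -1; 1; -1];
Y_predict = [-1; 1; -1; -1; 1; 1];

accuracy = mean(Y_predict == Y_test);          % fraction predicted correctly

% Confusion matrix: rows = true class, columns = predicted class, order [-1 1]
rowIdx = (Y_test + 3) / 2;                     % maps -1 -> 1 and 1 -> 2
colIdx = (Y_predict + 3) / 2;
confMat = accumarray([rowIdx colIdx], 1, [2 2]);
fprintf('accuracy = %.2f\n', accuracy);
disp(confMat)
```

The diagonal of confMat holds the correctly classified counts, so accuracy equals trace(confMat)/sum(confMat(:)).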

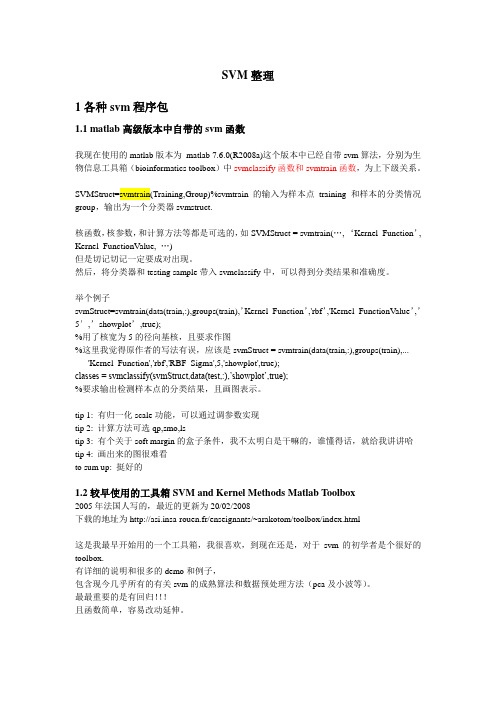

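MATLAB SVM notes

SVM notes
1 SVM packages
1.1 SVM functions built into newer MATLAB versions
I currently use MATLAB 7.6.0 (R2008a), which already includes the SVM algorithm: the svmtrain and svmclassify functions in the Bioinformatics Toolbox, which are used one after the other (svmtrain trains the classifier, svmclassify applies it).

SVMStruct = svmtrain(Training, Group): svmtrain takes the sample points Training and their classes Group as input and outputs a classifier, SVMStruct. The kernel function, kernel parameters, solver method, and so on are optional, for example SVMStruct = svmtrain(..., 'Kernel_Function', Kernel_FunctionValue, ...), but remember: they must always be given in name/value pairs.

Then pass the classifier and the test samples to svmclassify to get the classification results, from which the accuracy can be computed.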

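Example:
```matlab
% Original call, meant as an RBF kernel of width 5 with plotting:
% svmStruct = svmtrain(data(train,:),groups(train),'Kernel_Function','rbf','Kernel_FunctionValue','5','showplot',true);
% I think the original author's syntax is wrong here ('Kernel_FunctionValue' is not an option name); it should be
svmStruct = svmtrain(data(train,:),groups(train),...
    'Kernel_Function','rbf','RBF_Sigma',5,'showplot',true);
classes = svmclassify(svmStruct,data(test,:),'showplot',true);   % classify the test samples and plot the result
```

tip 1: it has a scaling (normalization) feature, enabled through a parameter ('autoscale').
tip 2: the solver method can be QP, SMO, or LS.
tip 3: there is a box constraint for the soft margin; I don't quite understand what it is for. If anyone knows, please explain it to me.
tip 4: the plots it draws are ugly.
To sum up: pretty good.

1.2 An older toolbox: SVM and Kernel Methods Matlab Toolbox
Written by a French author in 2005 and most recently updated on 20/02/2008. Download: http://asi.insa-rouen.fr/enseignants/~arakotom/toolbox/index.html
This is the first toolbox I used, and I still like it a lot. It is a good toolbox for SVM beginners: it has detailed documentation and many demos and examples, and it covers almost all mature SVM algorithms as well as data preprocessing methods (PCA, wavelets, etc.).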

SVM MATLAB code explained in detail

```matlab
x = [0 1 0 1 2 -1];
y = [0 0 1 1 2 -1];
z = [-1 1 1 -1 1 1];
```
Here (x, y) are the 2-D data points and z is the class of each point.

The matrix data below holds the same six points as (x, y, z) above, reordered so that the four points of class 1 come first.
```matlab
data = [1,0; 0,1; 2,2; -1,-1; 0,0; 1,1];   % the (x,y) data points
groups = [1;1;1;1;-1;-1];                 % label of each data point
figure;
subplot(2,2,1);
% train on the data and labels with the chosen kernel function
Struct1 = svmtrain(data,groups,'Kernel_Function','quadratic','showplot',true);
% classify the data and show the plot
classes1 = svmclassify(Struct1,data,'showplot',true);
title('Quadratic kernel');
CorrectRate1 = sum(groups==classes1)/6

subplot(2,2,2);
Struct2 = svmtrain(data,groups,'Kernel_Function','rbf','RBF_Sigma',0.41,'showplot',true);
classes2 = svmclassify(Struct2,data,'showplot',true);
title('Gaussian RBF kernel (width 0.41)');
CorrectRate2 = sum(groups==classes2)/6

subplot(2,2,3);
Struct3 = svmtrain(data,groups,'Kernel_Function','polynomial','showplot',true);
classes3 = svmclassify(Struct3,data,'showplot',true);
title('Polynomial kernel');
CorrectRate3 = sum(groups==classes3)/6

subplot(2,2,4);
Struct4 = svmtrain(data,groups,'Kernel_Function','mlp','showplot',true);
classes4 = svmclassify(Struct4,data,'showplot',true);
title('Multilayer perceptron kernel');
CorrectRate4 = sum(groups==classes4)/6
```
Analysis of the svmtrain code:
```matlab
if plotflag   % plot the training data points
    [hAxis,hLines] = svmplotdata(training,groupIndex);
    legend(hLines,cellstr(groupString));
end
scaleData = [];
if autoScale   % standardize the training data
    scaleData.shift = - mean(training);
    stdVals = std(training);
    scaleData.scaleFactor = 1./stdVals;
    % leave zero-variance data unscaled:
    scaleData.scaleFactor(~isfinite(scaleData.scaleFactor)) = 1;
    % shift and scale columns of data matrix:
    for c = 1:size(training, 2)
        training(:,c) = scaleData.scaleFactor(c) * ...
            (training(:,c) + scaleData.shift(c));
    end
end
```
```matlab
if strcmpi(optimMethod, 'SMO')   % choose the optimization algorithm
    % ...
else % QP and LS both need the kernel matrix:
    % ...
end
% solve for the hyperplane parameters (w, b) of w*X + b
```
```matlab
if plotflag   % draw the projection of the hyperplane in 2-D space
    hSV = svmplotsvs(hAxis,hLines,groupString,svm_struct);
    svm_struct.FigureHandles = {hAxis,hLines,hSV};
end
```
svmplotsvs.m file:
```matlab
hSV = plot(sv(:,1),sv(:,2),'ko');   % circle the support vectors selected from the training data
lims = axis(hAxis);                 % get the axis limits of the subplot
% split the x and y ranges into a grid (100 points each by default)
[X,Y] = meshgrid(linspace(lims(1),lims(2)),linspace(lims(3),lims(4)));
Xorig = X; Yorig = Y;
% need to scale the mesh: standardize the grid points the same way as the training data
if ~isempty(scaleData)
    X = scaleData.scaleFactor(1) * (X + scaleData.shift(1));
    Y = scaleData.scaleFactor(2) * (Y + scaleData.shift(2));
end
% compute [label, decision value] for the grid points; the decision value is
% proportional to the signed distance from the hyperplane
[dummy, Z] = svmdecision([X(:),Y(:)],svm_struct);
% draw the zero-level contour of the decision values: the decision boundary in 2-D
contour(Xorig,Yorig,reshape(Z,size(X)),[0 0],'k');
```
svmdecision.m file:
```matlab
function [out,f] = svmdecision(Xnew,svm_struct)
%SVMDECISION evaluates the SVM decision function

%   Copyright 2004-2006 The MathWorks, Inc.
%   $Revision: 1.1.12.4 $  $Date: 2006/06/16 20:07:18 $

sv = svm_struct.SupportVectors;
alphaHat = svm_struct.Alpha;
bias = svm_struct.Bias;
kfun = svm_struct.KernelFunction;
kfunargs = svm_struct.KernelFunctionArgs;

f = (feval(kfun,sv,Xnew,kfunargs{:})'*alphaHat(:)) + bias;   % decision values ("distances")
out = sign(f);   % convert the decision values into labels
% points on the boundary are assigned to class 1
out(out==0) = 1;
```
Kernel computation:
```matlab
function K = quadratic_kernel(u,v,varargin)
%QUADRATIC_KERNEL quadratic kernel for SVM functions

%   Copyright 2004-2008 The MathWorks, Inc.

dotproduct = (u*v');
K = dotproduct.*(1 + dotproduct);
```
Dimension analysis: suppose there are m training samples of dimension d, written X(m,d). The weights here are the dual coefficients (alphaHat), so w is w(m,1), and with the kernel k(x,y) the decision function wᵀx + b is rewritten as wᵀφ(x) + b. Assume every training point is kept as a support vector (none is filtered out); the support vectors are then the standardized X, written Xst. For test data T(n,d), the kernel computation gives (m,d)*(n,d)' = m×n, and applying the weights and bias gives (1,m)*(m×n) = 1×n: the decision values ("distances") of the n test points. If the kernel is applied to the training data itself, m×d becomes m×m.
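You can check the dimension analysis directly. The sketch below uses random placeholder matrices for m support vectors and n test points in d dimensions, together with the quadratic kernel formula from the listing. It follows svmdecision in computing the n-by-1 column K'*alphaHat, which is the transpose of the 1×n row in the analysis:

```matlab
m = 5;  d = 2;  n = 3;                 % support vectors, feature dimension, test points
sv       = rand(m, d);                 % placeholder support vectors (m-by-d)
alphaHat = randn(m, 1);                % placeholder signed coefficients (m-by-1)
bias     = 0.5;                        % placeholder bias
T        = rand(n, d);                 % placeholder test data (n-by-d)

quadratic_kernel = @(u, v) (u*v') .* (1 + u*v');   % same formula as the listing

Kmn = quadratic_kernel(sv, T);         % (m-by-d)*(n-by-d)' = m-by-n
f   = Kmn' * alphaHat + bias;          % (n-by-m)*(m-by-1) = n-by-1 decision values
out = sign(f);
out(out == 0) = 1;                     % boundary points go to class 1, as in svmdecision
Kmm = quadratic_kernel(sv, sv);        % kernel on the training data itself: m-by-m
```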

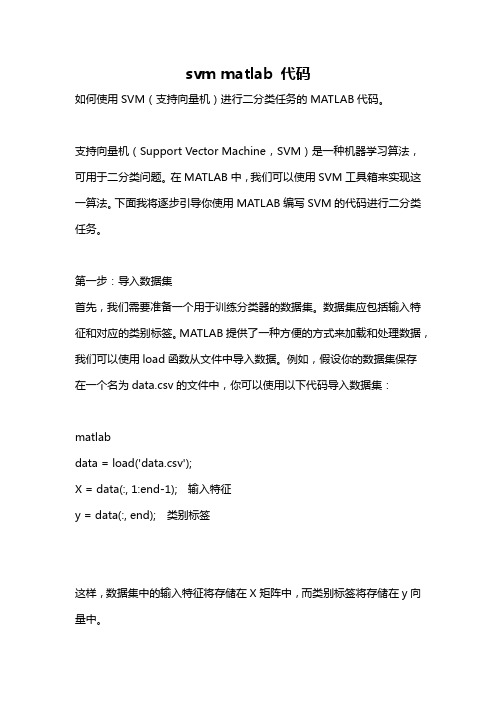

SVM MATLAB code

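How to write MATLAB code that uses an SVM (support vector machine) for a binary classification task.

A support vector machine (SVM) is a machine learning algorithm that can be used for binary classification problems.

In MATLAB, this algorithm is available through the SVM functions of the Statistics and Machine Learning Toolbox.

Below, I walk you step by step through writing MATLAB code for an SVM binary classification task.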

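Step 1: Import the data set. First, we need to prepare a data set for training the classifier.

The data set should contain the input features and the corresponding class labels.

MATLAB makes loading and processing data convenient: we can use the load function to import the data from a file.

For example, if your data set is stored in a numeric file named data.csv with the class label in the last column, you can import it with the following code:

```matlab
data = load('data.csv');
X = data(:, 1:end-1);  % input features
y = data(:, end);      % class labels
```

The input features are then stored in the matrix X and the class labels in the vector y.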

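Step 2: Split the data set. Before training the SVM classifier, we need to split the data set into a training set and a test set.

The training set is used to train the classifier, and the test set is used to evaluate its performance.

MATLAB provides the convenient function cvpartition for this step.

Here is an example:

```matlab
cv = cvpartition(y, 'HoldOut', 0.2);  % hold out 20% of the data as the test set
X_train = X(training(cv), :);         % training-set input features
y_train = y(training(cv), :);         % training-set class labels
X_test = X(test(cv), :);              % test-set input features
y_test = y(test(cv), :);              % test-set class labels
```

The training and test sets are now ready.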
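To show what a hold-out split does, here is a minimal base-MATLAB sketch that builds the same kind of training/test masks with randperm. The data is made up, and unlike cvpartition(y,'HoldOut',0.2), which stratifies by the class labels in y, this sketch draws the test rows without stratification.

```matlab
% Made-up data standing in for X and y from Step 1.
X = rand(50, 3);
y = [ones(25, 1); -ones(25, 1)];

holdoutFrac = 0.2;               % fraction of rows held out for testing
n = size(X, 1);
nTest = round(holdoutFrac * n);  % 10 of the 50 rows
perm = randperm(n);              % random order of the row indices
testIdx = false(n, 1);
testIdx(perm(1:nTest)) = true;   % logical mask, playing the role of test(cv)
trainIdx = ~testIdx;             % its complement, playing the role of training(cv)

X_train = X(trainIdx, :);  y_train = y(trainIdx);
X_test  = X(testIdx, :);   y_test  = y(testIdx);
```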

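Step 3: Train the SVM classifier. With MATLAB's SVM functions, training an SVM classifier takes a single call.

The following example uses a linear kernel:

```matlab
SVMModel = fitcsvm(X_train, y_train, 'KernelFunction', 'linear');
```

Here, the fitcsvm function trains the SVM classifier.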
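A natural next step is to evaluate the classifier on the test set. Predictions would normally come from predict(SVMModel, X_test); the sketch below uses made-up label vectors in their place so that it runs in base MATLAB, and it treats +1 as the positive class (an assumption) when computing accuracy, precision and recall.

```matlab
% Made-up labels standing in for y_test and predict(SVMModel, X_test).
y_test = [1; 1; 1; -1; -1; -1; 1; -1];
y_pred = [1; -1; 1; -1; 1; 1; 1; -1];

positive = 1;                                      % assumed positive class
tp = sum(y_pred == positive & y_test == positive); % true positives
fp = sum(y_pred == positive & y_test ~= positive); % false positives
fn = sum(y_pred ~= positive & y_test == positive); % false negatives

accuracy  = mean(y_pred == y_test);
precision = tp / (tp + fp);
recall    = tp / (tp + fn);
fprintf('accuracy %.3f, precision %.3f, recall %.3f\n', accuracy, precision, recall);
```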

MATLAB code for support vector machines

If you have MATLAB 7.0 or later, you can open the source code with the commands below (svmpredict is not a MathWorks function; it comes from the LIBSVM MATLAB interface):

```matlab
>> edit svmtrain
>> edit svmclassify
>> edit svmpredict
```

The svmtrain source (2004 version):

```matlab
function [svm_struct, svIndex] = svmtrain(training, groupnames, varargin)
%SVMTRAIN trains a support vector machine classifier
%
%   SVMStruct = SVMTRAIN(TRAINING,GROUP) trains a support vector machine
%   classifier using data TRAINING taken from two groups given by GROUP.
%   SVMStruct contains information about the trained classifier that is
%   used by SVMCLASSIFY for classification. GROUP is a column vector of
%   values of the same length as TRAINING that defines two groups. Each
%   element of GROUP specifies the group the corresponding row of TRAINING
%   belongs to. GROUP can be a numeric vector, a string array, or a cell
%   array of strings. SVMTRAIN treats NaNs or empty strings in GROUP as
%   missing values and ignores the corresponding rows of TRAINING.
%
%   SVMTRAIN(...,'KERNEL_FUNCTION',KFUN) allows you to specify the kernel
%   function KFUN used to map the training data into kernel space. The
%   default kernel function is the dot product. KFUN can be one of the
%   following strings or a function handle:
%
%       'linear'      Linear kernel or dot product
%       'quadratic'   Quadratic kernel
%       'polynomial'  Polynomial kernel (default order 3)
%       'rbf'         Gaussian Radial Basis Function kernel
%       'mlp'         Multilayer Perceptron kernel (default scale 1)
%       function      A kernel function specified using @,
%                     for example @KFUN, or an anonymous function
%
%   A kernel function must be of the form
%
%         function K = KFUN(U, V)
%
%   The returned value, K, is a matrix of size M-by-N, where U and V have M
%   and N rows respectively. If KFUN is parameterized, you can use
%   anonymous functions to capture the problem-dependent parameters. For
%   example, suppose that your kernel function is
%
%       function k = kfun(u,v,p1,p2)
%       k = tanh(p1*(u*v')+p2);
%
%   You can set values for p1 and p2 and then use an anonymous function:
%       @(u,v) kfun(u,v,p1,p2).
%
%   SVMTRAIN(...,'POLYORDER',ORDER) allows you to specify the order of a
%   polynomial kernel. The default order is 3.
%
%   SVMTRAIN(...,'MLP_PARAMS',[P1 P2]) allows you to specify the
%   parameters of the Multilayer Perceptron (mlp) kernel. The mlp kernel
%   requires two parameters, P1 and P2, where K = tanh(P1*U*V' + P2) and P1
%   > 0 and P2 < 0. Default values are P1 = 1 and P2 = -1.
%
%   SVMTRAIN(...,'METHOD',METHOD) allows you to specify the method used
%   to find the separating hyperplane. Options are
%
%       'QP'  Use quadratic programming (requires the Optimization Toolbox)
%       'LS'  Use least-squares method
%
%   If you have the Optimization Toolbox, then the QP method is the default
%   method. If not, the only available method is LS.
%
%   SVMTRAIN(...,'QUADPROG_OPTS',OPTIONS) allows you to pass an OPTIONS
%   structure created using OPTIMSET to the QUADPROG function when using
%   the 'QP' method. See help optimset for more details.
%
%   SVMTRAIN(...,'SHOWPLOT',true), when used with two-dimensional data,
%   creates a plot of the grouped data and plots the separating line for
%   the classifier.
%
%   Example:
%       % Load the data and select features for classification
%       load fisheriris
%       data = [meas(:,1), meas(:,2)];
%       % Extract the Setosa class
%       groups = ismember(species,'setosa');
%       % Randomly select training and test sets
%       [train, test] = crossvalind('holdOut',groups);
%       cp = classperf(groups);
%       % Use a linear support vector machine classifier
%       svmStruct = svmtrain(data(train,:),groups(train),'showplot',true);
%       classes = svmclassify(svmStruct,data(test,:),'showplot',true);
%       % See how well the classifier performed
%       classperf(cp,classes,test);
%       cp.CorrectRate
%
%   See also CLASSIFY, KNNCLASSIFY, QUADPROG, SVMCLASSIFY.

%   Copyright 2004 The MathWorks, Inc.
%   $Revision: 1.1.12.1 $  $Date: 2004/12/24 20:43:35 $

%   References:
%     [1] Kecman, V, Learning and Soft Computing,
%         MIT Press, Cambridge, MA. 2001.
%     [2] Suykens, J.A.K., Van Gestel, T., De Brabanter, J., De Moor, B.,
%         Vandewalle, J., Least Squares Support Vector Machines,
%         World Scientific, Singapore, 2002.
%     [3] Scholkopf, B., Smola, A.J., Learning with Kernels,
%         MIT Press, Cambridge, MA. 2002.

%
%   SVMTRAIN(...,'KFUNARGS',ARGS) allows you to pass additional
%   arguments to kernel functions.

% set defaults
plotflag = false;
qp_opts = [];
kfunargs = {};
setPoly = false; usePoly = false;
setMLP = false; useMLP = false;
if ~isempty(which('quadprog'))
    useQuadprog = true;
else
    useQuadprog = false;
end
% set default kernel function
kfun = @linear_kernel;

% check inputs
if nargin < 2
    error(nargchk(2,Inf,nargin))
end

numoptargs = nargin -2;
optargs = varargin;

% grp2idx sorts a numeric grouping var ascending, and a string grouping
% var by order of first occurrence
[g,groupString] = grp2idx(groupnames);

% check group is a vector -- though char input is special...
if ~isvector(groupnames) && ~ischar(groupnames)
    error('Bioinfo:svmtrain:GroupNotVector',...
        'Group must be a vector.');
end

% make sure that the data is correctly oriented.
if size(groupnames,1) == 1
    groupnames = groupnames';
end
% make sure data is the right size
n = length(groupnames);
if size(training,1) ~= n
    if size(training,2) == n
        training = training';
    else
        error('Bioinfo:svmtrain:DataGroupSizeMismatch',...
            'GROUP and TRAINING must have the same number of rows.')
    end
end

% NaNs are treated as unknown classes and are removed from the training
% data
nans = find(isnan(g));
if length(nans) > 0
    training(nans,:) = [];
    g(nans) = [];
end
ngroups = length(groupString);
if ngroups > 2
    error('Bioinfo:svmtrain:TooManyGroups',...
        'SVMTRAIN only supports classification into two groups.\nGROUP contains %d different groups.',ngroups)
end
% convert to 1, -1.
g = 1 - (2* (g-1));

% handle optional arguments
if numoptargs >= 1
    if rem(numoptargs,2)== 1
        error('Bioinfo:svmtrain:IncorrectNumberOfArguments',...
            'Incorrect number of arguments to %s.',mfilename);
    end
    okargs = {'kernel_function','method','showplot','kfunargs','quadprog_opts','polyorder','mlp_params'};
    for j=1:2:numoptargs
        pname = optargs{j};
        pval = optargs{j+1};
        k = strmatch(lower(pname), okargs);%#ok
        if isempty(k)
            error('Bioinfo:svmtrain:UnknownParameterName',...
                'Unknown parameter name: %s.',pname);
        elseif length(k)>1
            error('Bioinfo:svmtrain:AmbiguousParameterName',...
                'Ambiguous parameter name: %s.',pname);
        else
            switch(k)
                case 1 % kernel_function
                    if ischar(pval)
                        okfuns = {'linear','quadratic',...
                            'radial','rbf','polynomial','mlp'};
                        funNum = strmatch(lower(pval), okfuns);%#ok
                        if isempty(funNum)
                            funNum = 0;
                        end
                        switch funNum  %maybe make this less strict in the future
                            case 1
                                kfun = @linear_kernel;
                            case 2
                                kfun = @quadratic_kernel;
                            case {3,4}
                                kfun = @rbf_kernel;
                            case 5
                                kfun = @poly_kernel;
                                usePoly = true;
                            case 6
                                kfun = @mlp_kernel;
                                useMLP = true;
                            otherwise
                                error('Bioinfo:svmtrain:UnknownKernelFunction',...
                                    'Unknown Kernel Function %s.',kfun);
                        end
                    elseif isa (pval, 'function_handle')
                        kfun = pval;
                    else
                        error('Bioinfo:svmtrain:BadKernelFunction',...
                            'The kernel function input does not appear to be a function handle\nor valid function name.')
                    end
                case 2  % method
                    if strncmpi(pval,'qp',2)
                        useQuadprog = true;
                        if isempty(which('quadprog'))
                            warning('Bioinfo:svmtrain:NoOptim',...
                                'The Optimization Toolbox is required to use the quadratic programming method.')
                            useQuadprog = false;
                        end
                    elseif strncmpi(pval,'ls',2)
                        useQuadprog = false;
                    else
                        error('Bioinfo:svmtrain:UnknownMethod',...
                            'Unknown method option %s. Valid methods are ''QP'' and ''LS''',pval);
                    end
                case 3 % display
                    if pval ~= 0
                        if size(training,2) == 2
                            plotflag = true;
                        else
                            warning('Bioinfo:svmtrain:OnlyPlot2D',...
                                'The display option can only plot 2D training data.')
                        end
                    end
                case 4 % kfunargs
                    if iscell(pval)
                        kfunargs = pval;
                    else
                        kfunargs = {pval};
                    end
                case 5 % quadprog_opts
                    if isstruct(pval)
                        qp_opts = pval;
                    elseif iscell(pval)
                        qp_opts = optimset(pval{:});
                    else
                        error('Bioinfo:svmtrain:BadQuadprogOpts',...
                            'QUADPROG_OPTS must be an opts structure.');
                    end
                case 6 % polyorder
                    if ~isscalar(pval) || ~isnumeric(pval)
                        error('Bioinfo:svmtrain:BadPolyOrder',...
                            'POLYORDER must be a scalar value.');
                    end
                    if pval ~=floor(pval) || pval < 1
                        error('Bioinfo:svmtrain:PolyOrderNotInt',...
                            'The order of the polynomial kernel must be a positive integer.')
                    end
                    kfunargs = {pval};
                    setPoly = true;
                case 7 % mlpparams
                    if numel(pval)~=2
                        error('Bioinfo:svmtrain:BadMLPParams',...
                            'MLP_PARAMS must be a two element array.');
                    end
                    if ~isscalar(pval(1)) || ~isscalar(pval(2))
                        error('Bioinfo:svmtrain:MLPParamsNotScalar',...
                            'The parameters of the multi-layer perceptron kernel must be scalar.');
                    end
                    kfunargs = {pval(1),pval(2)};
                    setMLP = true;
            end
        end
    end
end
if setPoly && ~usePoly
    warning('Bioinfo:svmtrain:PolyOrderNotPolyKernel',...
        'You specified a polynomial order but not a polynomial kernel');
end
if setMLP && ~useMLP
    warning('Bioinfo:svmtrain:MLPParamNotMLPKernel',...
        'You specified MLP parameters but not an MLP kernel');
end
% plot the data if requested
if plotflag
    [hAxis,hLines] = svmplotdata(training,g);
    legend(hLines,cellstr(groupString));
end

% calculate kernel function
try
    kx = feval(kfun,training,training,kfunargs{:});
    % ensure function is symmetric
    kx = (kx+kx')/2;
catch
    error('Bioinfo:svmtrain:UnknownKernelFunction',...
        'Error calculating the kernel function:\n%s\n', lasterr);
end
% create Hessian
% add small constant eye to force stability
H =((g*g').*kx) + sqrt(eps(class(training)))*eye(n);

if useQuadprog
    % The large scale solver cannot handle this type of problem, so turn it
    % off.
    qp_opts = optimset(qp_opts,'LargeScale','Off');
    % X=QUADPROG(H,f,A,b,Aeq,beq,LB,UB,X0,opts)
    alpha = quadprog(H,-ones(n,1),[],[],...
        g',0,zeros(n,1),inf *ones(n,1),zeros(n,1),qp_opts);

    % The support vectors are the non-zeros of alpha
    svIndex = find(alpha > sqrt(eps));
    sv = training(svIndex,:);

    % calculate the parameters of the separating line from the support
    % vectors.
    alphaHat = g(svIndex).*alpha(svIndex);

    % Calculate the bias by applying the indicator function to the support
    % vector with largest alpha.
    [maxAlpha,maxPos] = max(alpha); %#ok
    bias = g(maxPos) - sum(alphaHat.*kx(svIndex,maxPos));
    % an alternative method is to average the values over all support vectors
    % bias = mean(g(sv)' - sum(alphaHat(:,ones(1,numSVs)).*kx(sv,sv)));

    % An alternative way to calculate support vectors is to look for zeros of
    % the Lagrangians (fifth output from QUADPROG).
    %
    % [alpha,fval,output,exitflag,t] = quadprog(H,-ones(n,1),[],[],...
    %             g',0,zeros(n,1),inf *ones(n,1),zeros(n,1),opts);
    %
    % sv = t.lower < sqrt(eps) & t.upper < sqrt(eps);
else  % Least-Squares
    % now build up compound matrix for solver
    A = [0 g';g,H];
    b = [0;ones(size(g))];
    x = A\b;

    % calculate the parameters of the separating line from the support
    % vectors.
    sv = training;
    bias = x(1);
    alphaHat = g.*x(2:end);
end

svm_struct.SupportVectors = sv;
svm_struct.Alpha = alphaHat;
svm_struct.Bias = bias;
svm_struct.KernelFunction = kfun;
svm_struct.KernelFunctionArgs = kfunargs;
svm_struct.GroupNames = groupnames;
svm_struct.FigureHandles = [];
if plotflag
    hSV = svmplotsvs(hAxis,svm_struct);
    svm_struct.FigureHandles = {hAxis,hLines,hSV};
end
```
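The least-squares ('LS') branch of svmtrain above reduces training to a single linear solve. The base-MATLAB sketch below replays that branch on the six toy points from the kernel-comparison example, with the quadratic kernel written inline as an anonymous function so that no toolbox is needed. It mirrors the formulas in the listing but is an illustration, not MathWorks code.

```matlab
% Toy two-class data: the six points from the kernel-comparison example.
training = [1 0; 0 1; 2 2; -1 -1; 0 0; 1 1];
g = [1; 1; 1; 1; -1; -1];             % labels already converted to +1/-1
n = numel(g);

kfun = @(U, V) (U*V') .* (1 + U*V');  % quadratic kernel, as in quadratic_kernel
kx = kfun(training, training);
kx = (kx + kx') / 2;                   % ensure the kernel matrix is symmetric

% Hessian with a small ridge on the diagonal, as in svmtrain
H = (g*g') .* kx + sqrt(eps(class(training))) * eye(n);

% Least-squares branch: solve the bordered linear system A*x = b
A = [0 g'; g H];
b = [0; ones(n, 1)];
x = A \ b;
bias = x(1);
alphaHat = g .* x(2:end);              % every training point is kept as a support vector

% Decision values on the training points (same formula as svmdecision)
f = kfun(training, training)' * alphaHat + bias;
pred = sign(f);
pred(pred == 0) = 1;
disp([g pred g.*f]);
```

Because the ridge sqrt(eps) is tiny, the LS solution nearly interpolates the labels on these points, so g.*f should come out very close to 1 for every row.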

SVM code in MATLAB

```matlab
% NET = SVM(NIN, KERNEL, KERNELPAR, C, USE2NORM, QPSOLVER, QPSIZE)
% (All parameters from KERNELPAR on are optional).
% Initialise a structure NET containing the basic settings for a Support
% Vector Machine (SVM) classifier. The SVM is assumed to have input of
% dimension NIN, it works with kernel function KERNEL. If the kernel
% function needs extra parameters, these must be given in the array
% KERNELPAR. See function SVMKERNEL for a list of valid kernel
% functions.
%
% The structure NET has the following fields:
% Basic SVM parameters:
%   'type'      = 'svm'
%   'nin'       = NIN        number of input dimensions
%   'nout'      = 1          number of output dimensions
%   'kernel'    = KERNEL     kernel function
%   'kernelpar' = KERNELPAR  parameters for the kernel function
%   'c'         = C  Upper bound for the coefficients NET.alpha during
%       training. Depending on the size of NET.c, the value is
%       interpreted as follows:
%       LENGTH(NET.c)==1: Upper bound for all coefficients.
%       LENGTH(NET.c)==2: Different upper bounds for positive (+1) and
%         negative (-1) examples. NET.c(1) is the bound for the positive,
%         NET.c(2) is the bound for the negative examples.
%       LENGTH(NET.c)==N, where N is the number of examples that are
%         passed to SVMTRAIN: NET.c(i) is the upper bound for the
%         coefficient NET.alpha(i) associated with example i.
%       Default value: 1
%   'use2norm'  = USE2NORM: If non-zero, the training procedure will use
%       an objective function that involves the 2norm of the errors on
%       the training points, otherwise the 1norm is used (standard
%       SVM). Default value: 0.
%
% Fields that will be set during training with SVMTRAIN:
%   'nbexamples' = Number of training examples
%   'alpha'   = After training, this field contains a column vector with
%       coefficients (weights) for each training example. NET.alpha is
%       not used in any subsequent SVM routines, it can be removed after
%       training.
%   'svind'   = After training, this field contains the indices of those
%       training examples that are Support Vectors (those with a large
%       enough value of alpha)
%   'sv'      = Contains all the training examples that are Support Vectors.
%   'svcoeff' = After training, this field is the product of NET.alpha
%       times the label of the corresponding training example, for all
%       examples that are Support Vectors. It is given in the same order
%       as the examples are given in NET.sv.
%   'bias'    = The linear term of the SVM decision function.
%   'normalw' = Normal vector of the hyperplane that separates the
%       examples. This is only computed if a linear kernel
%       NET.kernel='linear' is used.
%
% Parameters specifically for SVMTRAIN (rarely need to be changed):
%   'qpsolver' = QPSOLVER. QPSOLVER must be one of 'quadprog', 'loqo',
%       'qp' or empty for auto-detect. Name of the function that solves
%       the quadratic programming problems in SVMTRAIN.
%       Default value: empty (auto-detect).
%   'qpsize'   = QPSIZE. The maximum number of points given to the QP
%       solver. Default value: 50.
%   'alphatol' = Tolerance for all comparisons that involve the
%       coefficients NET.alpha. Default value: 1E-2.
%   'kkttol'   = Tolerance for checking the KKT conditions (termination
%       criterion) Default value: 5E-2. Lower this when high precision is
%       required.
```

MATLAB fitcsvm parameters

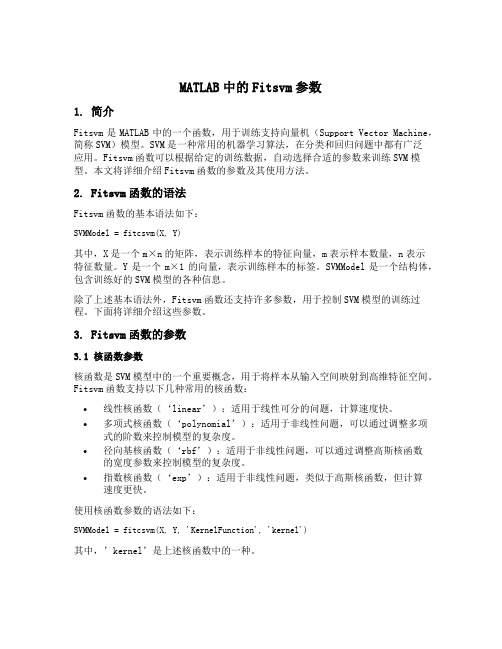

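1. Introduction

fitcsvm is a MATLAB function (in the Statistics and Machine Learning Toolbox) for training support vector machine (SVM) classification models.

SVM is a widely used machine learning algorithm for both classification and regression; fitcsvm covers classification, while MATLAB's fitrsvm covers regression.

Given training data, fitcsvm trains an SVM model using default values for any parameter you do not set; some parameters can be chosen automatically, for example with 'KernelScale','auto' or with the 'OptimizeHyperparameters' option.

This section describes the parameters of fitcsvm and how to use them.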

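2. Syntax of fitcsvm

The basic syntax of fitcsvm is SVMModel = fitcsvm(X, Y), where X is an m×n matrix of training feature vectors, with m the number of samples and n the number of features.

Y is an m×1 vector of training labels.

SVMModel is the trained model, a ClassificationSVM object that stores information about the trained SVM (for example its support vectors and bias).

Besides this basic syntax, fitcsvm accepts many name-value arguments that control how the SVM is trained.

These are described below.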

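3. Parameters of fitcsvm

3.1 Kernel function. The kernel function is a central concept in SVM models: it maps samples from the input space into a higher-dimensional feature space (implicitly, through inner products).

fitcsvm supports the following commonly used kernels:

• Linear kernel ('linear'): suited to linearly separable problems; fast to compute. It is the default for two-class learning.

• Polynomial kernel ('polynomial'): suited to nonlinear problems; the order of the polynomial, set with 'PolynomialOrder' (default 3), controls model complexity.

• Gaussian radial basis function kernel ('gaussian' or 'rbf'): suited to nonlinear problems; the kernel width, set with 'KernelScale', controls model complexity.

• fitcsvm has no built-in exponential ('exp') kernel; a kernel of that kind has to be written as a custom kernel function and passed to 'KernelFunction' by name.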

The syntax for choosing a kernel is SVMModel = fitcsvm(X, Y, 'KernelFunction', 'kernel'), where 'kernel' is the name of one of the kernels above.
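To see what the built-in kernels compute, here is a base-MATLAB sketch of their Gram matrices, following the kernel formulas given in the fitcsvm documentation: linear x1'x2, polynomial (1 + x1'x2)^q, and Gaussian exp(-||x1 - x2||^2), each applied after dividing the predictors by 'KernelScale'. The data, the scale s and the order q are assumed values; this is an illustration, not fitcsvm's internal code.

```matlab
X = [1 0; 0 1; 2 2];   % made-up predictors, one row per observation
s = 1.5;               % assumed KernelScale
q = 3;                 % assumed PolynomialOrder

Xs = X / s;                     % predictors divided by the kernel scale
G0 = Xs * Xs';                  % pairwise inner products
sq = sum(Xs.^2, 2);             % squared norms, one per row
D2 = sq + sq' - 2*G0;           % pairwise squared Euclidean distances

Klin   = G0;                    % 'linear'
Kpoly  = (1 + G0).^q;           % 'polynomial'
Kgauss = exp(-max(D2, 0));      % 'gaussian' / 'rbf' (max guards tiny negative round-off)

disp(Kgauss);
```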

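3.2 Penalty parameter. The penalty parameter C balances model complexity against training error; in fitcsvm it is set with the 'BoxConstraint' name-value argument (default 1).

A smaller C gives a simpler model but may produce a higher training error; a larger C gives a more complex model that may overfit the training data.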
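The role of C is easiest to see in the standard soft-margin SVM problem, where it multiplies the total slack ξ allowed for margin violations (x_i and y_i are the training samples and their ±1 labels, w and b the hyperplane parameters):

$$
\min_{w,\,b,\,\xi}\ \frac{1}{2}\lVert w\rVert^{2} + C\sum_{i=1}^{m}\xi_i
\quad\text{subject to}\quad y_i\,(w^{\top}x_i + b) \ge 1 - \xi_i,\qquad \xi_i \ge 0 .
$$

In the dual problem the same C becomes an upper bound on every coefficient,

$$
0 \le \alpha_i \le C,\qquad \sum_{i=1}^{m}\alpha_i y_i = 0 ,
$$

which is the box on the dual coefficients that fitcsvm's 'BoxConstraint' option sets. A small C makes slack cheap (wider margin, more training errors tolerated); a large C makes slack expensive and approaches the hard-margin SVM.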

MATLAB implementation of an SVM algorithm for classification

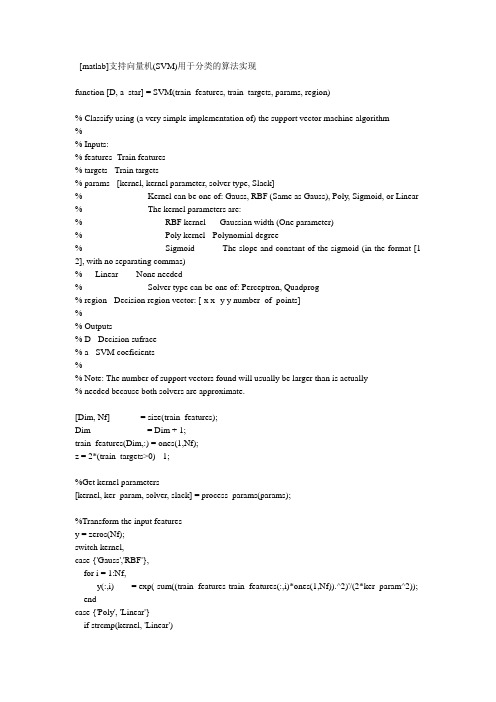

```matlab
function [D, a_star] = SVM(train_features, train_targets, params, region)

% Classify using (a very simple implementation of) the support vector machine algorithm
%
% Inputs:
%   features - Train features
%   targets  - Train targets
%   params   - [kernel, kernel parameter, solver type, Slack]
%              Kernel can be one of: Gauss, RBF (Same as Gauss), Poly, Sigmoid, or Linear
%              The kernel parameters are:
%                RBF kernel  - Gaussian width (One parameter)
%                Poly kernel - Polynomial degree
%                Sigmoid     - The slope and constant of the sigmoid (in the format [1 2], with no separating commas)
%                Linear      - None needed
%              Solver type can be one of: Perceptron, Quadprog, Lagrangian
%   region   - Decision region vector: [-x x -y y number_of_points]
%
% Outputs
%   D - Decision surface
%   a - SVM coefficients
%
% Note: The number of support vectors found will usually be larger than is actually
% needed because both solvers are approximate.

[Dim, Nf]       = size(train_features);
Dim             = Dim + 1;
train_features(Dim,:) = ones(1,Nf);   % append a constant row
z               = 2*(train_targets>0) - 1;

%Get kernel parameters
[kernel, ker_param, solver, slack] = process_params(params);

%Transform the input features
y = zeros(Nf);
switch kernel,
case {'Gauss','RBF'},
    for i = 1:Nf,
        y(:,i) = exp(-sum((train_features-train_features(:,i)*ones(1,Nf)).^2)'/(2*ker_param^2));
    end
case {'Poly', 'Linear'}
    if strcmp(kernel, 'Linear')
        ker_param = 1;
    end
    for i = 1:Nf,
        y(:,i) = (train_features'*train_features(:,i) + 1).^ker_param;
    end
case 'Sigmoid'
    if (length(ker_param) ~= 2)
        error('This kernel needs two parameters to operate!')
    end
    for i = 1:Nf,
        y(:,i) = tanh(train_features'*train_features(:,i)*ker_param(1)+ker_param(2));
    end
otherwise
    error('Unknown kernel. Can be Gauss, Linear, Poly, or Sigmoid.')
end

%Find the SVM coefficients
switch solver
case 'Quadprog'
    %Quadratic programming
    if ~isfinite(slack)
        alpha_star = quadprog((z'*z).*(y'*y), -ones(1, Nf), [], [], z, 0, 0)';
    else
        alpha_star = quadprog((z'*z).*(y'*y), -ones(1, Nf), [], [], z, 0, 0, slack)';
    end
    a_star = (alpha_star.*z)*y';

    %Find the bias
    in = find((alpha_star > 0) & (alpha_star < slack));
    if isempty(in),
        bias = 0;
    else
        B = z(in) - a_star * y(:,in);
        bias = mean(B);   % B already holds one value per free support vector in "in"
    end

case 'Perceptron'
    max_iter = 1e5;
    iter     = 0;
    rate     = 0.01;
    xi       = ones(1,Nf)/Nf*slack;

    if ~isfinite(slack),
        slack = 0;
    end

    %Find a start point
    processed_y = [y; ones(1,Nf)] .* (ones(Nf+1,1)*z);
    a_star      = mean(processed_y')';

    while ((sum(sign(a_star'*processed_y+xi)~=1)>0) & (iter < max_iter))
        iter = iter + 1;
        if (iter/5000 == floor(iter/5000)),
            disp(['Working on iteration number ' num2str(iter)])
        end

        %Find the worst classified sample (that farthest from the border)
        dist = a_star'*processed_y+xi;
        [m, indice] = min(dist);
        a_star = a_star + rate*processed_y(:,indice);

        %Calculate the new slack vector
        xi(indice) = xi(indice) + rate;
        xi = xi / sum(xi) * slack;
    end

    if (iter == max_iter),
        disp(['Maximum iteration (' num2str(max_iter) ') reached']);
    else
        disp(['Converged after ' num2str(iter) ' iterations.'])
    end

    bias   = 0;
    a_star = a_star(1:Nf)';

case 'Lagrangian'
    %Lagrangian SVM (See Mangasarian & Musicant, Lagrangian Support Vector Machines)
    tol      = 1e-5;
    max_iter = 1e5;
    nu       = 1/Nf;
    iter     = 0;

    D     = diag(z);
    alpha = 1.9/nu;

    e    = ones(Nf,1);
    I    = speye(Nf);
    Q    = I/nu + D*y'*D;
    P    = inv(Q);
    u    = P*e;
    oldu = u + 1;

    while ((iter<max_iter) & (sum(sum((oldu-u).^2)) > tol)),
        iter = iter + 1;
        if (iter/5000 == floor(iter/5000)),
            disp(['Working on iteration number ' num2str(iter)])
        end
        oldu = u;
        f    = Q*u-1-alpha*u;
        u    = P*(1+(abs(f)+f)/2);
    end

    a_star = y*D*u(1:Nf);
    bias   = -e'*D*u;

otherwise
    error('Unknown solver. Can be Quadprog, Perceptron, or Lagrangian')
end

%Find support vectors
sv  = find(abs(a_star) > 1e-10);
Nsv = length(sv);
if isempty(sv),
    error('No support vectors found');
else
    disp(['Found ' num2str(Nsv) ' support vectors'])
end

%Margin
b = 1/sqrt(sum(a_star.^2));
disp(['The margin is ' num2str(b)])

%Now build the decision region
N  = region(5);
xx = linspace (region(1),region(2),N);
yy = linspace (region(3),region(4),N);
D  = zeros(N);

for j = 1:N,
    y = zeros(N,1);
    for i = 1:Nsv,
        data = [xx(j)*ones(1,N); yy; ones(1,N)];
        % a_star(sv(i)) is the coefficient of the i-th support vector
        switch kernel,
        case {'Gauss','RBF'},
            y = y + a_star(sv(i)) * exp(-sum((data-train_features(:,sv(i))*ones(1,N)).^2)'/(2*ker_param^2));
        case {'Poly', 'Linear'}
            y = y + a_star(sv(i)) * (data'*train_features(:,sv(i))+1).^ker_param;
        case 'Sigmoid'
            y = y + a_star(sv(i)) * tanh(data'*train_features(:,sv(i))*ker_param(1)+ker_param(2));
        end
    end
    D(:,j) = (y + bias);
end
```
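The Gaussian branch of SVM.m fills the kernel matrix one column at a time. The base-MATLAB sketch below, with made-up data and an assumed width ker_param, compares that loop with a vectorized version based on ||a - b||^2 = ||a||^2 + ||b||^2 - 2a'b. The constant row of ones that SVM.m appends to train_features cancels in the differences, so it is omitted here.

```matlab
% Made-up data in the same layout as SVM.m: one column per sample.
train_features = [1 0 2 -1; 0 1 2 -1];
[~, Nf] = size(train_features);
ker_param = 0.8;                      % assumed Gaussian width

% Loop version, as in SVM.m
yLoop = zeros(Nf);
for i = 1:Nf
    yLoop(:,i) = exp(-sum((train_features - train_features(:,i)*ones(1,Nf)).^2)' / (2*ker_param^2));
end

% Vectorized version: squared distances between all pairs of columns
sq = sum(train_features.^2, 1);                           % 1-by-Nf squared norms
D2 = sq' + sq - 2*(train_features' * train_features);    % Nf-by-Nf squared distances
yVec = exp(-max(D2, 0) / (2*ker_param^2));

fprintf('max |loop - vectorized| = %g\n', max(abs(yLoop(:) - yVec(:))));
```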

SVM cross-validation MATLAB code

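The following is an example of MATLAB code that uses cross-validation to tune a support vector machine (SVM):

```matlab
% Load the data set
load fisheriris
% fitcsvm trains binary classifiers only, so keep two of the three species
% (versicolor vs. virginica); for all three species, fitcecoc with SVM
% learners is the usual route.
keep = ~strcmp(species, 'setosa');
X = meas(keep, 3:4);
Y = species(keep);
% Set the SVM parameter grid
C = [0.01, 0.1, 1, 10];          % BoxConstraint values
kernelScale = [0.1, 1, 10];      % KernelScale values
k = 5;                           % number of cross-validation folds
% Initialize the result matrix
accuracy = zeros(length(C), length(kernelScale));
% Run cross-validation over the grid
for i = 1:length(C)
    for j = 1:length(kernelScale)
        svmModel = fitcsvm(X, Y, 'KernelFunction', 'rbf', ...
            'BoxConstraint', C(i), 'KernelScale', kernelScale(j));
        cv = crossval(svmModel, 'KFold', k);
        accuracy(i, j) = 1 - kfoldLoss(cv);   % kfoldLoss is the misclassification rate
    end
end
% Find the best parameter combination
[maxAcc, idx] = max(accuracy(:));
[optCIdx, optScaleIdx] = ind2sub(size(accuracy), idx);
optC = C(optCIdx);
optScale = kernelScale(optScaleIdx);
% Print the best parameters and accuracy
fprintf('Best parameters: C = %.2f, KernelScale = %.2f\n', optC, optScale);
fprintf('Best accuracy: %.2f%%\n', maxAcc * 100);
```

This code first loads the classic Fisher iris data set (Fisher's Iris Dataset) and keeps two of its species, then defines the grid of SVM parameters C and kernel scale and sets the number of cross-validation folds k.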
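The grid search passes its kernel values to fitcsvm's 'KernelScale' option. Following the fitcsvm documentation, the predictors are divided by the kernel scale s before exp(-||x - z||^2) is applied, which equals exp(-||x - z||^2 / s^2). A gamma in the exp(-gamma*||x - z||^2) convention (used, for example, by LIBSVM) therefore corresponds to s = 1/sqrt(gamma). The base-MATLAB sketch below converts a few gamma values and checks the equivalence numerically on two made-up points.

```matlab
gammaVals = [0.1, 1, 10];             % gamma in the exp(-gamma*||x - z||^2) convention
kernelScale = 1 ./ sqrt(gammaVals);   % corresponding 'KernelScale' values

% Numerical check of the equivalence on two made-up points
x = [1.0 2.0];
z = [0.5 -1.0];
maxDiff = 0;
for t = 1:numel(gammaVals)
    kGamma = exp(-gammaVals(t) * sum((x - z).^2));
    s = kernelScale(t);
    kScale = exp(-sum((x/s - z/s).^2));   % divide by the scale, then exp(-||.||^2)
    maxDiff = max(maxDiff, abs(kGamma - kScale));
    fprintf('gamma = %5.2f  ->  KernelScale = %.4f\n', gammaVals(t), s);
end
```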

MATLAB code for intelligent algorithms

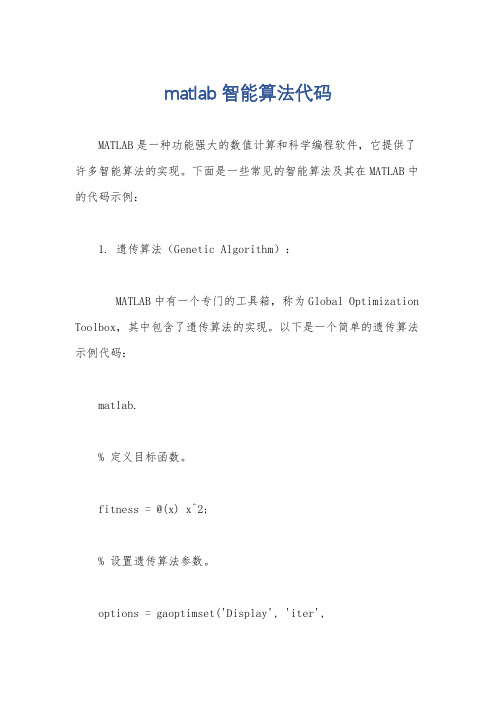

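MATLAB is a powerful environment for numerical computing and scientific programming, and it provides implementations of many intelligent (metaheuristic) algorithms.

Below are some common intelligent algorithms with example MATLAB code:

1. Genetic algorithm (GA): MATLAB has a dedicated toolbox, the Global Optimization Toolbox, that includes a genetic algorithm implementation.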

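Here is a simple genetic algorithm example:

```matlab
% Define the objective function
fitness = @(x) x^2;
% Set the genetic algorithm options
options = gaoptimset('Display', 'iter', 'PopulationSize', 50);
% Run the genetic algorithm on 1 variable; the empty arguments are the
% unused A, b, Aeq, beq, lb, ub and nonlcon inputs
[x, fval] = ga(fitness, 1, [], [], [], [], [], [], [], options);
```

2. Particle swarm optimization (PSO): the same Global Optimization Toolbox also includes a particle swarm optimization implementation.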

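Here is a simple particle swarm optimization example:

```matlab
% Define the objective function
fitness = @(x) x^2;
% Set the particle swarm options
options = optimoptions('particleswarm', 'Display', 'iter', 'SwarmSize', 50);
% Run particle swarm optimization on 1 variable with no bounds
[x, fval] = particleswarm(fitness, 1, [], [], options);
```

3. Support vector machine (SVM): MATLAB's machine learning toolbox, the Statistics and Machine Learning Toolbox, contains an SVM implementation.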
