Hadoop Architecture

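Hadoop Principles and Architecture

Hadoop is a distributed computing framework for processing large-scale data sets.

It is an open-source project developed and maintained by the Apache Software Foundation.

Hadoop has two main components: HDFS for storage and MapReduce for computation.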

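1. HDFS

HDFS (the Hadoop Distributed File System) is Hadoop's storage layer.

It is designed to be reliable and fault-tolerant and to run on large clusters.

HDFS splits files into blocks and stores those blocks on different nodes.

Each block has multiple replicas, which provides reliability and fault tolerance.

1.1 HDFS Architecture

HDFS uses a master/slave architecture with one NameNode and multiple DataNodes.

The NameNode manages metadata such as the file system namespace, permissions, and the block mapping table; the DataNodes store the actual data blocks.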

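1.2 How HDFS Works

When a client needs to read or write a file, it first sends a request to the NameNode.

The NameNode replies with the locations of the relevant data blocks.

With that response, the client communicates directly with the DataNodes to read or write; the file data itself does not pass through the NameNode.

On a write, the client splits the file into blocks and sends them to different DataNodes for storage.

Each block is replicated, and the replicas are spread across different nodes.

If a DataNode fails, the remaining replicas are used to recover the data.

On a read, the client asks the NameNode for the block locations and then reads the blocks directly from the DataNodes.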
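The read and write flow above can be sketched as a small in-memory simulation. This is a toy model, not the HDFS client API: `ToyNameNode`, `write_file`, `read_file`, the 4-byte block size, and the round-robin placement are all assumptions made for illustration. It only shows that the NameNode holds metadata while block data goes straight to and from the DataNodes.

```python
BLOCK_SIZE = 4      # bytes; deliberately tiny so a short string spans several blocks
REPLICATION = 3     # replicas per block (assumed value)


class ToyNameNode:
    """Metadata only: which blocks make up a file and which nodes hold them."""

    def __init__(self, node_names):
        self.node_names = list(node_names)
        self.block_map = {}          # filename -> [(block_id, [node, ...]), ...]
        self.next_block_id = 0

    def allocate_block(self, filename):
        """Pick REPLICATION distinct nodes for a new block (simple round-robin)."""
        block_id = self.next_block_id
        self.next_block_id += 1
        n = len(self.node_names)     # must be >= REPLICATION for distinct targets
        targets = [self.node_names[(block_id + i) % n] for i in range(REPLICATION)]
        self.block_map.setdefault(filename, []).append((block_id, targets))
        return block_id, targets

    def locate(self, filename):
        """Return the block list and replica locations for a file."""
        return self.block_map[filename]


def write_file(namenode, datanodes, filename, data):
    """Split data into blocks and store every replica directly on its DataNode."""
    for offset in range(0, len(data), BLOCK_SIZE):
        block_id, targets = namenode.allocate_block(filename)
        for node in targets:
            datanodes[node][block_id] = data[offset:offset + BLOCK_SIZE]


def read_file(namenode, datanodes, filename, dead=frozenset()):
    """Ask the NameNode for locations, then read each block from a live replica."""
    parts = []
    for block_id, holders in namenode.locate(filename):
        node = next(h for h in holders if h not in dead)
        parts.append(datanodes[node][block_id])
    return b"".join(parts)


# Four hypothetical DataNodes, each modelled as a dict of block_id -> bytes.
datanodes = {name: {} for name in ("dn1", "dn2", "dn3", "dn4")}
namenode = ToyNameNode(datanodes)
write_file(namenode, datanodes, "a.txt", b"hello hadoop!")
recovered = read_file(namenode, datanodes, "a.txt", dead={"dn1"})
```

With one node marked dead, every block still has a live replica, so the read returns the original bytes.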

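2. MapReduce

MapReduce is Hadoop's computation layer.

It is a distributed processing framework that runs on large clusters.

MapReduce divides a job into two phases: Map and Reduce.

2.1 Map Phase

In the Map phase, the input data is divided into splits that multiple Mappers process in parallel.

Each Mapper turns its input into key-value pairs, which are passed on to the Reducers.

2.2 Reduce Phase

In the Reduce phase, the Map output is sorted and grouped by key, and each Reducer aggregates the values for its keys to produce the final output.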
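The two phases can be illustrated with a word count that runs in a single Python process. This is a sketch of the programming model only, not of Hadoop's Java API; the function names `map_phase`, `shuffle`, `reduce_phase`, and `word_count` are made up for this example, and the explicit `shuffle` step stands in for the grouping that the framework performs between Map and Reduce.

```python
from collections import defaultdict


def map_phase(line):
    """Mapper: emit a (word, 1) pair for every word in one input line."""
    for word in line.lower().split():
        yield word, 1


def shuffle(pairs):
    """Group all values by key and order the keys, as happens between the phases."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return dict(sorted(groups.items()))


def reduce_phase(key, values):
    """Reducer: aggregate all values that share one key."""
    return key, sum(values)


def word_count(lines):
    """Run map, shuffle, and reduce in sequence over an iterable of lines."""
    pairs = [pair for line in lines for pair in map_phase(line)]
    return dict(reduce_phase(key, values) for key, values in shuffle(pairs).items())
```

On a real cluster each call to `map_phase` would run on a different input split, and each key group would be handed to one of several Reducers.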

Overview of Hadoop Core Components and Setting Up a Hadoop Cluster

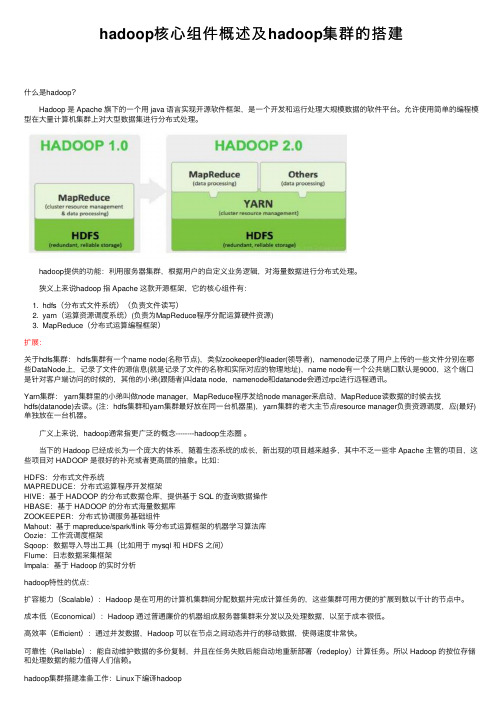

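What is Hadoop? Hadoop is an open-source software framework from Apache, implemented in Java. It is a platform for developing and running software that processes large-scale data.

It allows large data sets to be processed in a distributed way across large clusters of computers using a simple programming model.

What Hadoop provides: using a cluster of servers, it processes massive amounts of data in a distributed way according to user-defined business logic.

In the narrow sense, Hadoop means the Apache open-source framework itself. Its core components are:

1. HDFS (distributed file system): handles file reads and writes.
2. YARN (compute resource scheduling system): allocates compute hardware resources to MapReduce programs.
3. MapReduce (distributed computation programming framework).

Further notes on the HDFS cluster: an HDFS cluster has one NameNode, comparable to the leader in ZooKeeper. The NameNode records which DataNodes hold the files that users upload; that is, it keeps the file metadata (the file names and the physical locations they map to). The NameNode exposes a client-facing RPC port, commonly configured as 9000. The other nodes, the followers, are called DataNodes. The NameNode and the DataNodes communicate remotely over RPC.

YARN cluster: the worker nodes in a YARN cluster are called NodeManagers. MapReduce programs are sent to NodeManagers to be launched, and when a MapReduce program reads data, it reads from HDFS (the DataNodes).

(Note: the HDFS DataNodes and the YARN NodeManagers are best placed on the same machines.) The ResourceManager, the master node of the YARN cluster, handles resource scheduling and should preferably run on a machine of its own.

In the broad sense, Hadoop usually refers to a wider concept: the Hadoop ecosystem.

Hadoop today has grown into a large system. As the ecosystem grows, more and more new projects appear, including some that are not governed by Apache. These projects complement Hadoop well or provide higher-level abstractions over it.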

The Hadoop Distributed File System: Architecture and Design (Translation of a Foreign-Language Document)

Foreign-language translation

Original source: The Hadoop Distributed File System: Architecture and Design
Title of the Chinese translation: The Hadoop Distributed File System: Architecture and Design
Name: XXXX    Student ID: ************    April 8, 2013

English original

The Hadoop Distributed File System: Architecture and Design

Source: /docs/r0.18.3/hdfs_design.html

Introduction

The Hadoop Distributed File System (HDFS) is a distributed file system designed to run on commodity hardware. It has many similarities with existing distributed file systems. However, the differences from other distributed file systems are significant. HDFS is highly fault-tolerant and is designed to be deployed on low-cost hardware. HDFS provides high throughput access to application data and is suitable for applications that have large data sets. HDFS relaxes a few POSIX requirements to enable streaming access to file system data. HDFS was originally built as infrastructure for the Apache Nutch web search engine project. HDFS is part of the Apache Hadoop Core project. The project URL is /core/.

Assumptions and Goals

Hardware Failure

Hardware failure is the norm rather than the exception. An HDFS instance may consist of hundreds or thousands of server machines, each storing part of the file system’s data. The fact that there are a huge number of components and that each component has a non-trivial probability of failure means that some component of HDFS is always non-functional. Therefore, detection of faults and quick, automatic recovery from them is a core architectural goal of HDFS.

Streaming Data Access

Applications that run on HDFS need streaming access to their data sets. They are not general purpose applications that typically run on general purpose file systems. HDFS is designed more for batch processing rather than interactive use by users. The emphasis is on high throughput of data access rather than low latency of data access. POSIX imposes many hard requirements that are not needed for applications that are targeted for HDFS.
POSIX semantics in a few key areas has been traded to increase data throughput rates.

Large Data Sets

Applications that run on HDFS have large data sets. A typical file in HDFS is gigabytes to terabytes in size. Thus, HDFS is tuned to support large files. It should provide high aggregate data bandwidth and scale to hundreds of nodes in a single cluster. It should support tens of millions of files in a single instance.

Simple Coherency Model

HDFS applications need a write-once-read-many access model for files. A file once created, written, and closed need not be changed. This assumption simplifies data coherency issues and enables high throughput data access. A Map/Reduce application or a web crawler application fits perfectly with this model. There is a plan to support appending-writes to files in the future.

“Moving Computation is Cheaper than Moving Data”

A computation requested by an application is much more efficient if it is executed near the data it operates on. This is especially true when the size of the data set is huge. This minimizes network congestion and increases the overall throughput of the system. The assumption is that it is often better to migrate the computation closer to where the data is located rather than moving the data to where the application is running. HDFS provides interfaces for applications to move themselves closer to where the data is located.

Portability Across Heterogeneous Hardware and Software Platforms

HDFS has been designed to be easily portable from one platform to another. This facilitates widespread adoption of HDFS as a platform of choice for a large set of applications.

NameNode and DataNodes

HDFS has a master/slave architecture. An HDFS cluster consists of a single NameNode, a master server that manages the file system namespace and regulates access to files by clients. In addition, there are a number of DataNodes, usually one per node in the cluster, which manage storage attached to the nodes that they run on.
HDFS exposes a file system namespace and allows user data to be stored in files. Internally, a file is split into one or more blocks and these blocks are stored in a set of DataNodes. The NameNode executes file system namespace operations like opening, closing, and renaming files and directories. It also determines the mapping of blocks to DataNodes. The DataNodes are responsible for serving read and write requests from the file system’s clients. The DataNodes also perform block creation, deletion, and replication upon instruction from the NameNode.

The NameNode and DataNode are pieces of software designed to run on commodity machines. These machines typically run a GNU/Linux operating system (OS). HDFS is built using the Java language; any machine that supports Java can run the NameNode or the DataNode software. Usage of the highly portable Java language means that HDFS can be deployed on a wide range of machines. A typical deployment has a dedicated machine that runs only the NameNode software. Each of the other machines in the cluster runs one instance of the DataNode software. The architecture does not preclude running multiple DataNodes on the same machine but in a real deployment that is rarely the case.

The existence of a single NameNode in a cluster greatly simplifies the architecture of the system. The NameNode is the arbitrator and repository for all HDFS metadata. The system is designed in such a way that user data never flows through the NameNode.

The File System Namespace

HDFS supports a traditional hierarchical file organization. A user or an application can create directories and store files inside these directories. The file system namespace hierarchy is similar to most other existing file systems; one can create and remove files, move a file from one directory to another, or rename a file. HDFS does not yet implement user quotas or access permissions. HDFS does not support hard links or soft links.
However, the HDFS architecture does not preclude implementing these features.

The NameNode maintains the file system namespace. Any change to the file system namespace or its properties is recorded by the NameNode. An application can specify the number of replicas of a file that should be maintained by HDFS. The number of copies of a file is called the replication factor of that file. This information is stored by the NameNode.

Data Replication

HDFS is designed to reliably store very large files across machines in a large cluster. It stores each file as a sequence of blocks; all blocks in a file except the last block are the same size. The blocks of a file are replicated for fault tolerance. The block size and replication factor are configurable per file. An application can specify the number of replicas of a file. The replication factor can be specified at file creation time and can be changed later. Files in HDFS are write-once and have strictly one writer at any time.

The NameNode makes all decisions regarding replication of blocks. It periodically receives a Heartbeat and a Blockreport from each of the DataNodes in the cluster. Receipt of a Heartbeat implies that the DataNode is functioning properly. A Blockreport contains a list of all blocks on a DataNode.

Replica Placement: The First Baby Steps

The placement of replicas is critical to HDFS reliability and performance. Optimizing replica placement distinguishes HDFS from most other distributed file systems. This is a feature that needs lots of tuning and experience. The purpose of a rack-aware replica placement policy is to improve data reliability, availability, and network bandwidth utilization. The current implementation for the replica placement policy is a first effort in this direction.
The short-term goals of implementing this policy are to validate it on production systems, learn more about its behavior, and build a foundation to test and research more sophisticated policies.

Large HDFS instances run on a cluster of computers that commonly spread across many racks. Communication between two nodes in different racks has to go through switches. In most cases, network bandwidth between machines in the same rack is greater than network bandwidth between machines in different racks.

The NameNode determines the rack id each DataNode belongs to via the process outlined in Rack Awareness. A simple but non-optimal policy is to place replicas on unique racks. This prevents losing data when an entire rack fails and allows use of bandwidth from multiple racks when reading data. This policy evenly distributes replicas in the cluster which makes it easy to balance load on component failure. However, this policy increases the cost of writes because a write needs to transfer blocks to multiple racks.

For the common case, when the replication factor is three, HDFS’s placement policy is to put one replica on one node in the local rack, another on a different node in the local rack, and the last on a different node in a different rack. This policy cuts the inter-rack write traffic which generally improves write performance. The chance of rack failure is far less than that of node failure; this policy does not impact data reliability and availability guarantees. However, it does reduce the aggregate network bandwidth used when reading data since a block is placed in only two unique racks rather than three. With this policy, the replicas of a file do not evenly distribute across the racks. One third of replicas are on one node, two thirds of replicas are on one rack, and the other third are evenly distributed across the remaining racks.
This policy improves write performance without compromising data reliability or read performance.

The current, default replica placement policy described here is a work in progress.

Replica Selection

To minimize global bandwidth consumption and read latency, HDFS tries to satisfy a read request from a replica that is closest to the reader. If there exists a replica on the same rack as the reader node, then that replica is preferred to satisfy the read request. If an HDFS cluster spans multiple data centers, then a replica that is resident in the local data center is preferred over any remote replica.

Safemode

On startup, the NameNode enters a special state called Safemode. Replication of data blocks does not occur when the NameNode is in the Safemode state. The NameNode receives Heartbeat and Blockreport messages from the DataNodes. A Blockreport contains the list of data blocks that a DataNode is hosting. Each block has a specified minimum number of replicas. A block is considered safely replicated when the minimum number of replicas of that data block has checked in with the NameNode. After a configurable percentage of safely replicated data blocks checks in with the NameNode (plus an additional 30 seconds), the NameNode exits the Safemode state. It then determines the list of data blocks (if any) that still have fewer than the specified number of replicas. The NameNode then replicates these blocks to other DataNodes.

The Persistence of File System Metadata

The HDFS namespace is stored by the NameNode. The NameNode uses a transaction log called the EditLog to persistently record every change that occurs to file system metadata. For example, creating a new file in HDFS causes the NameNode to insert a record into the EditLog indicating this. Similarly, changing the replication factor of a file causes a new record to be inserted into the EditLog. The NameNode uses a file in its local host OS file system to store the EditLog.
The entire file system namespace, including the mapping of blocks to files and file system properties, is stored in a file called the FsImage. The FsImage is stored as a file in the NameNode’s local file system too.

The NameNode keeps an image of the entire file system namespace and file Blockmap in memory. This key metadata item is designed to be compact, such that a NameNode with 4 GB of RAM is plenty to support a huge number of files and directories. When the NameNode starts up, it reads the FsImage and EditLog from disk, applies all the transactions from the EditLog to the in-memory representation of the FsImage, and flushes out this new version into a new FsImage on disk. It can then truncate the old EditLog because its transactions have been applied to the persistent FsImage. This process is called a checkpoint. In the current implementation, a checkpoint only occurs when the NameNode starts up. Work is in progress to support periodic checkpointing in the near future.

The DataNode stores HDFS data in files in its local file system. The DataNode has no knowledge about HDFS files. It stores each block of HDFS data in a separate file in its local file system. The DataNode does not create all files in the same directory. Instead, it uses a heuristic to determine the optimal number of files per directory and creates subdirectories appropriately. It is not optimal to create all local files in the same directory because the local file system might not be able to efficiently support a huge number of files in a single directory. When a DataNode starts up, it scans through its local file system, generates a list of all HDFS data blocks that correspond to each of these local files and sends this report to the NameNode: this is the Blockreport.

The Communication Protocols

All HDFS communication protocols are layered on top of the TCP/IP protocol. A client establishes a connection to a configurable TCP port on the NameNode machine.
It talks the ClientProtocol with the NameNode. The DataNodes talk to the NameNode using the DataNode Protocol. A Remote Procedure Call (RPC) abstraction wraps both the Client Protocol and the DataNode Protocol. By design, the NameNode never initiates any RPCs. Instead, it only responds to RPC requests issued by DataNodes or clients.

Robustness

The primary objective of HDFS is to store data reliably even in the presence of failures. The three common types of failures are NameNode failures, DataNode failures and network partitions.

Data Disk Failure, Heartbeats and Re-Replication

Each DataNode sends a Heartbeat message to the NameNode periodically. A network partition can cause a subset of DataNodes to lose connectivity with the NameNode. The NameNode detects this condition by the absence of a Heartbeat message. The NameNode marks DataNodes without recent Heartbeats as dead and does not forward any new IO requests to them. Any data that was registered to a dead DataNode is not available to HDFS any more. DataNode death may cause the replication factor of some blocks to fall below their specified value. The NameNode constantly tracks which blocks need to be replicated and initiates replication whenever necessary. The necessity for re-replication may arise due to many reasons: a DataNode may become unavailable, a replica may become corrupted, a hard disk on a DataNode may fail, or the replication factor of a file may be increased.

Cluster Rebalancing

The HDFS architecture is compatible with data rebalancing schemes. A scheme might automatically move data from one DataNode to another if the free space on a DataNode falls below a certain threshold. In the event of a sudden high demand for a particular file, a scheme might dynamically create additional replicas and rebalance other data in the cluster. These types of data rebalancing schemes are not yet implemented.

Data Integrity

It is possible that a block of data fetched from a DataNode arrives corrupted.
This corruption can occur because of faults in a storage device, network faults, or buggy software. The HDFS client software implements checksum checking on the contents of HDFS files. When a client creates an HDFS file, it computes a checksum of each block of the file and stores these checksums in a separate hidden file in the same HDFS namespace. When a client retrieves file contents it verifies that the data it received from each DataNode matches the checksum stored in the associated checksum file. If not, then the client can opt to retrieve that block from another DataNode that has a replica of that block.

Metadata Disk Failure

The FsImage and the EditLog are central data structures of HDFS. A corruption of these files can cause the HDFS instance to be non-functional. For this reason, the NameNode can be configured to support maintaining multiple copies of the FsImage and EditLog. Any update to either the FsImage or EditLog causes each of the FsImages and EditLogs to get updated synchronously. This synchronous updating of multiple copies of the FsImage and EditLog may degrade the rate of namespace transactions per second that a NameNode can support. However, this degradation is acceptable because even though HDFS applications are very data intensive in nature, they are not metadata intensive. When a NameNode restarts, it selects the latest consistent FsImage and EditLog to use.

The NameNode machine is a single point of failure for an HDFS cluster. If the NameNode machine fails, manual intervention is necessary. Currently, automatic restart and failover of the NameNode software to another machine is not supported.

Snapshots

Snapshots support storing a copy of data at a particular instant of time. One usage of the snapshot feature may be to roll back a corrupted HDFS instance to a previously known good point in time. HDFS does not currently support snapshots but will in a future release.

Data Organization

Data Blocks

HDFS is designed to support very large files.
Applications that are compatible with HDFS are those that deal with large data sets. These applications write their data only once but they read it one or more times and require these reads to be satisfied at streaming speeds. HDFS supports write-once-read-many semantics on files. A typical block size used by HDFS is 64 MB. Thus, an HDFS file is chopped up into 64 MB chunks, and if possible, each chunk will reside on a different DataNode.

Staging

A client request to create a file does not reach the NameNode immediately. In fact, initially the HDFS client caches the file data into a temporary local file. Application writes are transparently redirected to this temporary local file. When the local file accumulates data worth over one HDFS block size, the client contacts the NameNode. The NameNode inserts the file name into the file system hierarchy and allocates a data block for it. The NameNode responds to the client request with the identity of the DataNode and the destination data block. Then the client flushes the block of data from the local temporary file to the specified DataNode. When a file is closed, the remaining un-flushed data in the temporary local file is transferred to the DataNode. The client then tells the NameNode that the file is closed. At this point, the NameNode commits the file creation operation into a persistent store. If the NameNode dies before the file is closed, the file is lost.

The above approach has been adopted after careful consideration of target applications that run on HDFS. These applications need streaming writes to files. If a client writes to a remote file directly without any client side buffering, the network speed and the congestion in the network impacts throughput considerably. This approach is not without precedent. Earlier distributed file systems, e.g. AFS, have used client side caching to improve performance.
A POSIX requirement has been relaxed to achieve higher performance of data uploads.

Replication Pipelining

When a client is writing data to an HDFS file, its data is first written to a local file as explained in the previous section. Suppose the HDFS file has a replication factor of three. When the local file accumulates a full block of user data, the client retrieves a list of DataNodes from the NameNode. This list contains the DataNodes that will host a replica of that block. The client then flushes the data block to the first DataNode. The first DataNode starts receiving the data in small portions (4 KB), writes each portion to its local repository and transfers that portion to the second DataNode in the list. The second DataNode, in turn starts receiving each portion of the data block, writes that portion to its repository and then flushes that portion to the third DataNode. Finally, the third DataNode writes the data to its local repository. Thus, a DataNode can be receiving data from the previous one in the pipeline and at the same time forwarding data to the next one in the pipeline. Thus, the data is pipelined from one DataNode to the next.

Accessibility

HDFS can be accessed from applications in many different ways. Natively, HDFS provides a Java API for applications to use. A C language wrapper for this Java API is also available. In addition, an HTTP browser can also be used to browse the files of an HDFS instance. Work is in progress to expose HDFS through the WebDAV protocol.

FS Shell

HDFS allows user data to be organized in the form of files and directories. It provides a commandline interface called FS shell that lets a user interact with the data in HDFS. The syntax of this command set is similar to other shells (e.g. bash, csh) that users are already familiar with.
Here are some sample action/command pairs:

FS shell is targeted for applications that need a scripting language to interact with the stored data.

DFSAdmin

The DFSAdmin command set is used for administering an HDFS cluster. These are commands that are used only by an HDFS administrator. Here are some sample action/command pairs:

Browser Interface

A typical HDFS install configures a web server to expose the HDFS namespace through a configurable TCP port. This allows a user to navigate the HDFS namespace and view the contents of its files using a web browser.

Space Reclamation

File Deletes and Undeletes

When a file is deleted by a user or an application, it is not immediately removed from HDFS. Instead, HDFS first renames it to a file in the /trash directory. The file can be restored quickly as long as it remains in /trash. A file remains in /trash for a configurable amount of time. After the expiry of its life in /trash, the NameNode deletes the file from the HDFS namespace. The deletion of a file causes the blocks associated with the file to be freed. Note that there could be an appreciable time delay between the time a file is deleted by a user and the time of the corresponding increase in free space in HDFS.

A user can Undelete a file after deleting it as long as it remains in the /trash directory. If a user wants to undelete a file that he/she has deleted, he/she can navigate the /trash directory and retrieve the file. The /trash directory contains only the latest copy of the file that was deleted. The /trash directory is just like any other directory with one special feature: HDFS applies specified policies to automatically delete files from this directory. The current default policy is to delete files from /trash that are more than 6 hours old. In the future, this policy will be configurable through a well defined interface.

Decrease Replication Factor

When the replication factor of a file is reduced, the NameNode selects excess replicas that can be deleted.
The next Heartbeat transfers this information to the DataNode. The DataNode then removes the corresponding blocks and the corresponding free space appears in the cluster. Once again, there might be a time delay between the completion of the setReplication API call and the appearance of free space in the cluster.

Chinese translation

Original address: /docs/r0.18.3/hdfs_design.html

1. Introduction

The Hadoop Distributed File System (HDFS) is designed as a distributed file system suited to running on commodity hardware.
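Returning to the replica placement policy in the English original above: for a replication factor of three, it places one replica on a node in the local rack, one on a different node in the same rack, and one on a node in a different rack. The sketch below follows that wording. The `place_replicas` function, the `topology` dictionary, and the choice of the writer's own node as the first target are assumptions for illustration, not HDFS code.

```python
import random


def place_replicas(writer, topology, rng=random):
    """Pick three nodes for one block, following the policy quoted above.

    topology maps a rack name to the list of node names in that rack.
    writer is the node the block is written from (assumed to hold replica 1).
    """
    local_rack = next(rack for rack, nodes in topology.items() if writer in nodes)
    same_rack = [node for node in topology[local_rack] if node != writer]
    other_racks = [
        node
        for rack, nodes in topology.items()
        if rack != local_rack
        for node in nodes
    ]
    # Replica 1: local node. Replica 2: another node in the local rack.
    # Replica 3: a node in some other rack.
    return [writer, rng.choice(same_rack), rng.choice(other_racks)]


# Hypothetical two-rack cluster.
topology = {"rack1": ["a", "b"], "rack2": ["c", "d"]}
placement = place_replicas("a", topology, random.Random(0))
```

Only the third replica crosses a rack boundary, which is the reduction in inter-rack write traffic the text describes.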

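Common Technical Architectures

1. Layered Architecture: the system is divided into layers, each with clear responsibilities and functions, and the layers interact through interfaces. Common variants are the three-layer architecture (Presentation Layer, Business Layer, Data Layer) and the four-layer architecture (Presentation Layer, Application Layer, Business Layer, Data Access Layer).

2. Microservices Architecture: a complex monolithic application is split into many small, autonomous services. Each service is deployed and run independently, and services interact through lightweight communication. Microservices favor loose coupling and high cohesion, which improves the flexibility and scalability of the system.

3. Event-Driven Architecture: the components of the system communicate and cooperate through events, and every component can be both a publisher and a subscriber of events. This style suits systems that handle large numbers of asynchronous events and have strong real-time requirements.

4. Service-Oriented Architecture (SOA): the system is split by business domain, and each domain exposes its functionality as services. Services communicate through standardized interfaces, which gives decoupling and reuse.

5. Containerized Architecture: an application and its dependencies are packaged as a container so that it can be deployed and run across platforms. Container orchestration tools can then manage and scale the application, improving development efficiency and maintainability.

6. Event Sourcing Architecture: the state of the system and every state change are stored as events, and the state is restored by replaying the events. Event sourcing gives better data traceability and supports analysis of historical data.

7. Reactive Architecture: based on reactive programming, it uses techniques such as asynchronous message passing and non-blocking I/O to build systems that are highly concurrent, highly scalable, and responsive.

8. Big Data Architecture: a system architecture for processing large-scale data, with components for data collection, storage, processing, and visualization.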
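Item 6 in the list above (event sourcing) is the easiest of these styles to show in a few lines. The sketch below stores only events and rebuilds the state by replaying them; the bank-balance domain, the `Event` class, and the event kinds are made up for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    kind: str      # "deposited" or "withdrawn" (hypothetical event kinds)
    amount: int


def apply(balance, event):
    """Return the new state after applying one event to the old state."""
    if event.kind == "deposited":
        return balance + event.amount
    if event.kind == "withdrawn":
        return balance - event.amount
    raise ValueError(f"unknown event kind: {event.kind}")


def replay(events, initial=0):
    """Rebuild the current state by replaying the event log from the start."""
    balance = initial
    for event in events:
        balance = apply(balance, event)
    return balance


log = [Event("deposited", 100), Event("withdrawn", 30), Event("deposited", 5)]
```

Replaying a prefix of the log, for example `replay(log[:2])`, yields the state as it was at that point, which is where the traceability mentioned above comes from.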

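Hadoop Basic Architecture and How It Works

Hadoop is a distributed open-source framework for processing massive amounts of data.

It can build clusters out of inexpensive hardware while still providing high reliability and fault tolerance.

The basic Hadoop architecture has three parts: Hadoop Common, the Hadoop Distributed File System (HDFS), and Hadoop MapReduce. The sections below describe how each works.

1. Hadoop Common

Hadoop Common is the foundation of the whole Hadoop architecture. It is a shared library that contains a large number of Java classes and application programming interfaces.

Hadoop Common must be installed on every machine in a Hadoop cluster, and all machines must run the same version.

2. HDFS

The Hadoop Distributed File System (HDFS) is Hadoop's distributed file storage.

Its purpose is to split large data sets into blocks and store those blocks across multiple nodes in the cluster.

HDFS achieves high reliability because it replicates every block across storage nodes.

Because the replicas live on different nodes, the data can be recovered easily when a node fails.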

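3. MapReduce

Hadoop MapReduce is a programming model for processing large data sets.

It divides a processing job into two main phases, the Map phase and the Reduce phase.

In the Map phase, MapReduce splits the data set into small pieces and assigns each piece to a different node for processing.

In the Reduce phase, the intermediate results are aggregated to produce the final output.

Overall, MapReduce, as a core component of Hadoop, handles the processing and computation on data sets.

It also acts as a dispatcher: it distributes tasks to different nodes in the cluster and tries to make sure each task gets enough compute resources.

Hadoop uses several mechanisms to provide MapReduce's distributed computing capability, including the TaskTracker, the JobTracker, and heartbeats.

The TaskTracker is a daemon on each cluster node that carries out the MapReduce tasks assigned to that node.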
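The heartbeat mechanism mentioned above can be sketched as a table of last-seen timestamps: a master treats a worker as lost once it has been silent for longer than a timeout. The `HeartbeatMonitor` class, the tracker names, and the 10-second timeout are assumptions for illustration and are not taken from Hadoop's source. Timestamps are passed in explicitly so the example stays deterministic.

```python
class HeartbeatMonitor:
    """Tracks the last heartbeat time of each worker (for example, a TaskTracker)."""

    def __init__(self, timeout_s):
        self.timeout_s = timeout_s
        self.last_seen = {}          # tracker name -> time of last heartbeat

    def heartbeat(self, tracker, now):
        """Record that a heartbeat from this tracker arrived at time `now`."""
        self.last_seen[tracker] = now

    def lost_trackers(self, now):
        """Return trackers whose last heartbeat is older than the timeout."""
        return sorted(
            tracker
            for tracker, seen in self.last_seen.items()
            if now - seen > self.timeout_s
        )


monitor = HeartbeatMonitor(timeout_s=10)
monitor.heartbeat("tt1", now=0)
monitor.heartbeat("tt2", now=0)
monitor.heartbeat("tt1", now=8)      # tt2 stays silent after time 0
```

A master built along these lines would reschedule the tasks of every tracker returned by `lost_trackers` onto other nodes.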

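The Hadoop Ecosystem and What Each Component Is For

Hadoop is an ecosystem made up of many components. Its core components and their uses are:

1. Hadoop Distributed File System (HDFS): Hadoop's distributed file system, used to store large-scale data sets.

It is designed for high reliability and high throughput and runs on low-cost commodity hardware.

Through streaming data access, it gives applications high-throughput access to their data, which suits applications with large data sets.

2. MapReduce: Hadoop's distributed computing framework, used to process and analyze large-scale data sets in parallel.

The MapReduce model breaks a data processing job into a Map phase and a Reduce phase, so that data can be processed efficiently in a distributed, parallel environment made up of many machines.

3. YARN: Hadoop's resource management and job scheduling system.

It manages cluster resources, schedules tasks, and monitors applications.

4. Hive: a data warehouse tool built on Hadoop that provides a SQL-like query language and data warehouse features.

5. Kafka: a high-throughput distributed message queue system for collecting and transporting real-time data streams.

6. Pig: a data analysis platform for large data sets that provides a high-level data-flow language (Pig Latin) and data transformation features.

7. Ambari: a management and monitoring tool for Hadoop clusters that provides a visual interface and cluster configuration management.

In addition, HBase is a distributed column-oriented database that works together with Hadoop.

Data stored in HBase can be processed with MapReduce, which combines data storage with parallel computation.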

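Steps for Setting Up a Hadoop Cluster

Hadoop is an open-source distributed computing platform for storing and processing large-scale data sets.

In the era of big data, Hadoop has become one of the standard tools for handling massive data.

This article describes how to set up a Hadoop cluster.

Step 1: Preparation

Some preparation is needed before setting up the cluster.

First, choose suitable machines to serve as cluster nodes.

A Hadoop cluster usually needs at least three machines.

Second, install a Java environment and the SSH service.

Finally, download the Hadoop binary distribution.

Step 2: Configure the Hadoop Environment

Once preparation is complete, configure the Hadoop environment.

First, edit the Hadoop configuration files: core-site.xml, hdfs-site.xml, mapred-site.xml, and yarn-site.xml.

core-site.xml holds Hadoop's core parameters, hdfs-site.xml holds the parameters of the Hadoop distributed file system, mapred-site.xml holds the MapReduce parameters, and yarn-site.xml holds the parameters of the resource manager.

Second, create a hadoop user on every node and set its password.

Finally, configure passwordless SSH login on every node so that the nodes can communicate with each other.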
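As a rough illustration of what the four configuration files in Step 2 contain, the sketch below generates minimal versions with Python's standard library. The property names (`fs.defaultFS`, `dfs.replication`, `mapreduce.framework.name`, `yarn.resourcemanager.hostname`) are standard Hadoop settings, but the host name `master`, port 9000, and replication factor 3 are assumed values, and a real cluster typically needs more properties than these.

```python
import xml.etree.ElementTree as ET


def site_xml(properties):
    """Render a dict of settings in Hadoop's <configuration>/<property> layout."""
    root = ET.Element("configuration")
    for name, value in properties.items():
        prop = ET.SubElement(root, "property")
        ET.SubElement(prop, "name").text = name
        ET.SubElement(prop, "value").text = str(value)
    return ET.tostring(root, encoding="unicode")


# Assumed example values; "master" is a hypothetical host name.
configs = {
    "core-site.xml": {"fs.defaultFS": "hdfs://master:9000"},
    "hdfs-site.xml": {"dfs.replication": 3},
    "mapred-site.xml": {"mapreduce.framework.name": "yarn"},
    "yarn-site.xml": {"yarn.resourcemanager.hostname": "master"},
}

rendered = {filename: site_xml(props) for filename, props in configs.items()}
```

Each value in `rendered` can be written to the matching file in Hadoop's configuration directory and copied to every node, since all nodes are expected to share the same settings.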

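Step 3: Start the Hadoop Cluster

Once the Hadoop environment is configured, the cluster can be started.

First, start the NameNode and DataNode services.

The NameNode is the management node of the Hadoop distributed file system and manages the file system metadata.

The DataNodes are the storage nodes of the Hadoop distributed file system and hold the actual data.

Second, start the ResourceManager and NodeManager services.

The ResourceManager is Hadoop's resource manager and manages the resources of the whole cluster.

The NodeManager is Hadoop's node manager and manages the resources of an individual node.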
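One common way to check that the four services from Step 3 are running is the JDK's `jps` tool, which prints one line per Java process in the form `<pid> <name>`. The sketch below parses such output and reports which daemons are missing. The sample text and process IDs are made up, and the check assumes all four daemons run on the machine where `jps` was executed (as in a single-machine test setup); on a multi-node cluster each machine would be checked for its own roles.

```python
EXPECTED_DAEMONS = {"NameNode", "DataNode", "ResourceManager", "NodeManager"}


def missing_daemons(jps_output, expected=EXPECTED_DAEMONS):
    """Return the expected daemon names that do not appear in jps-style output."""
    running = set()
    for line in jps_output.splitlines():
        parts = line.split()
        if len(parts) >= 2:          # "<pid> <name>"; lines without a name are skipped
            running.add(parts[1])
    return sorted(set(expected) - running)


# Hypothetical output captured from `jps`; the PIDs are invented.
sample_output = """\
2101 NameNode
2245 DataNode
2630 ResourceManager
2977 Jps
"""
```

For the sample above, `missing_daemons(sample_output)` reports that NodeManager has not started.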

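Introduction to Setting Up the Hadoop Platform

Click Arguments to set the input and output paths, as in the figure above: hdfs://node3/user/fengling/400 is the input path and hdfs://node3/user/fengling/out400 is the output path.

JobTracker

After an application is submitted to the cluster, the JobTracker decides which files are processed and assigns nodes to the different tasks. Each cluster has only one JobTracker, which usually runs on the cluster's Master node.

DataNode

Every server in the cluster runs a DataNode daemon, which reads and writes HDFS data blocks to the local file system.

TaskTracker

Command: sudo vi hdfs-site.xml

Command: sudo vi mapred-site.xml

At this point the configuration of the Master node is essentially complete. Reboot the system and run Hadoop; a successful result looks like the figure below.

Installing the Slave nodes: clone the configured Master (node3) into the virtual machines node4, node5, and node6. Shut down the Master before cloning. The cloning process is as follows:

Enter the saved path after "hadoop installation directory". Once it is set, click OK.

Click on the blank area under Map/Reduce Location, as shown in the figure below, and choose New Hadoop location.

Location name can be anything. The Host on the left is the cluster machine where the JobTracker runs, here node3. The port on the left is node3's port, 8021, and the port on the right is the NameNode's port, 8020. User name is the user name.

Taking the wordcount program as an example, view the result with the hadoop dfs -cat command on out400 (the output directory you chose) to see part of the output file. Program analysis: wordcount is a word-frequency program that counts how many times each word appears in the input files, as follows: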

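Massive Data Processing Technology: An Introduction to Hadoop

In the digital era, data has become one of the most important assets of companies and organizations, and its sheer volume brings new challenges, such as how to store, manage, and analyze it.

As data keeps growing, traditional methods can no longer cope.

This is why Hadoop appeared: Hadoop is an open-source, scalable tool for processing massive data.

This article covers what Hadoop is, its architecture and basic concepts, and the scenarios in which it is used.

1. What Is Hadoop

Hadoop is a Java-based open-source framework that splits large amounts of data, stores the pieces across many servers, and processes the data where it is stored.

Hadoop grew out of the Apache Nutch project and is developed under the Apache Software Foundation to address the problems of storing and processing massive data.

Hadoop uses a distributed model for both storage and processing and can efficiently handle data at petabyte or even exabyte scale, so that companies and organizations can find value in their data faster and put that value to use.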

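2. Hadoop Architecture and Basic Concepts

The Hadoop architecture has two core parts: the distributed file system, Hadoop Distributed File System (HDFS), and the MapReduce execution framework.

1. HDFS

HDFS is built with scalability as a premise. Within the cluster it divides data into blocks, typically 64 MB or 128 MB each, and stores those blocks on data nodes.

The HDFS architecture has two kinds of nodes: the NameNode and the DataNodes.

The NameNode is the management node of the file system. It stores the metadata of all files and blocks, but not the actual data.

The DataNodes are the storage nodes. They store the actual data blocks and report their status to the NameNode.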
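To make the block sizes above concrete, the following sketch works out how many blocks a file occupies and how much raw disk space its replicas take. The helper names and the replication factor of 3 are assumptions for illustration. The storage calculation relies on the fact that the last block of a file only takes as much space as the data it holds.

```python
MB = 1024 * 1024


def block_count(file_size, block_size=128 * MB):
    """Number of HDFS blocks needed for a file of file_size bytes (ceiling division)."""
    return (file_size + block_size - 1) // block_size


def raw_storage(file_size, replication=3):
    """Total bytes stored across the cluster when every block is replicated."""
    return file_size * replication


one_gib = 1024 * MB
blocks_at_128 = block_count(one_gib)             # 1 GiB in 128 MB blocks
blocks_at_64 = block_count(one_gib, 64 * MB)     # 1 GiB in 64 MB blocks
```

Halving the block size doubles the number of blocks the NameNode has to track, which is one reason the larger default is often preferred for big files.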

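2. MapReduce

MapReduce is a programming model for processing data, built on two core operations: map and reduce.

Map works on independent pieces of the input data and turns each piece into key-value tuples as its output.

Reduce merges the tuples emitted by Map that share a key and produces the final output.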

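An Analysis of the Technical Architecture of the Hadoop Ecosystem

Hadoop is an open-source distributed computing framework that processes large-scale data sets reliably and scalably.

The Hadoop ecosystem is a body of many open-source projects built on Hadoop technology.

These projects include the Hadoop components themselves and other Hadoop-related components such as Apache Spark, Apache Storm, and Apache Flink.

The components provide different functions and services, so the ecosystem can meet a wide range of needs.

The technical architecture of the Hadoop ecosystem can be divided into the following layers:

1. Infrastructure Layer

The infrastructure layer is the bottom layer of the Hadoop ecosystem.

It includes the operating system, the cluster manager, the distributed file system, and so on.

Within this layer, Hadoop's core technology, the distributed file system HDFS (Hadoop Distributed File System), plays a central role.

HDFS is a highly reliable, scalable distributed file system. It stores large-scale data sets by splitting the data into blocks and placing them on different machines, which gives distributed storage and processing.

The Hadoop ecosystem also uses other distributed storage systems, such as Apache Cassandra and Apache HBase.

These systems provide data storage and access services with high availability, scalability, and high performance.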

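2. Data Management Layer

The data management layer is the middle layer of the Hadoop ecosystem.

It provides data management and data processing services.

Within this layer, the MapReduce framework is one of the most important components of the ecosystem.

MapReduce is a programming model and software framework for large-scale data processing. It breaks the work into many small tasks and runs them in a distributed environment.

The framework automatically handles task scheduling, input splitting, and fault tolerance, so it can process large-scale data sets.

Besides MapReduce, the Hadoop ecosystem has other data management and processing technologies, such as Apache Pig, Apache Hive, and Apache Sqoop.

These components cover everything from data extraction, cleaning, and transformation to data analysis and reporting.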

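Briefly describe Hadoop's architecture and main components.

Hadoop is a distributed computing framework that helps developers build large-scale data processing systems.

Its architecture and main components include:

1. Hadoop HDFS: Hadoop's core file system, used to store and manage data.

HDFS stores data in blocks of a fixed, configurable size, which allows files to be split up and accessed in a distributed way.

2. Hadoop MapReduce: Hadoop's main computation engine. It breaks a data processing job into small tasks and assigns them to multiple compute nodes for parallel processing.

This lets MapReduce process large-scale data efficiently.

3. Mapper: a core part of MapReduce that maps input records to intermediate key-value pairs.

4. Reducer: the other core part of MapReduce. It receives the Mapper output grouped by key and aggregates the values for each key into the final result.

5. Hive: a data warehouse and query engine that lets users run offline (batch) queries over data in HDFS.

Queries are written in HiveQL, a SQL-like language, and are executed in parallel on the cluster.

6. HBase: a distributed database for storing large-scale data.

HBase keeps rows sorted by row key in a log-structured merge-tree (LSM tree) layout, which supports efficient lookups and ordered scans.

7. Kafka: a distributed streaming platform for handling large-scale data streams.

Kafka supports real-time data processing and is used for data sharing, real-time analytics, monitoring, and similar applications.

8. YARN: the subsystem of Hadoop that handles resource management and supports distributed computation.

YARN works together with HDFS to deploy and manage applications on a Hadoop cluster.

Together, Hadoop's architecture and main components provide an effective way to process large-scale data.

As data volumes and processing demands keep growing, Hadoop will continue to play an important role.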

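Learning Big Data with Hadoop: Setting Up a Hadoop Platform (2.1)

About big data: it seems clear at first glance, and baffling once you think you understand it.

I. Introduction

A Hadoop platform can be set up in three ways. The first is the single-node (standalone) installation. It is the simplest, but it does not show Hadoop's technical strengths; it suits beginners who want a quick setup. The second is the pseudo-distributed installation. It installs Hadoop's core components on one machine, but still does not truly show Hadoop's strengths; it is suitable for learning, not for development. The third is the fully distributed installation, also simply called the distributed installation. It provides all of Hadoop's functionality and is suitable for development.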
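To make the pseudo-distributed mode more concrete, the sketch below generates the two small configuration files that such a setup typically needs. It assumes the property names and values used in the official single-node setup guide (`fs.defaultFS` set to `hdfs://localhost:9000` and `dfs.replication` set to 1). The helper `hadoop_config_xml` is a hypothetical name introduced here, and your own host and port may differ.

```python
import xml.etree.ElementTree as ET


def hadoop_config_xml(properties):
    """Render a dict as a Hadoop-style <configuration> document."""
    root = ET.Element("configuration")
    for name, value in properties.items():
        prop = ET.SubElement(root, "property")
        ET.SubElement(prop, "name").text = name
        ET.SubElement(prop, "value").text = str(value)
    return ET.tostring(root, encoding="unicode")


# core-site.xml: where clients find the file system (assumed host and port).
core_site = hadoop_config_xml({"fs.defaultFS": "hdfs://localhost:9000"})
# hdfs-site.xml: one replica per block, since there is only one DataNode.
hdfs_site = hadoop_config_xml({"dfs.replication": 1})

print(core_site)
print(hdfs_site)
```

In a fully distributed installation the same files would point at the real NameNode host and use a higher replication factor.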

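II. About Apache Hadoop 2.7.3

This series of articles builds its big data platform on Hadoop 2.7.3, so here is a brief introduction to that release.

Version 2.7.3 is a minor release in the 2.x.y release line, a maintenance release that builds on the stable release 2.7.2. The author advises against using it for development and suggests the stable 2.7.2 or 2.7.4 releases instead.

Compared with earlier versions, the main features and improvements in 2.7.3 are:

1. Common:

① Authentication improvements when using an HTTP proxy server.

This is useful when accessing WebHDFS through a proxy server.

② A new Hadoop metrics sink that allows writing directly to Graphite.

③ Specification work related to the Hadoop Compatible File System (HCFS) effort.

2. HDFS:

① Support for POSIX-style file system extended attributes.

② Using the OfflineImageViewer, clients can now browse an fsimage through the WebHDFS API.

③ The NFS gateway received a number of supportability improvements and bug fixes.

The Hadoop portmapper is no longer required to run the gateway, and the gateway can now reject connections from unprivileged ports.

④ The SecondaryNameNode, JournalNode, and DataNode web UIs have been modernized with HTML5 and JavaScript.

3. YARN:

① YARN's REST APIs now support write/modify operations.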

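Hadoop Big Data Development Basics Lesson Plan: Setting Up and Configuring a Hadoop Cluster

Lesson plan chapter 1: Introduction to Hadoop
1.1 Course objectives: understand Hadoop's history and its applications in big data; understand Hadoop's core components and how they work
1.2 Content: Hadoop's history; Hadoop's core components (HDFS, MapReduce, YARN); Hadoop use cases
1.3 Teaching methods: lectures combined with case analysis; interactive questions to reinforce key points

Lesson plan chapter 2: Setting up the Hadoop environment
2.1 Course objectives: learn to build a virtual Hadoop cluster with VMware; master the configuration of each Hadoop node
2.2 Content: installing and using VMware; planning and creating Hadoop nodes; writing and configuring the Hadoop configuration files (hdfs-site.xml, core-site.xml, yarn-site.xml)
2.3 Teaching methods: demonstration combined with practice; hands-on guidance so that students master every step

Lesson plan chapter 3: The HDFS file system
3.1 Course objectives: understand the design philosophy and advantages of HDFS; master HDFS setup and configuration
3.2 Content: the design philosophy and advantages of HDFS; HDFS architecture and how it works; HDFS setup and configuration
3.3 Teaching methods: lectures combined with case analysis; interactive questions to reinforce key points

Lesson plan chapter 4: The MapReduce programming model
4.1 Course objectives: understand the design philosophy and advantages of MapReduce; learn to solve big data problems with MapReduce
4.2 Content: the design philosophy and advantages of MapReduce; the MapReduce programming model (Map, Shuffle, Reduce); worked MapReduce examples
4.3 Teaching methods: interactive questions to reinforce key points

Lesson plan chapter 5: The YARN resource manager
5.1 Course objectives: understand the design philosophy and advantages of YARN; master YARN setup and configuration
5.2 Content: the design philosophy and advantages of YARN; YARN architecture and how it works; YARN setup and configuration
5.3 Teaching methods: lectures combined with case analysis; interactive questions to reinforce key points

Lesson plan chapter 6: Hadoop ecosystem components
6.1 Course objectives: understand the concept and importance of the Hadoop ecosystem; become familiar with its commonly used components
6.2 Content: the concept and importance of the Hadoop ecosystem; commonly used components (such as Hive, HBase, ZooKeeper); the role of each component and how they relate to one another
6.3 Teaching methods: interactive questions to reinforce key points

Lesson plan chapter 7: Tuning and optimizing a Hadoop cluster
7.1 Course objectives: learn to tune and optimize a Hadoop cluster; master methods for monitoring cluster performance
7.2 Content: principles of cluster tuning and optimization; parameter tuning methods (memory, CPU, disk I/O, and so on); performance monitoring tools (such as JMX and Nagios)
7.3 Teaching methods: lectures combined with case analysis; interactive questions to reinforce key points

Lesson plan chapter 8: Hadoop security and permission management
8.1 Course objectives: understand why Hadoop security matters; learn to configure security and manage permissions on a Hadoop cluster
8.2 Content: overview of Hadoop security; Hadoop's authentication and authorization mechanisms; security configuration and permission management methods
8.3 Teaching methods: interactive questions to reinforce key points

Lesson plan chapter 9: Case studies of real Hadoop projects
9.1 Course objectives: learn to apply Hadoop to real problems; master the Hadoop project development process and techniques
9.2 Content: introduction to and analysis of real Hadoop projects; the project development process (requirements analysis, design, development, testing, deployment, and so on); development techniques and best practices
9.3 Teaching methods: case analysis and discussion; teamwork to complete project tasks

Lesson plan chapter 10: The future of Hadoop and development trends
10.1 Course objectives: understand Hadoop's current state and its use in industry; understand Hadoop's future development trends
10.2 Content: Hadoop's current state and its use in industry; future trends (such as the evolution of the big data ecosystem and integration with big data)
10.3 Teaching methods: lectures combined with case analysis; interactive questions to reinforce key points

Key points and difficulties:

I. The concept and importance of the Hadoop ecosystem

Key point: understand the concept of the Hadoop ecosystem, and master its composition and the relationships between its parts.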

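Hadoop System Architecture

1.1 Hadoop concepts: Hadoop is a distributed systems infrastructure developed by the Apache Foundation.

Its design derives from the GFS (Google File System) paper published by Google.

Advantages:

1. It is a software framework for distributed processing of large amounts of data.

It processes data in a reliable, efficient, and scalable way.

2. High reliability: it assumes that compute elements and storage will fail, so it maintains multiple copies of working data and can redistribute processing away from failed nodes.

3. High efficiency: it works in parallel, and parallel processing speeds up the work.

4. Scalability: it can handle petabytes of data.

In addition, Hadoop relies on community support, so its cost is relatively low and anyone can use it.

Hadoop is a distributed computing platform that users can set up and use easily.

Users can readily develop and run applications that process massive amounts of data on Hadoop.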

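Its main advantages are:

1. High reliability.

Hadoop's ability to store and process data bit by bit can be trusted.

2. High scalability.

Hadoop distributes data and computation across clusters of available computers, and these clusters can easily be extended to thousands of nodes.

3. High efficiency.

Hadoop can move data dynamically between nodes and keeps the nodes balanced, so processing is very fast.

4. High fault tolerance.

Hadoop automatically keeps multiple copies of data and automatically reassigns failed tasks.

5. Low cost.

Compared with all-in-one appliances, commercial data warehouses, and data marts such as QlikView and Yonghong Z-Suite, Hadoop is open source, which greatly reduces a project's software cost.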

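Hadoop composition: Hadoop consists mainly of two parts, HDFS and MapReduce.

1) What is HDFS (the distributed file system)?

HDFS stands for Hadoop Distributed File System.

First, it is an open-source system. It is also a scalable file storage and transfer system designed for large-scale data.

It is a file system that allows files to be shared across multiple hosts over a network, so that many users on many machines can share files and storage space.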

Understanding the Hadoop Ecosystem

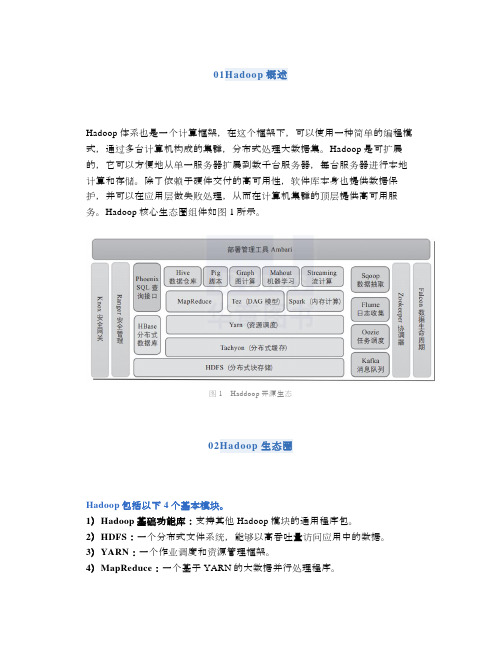

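01 Hadoop overview

Hadoop is also a computing framework. Within it, a simple programming model can be used to process large data sets in a distributed way across a cluster of computers.

Hadoop is scalable: it can easily grow from a single server to thousands of servers, each providing local computation and storage.

Rather than relying on hardware to deliver high availability, the software library itself protects data and handles failures at the application layer, providing a highly available service on top of a cluster of computers.

The core components of the Hadoop ecosystem are shown in Figure 1.

Figure 1: The Hadoop open-source ecosystem

02 The Hadoop ecosystem

Hadoop consists of the following four basic modules.

1) Hadoop Common: the common utilities that support the other Hadoop modules.

2) HDFS: a distributed file system that provides high-throughput access to application data.

3) YARN: a framework for job scheduling and resource management.

4) MapReduce: a YARN-based system for parallel processing of large data sets.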

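Beyond the basic modules, Hadoop-related projects include the following.

1) Ambari: a web-based tool for provisioning, managing, and monitoring Hadoop clusters.

It supports HDFS, MapReduce, Hive, HCatalog, HBase, ZooKeeper, Oozie, Pig, and Sqoop.

Ambari also provides a dashboard that shows cluster health, for example with heat maps.

Ambari lets you view MapReduce, Pig, and Hive applications graphically, so their performance problems can be diagnosed in a user-friendly way.

2) Avro: a data serialization system.

3) Cassandra: a scalable multi-master NoSQL database with no single point of failure.

4) Chukwa: a data collection system for large distributed systems.

5) HBase: a scalable distributed database that supports structured data storage for large tables.

6) Hive: a data warehouse infrastructure that provides data summarization and ad hoc querying.

7) Mahout: a scalable machine learning and data mining library.

8) Pig: a high-level data-flow language and execution framework for parallel computation.

9) Spark: a fast, general-purpose compute engine for Hadoop data.

Spark provides a simple yet expressive programming model that supports a wide range of applications, including ETL, machine learning, stream processing, and graph computation.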

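Technical Architectures of Large Websites

A large-website technical architecture is one that can cope with high concurrency, large-scale data processing, and high availability requirements.

Several common architectures are described below.

1. Layered Architecture

A layered architecture is a common choice for large websites. It divides the system into layers, each with a specific function.

The main layers are the user interface layer, the application layer, the business logic layer, and the data access layer.

Its advantages are clarity and maintainability: modules in different layers can be developed and tested independently, and the system is easy to extend and upgrade.

2. Microservices Architecture

A microservices architecture splits a large system into many small services.

Each service runs in its own process and communicates through APIs.

Its advantages are flexibility and fault tolerance: each service can be developed, deployed, scaled, and replaced independently, so the system can respond quickly to change.

3. Distributed Architecture

A distributed architecture places the components of a system on different servers that communicate over a network.

Its advantage is that it handles large-scale data effectively and improves the scalability and reliability of the system.

Common distributed designs include Master/Slave, Master/Master, distributed caches, and distributed databases.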

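4. High Availability Architecture

A high availability architecture aims to keep the system running normally at all times.

Common patterns for achieving high availability include load balancing, failover, and redundant backup.

Load balancing distributes requests across multiple servers, improving throughput and response time.

Failover redirects requests to healthy servers when some servers fail.

Redundant backup lets the system keep running normally when some components fail.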
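Load balancing and failover can be combined in a very small sketch. The Python example below is an illustration under stated assumptions, not a production design: the class `RoundRobinBalancer` and its methods are hypothetical names, servers are plain strings, and health is set by hand rather than by real health checks.

```python
import itertools


class RoundRobinBalancer:
    """Cycle through servers in order, skipping any marked as down."""

    def __init__(self, servers):
        self.servers = list(servers)
        self.healthy = set(self.servers)
        self._cycle = itertools.cycle(self.servers)

    def mark_down(self, server):
        self.healthy.discard(server)

    def mark_up(self, server):
        if server in self.servers:
            self.healthy.add(server)

    def pick(self):
        # Try each server at most once per call; fail if none is healthy.
        for _ in range(len(self.servers)):
            server = next(self._cycle)
            if server in self.healthy:
                return server
        raise RuntimeError("no healthy servers available")


balancer = RoundRobinBalancer(["web1", "web2", "web3"])
print([balancer.pick() for _ in range(4)])
balancer.mark_down("web2")  # failover: later requests skip web2
print([balancer.pick() for _ in range(3)])
```

Redundancy is what makes the failover step possible: as long as at least one server stays healthy, `pick` keeps returning a target.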

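5. Big Data Architecture

A big data architecture is used to process large-scale data.

Common building blocks include distributed storage systems (such as Hadoop HDFS), distributed computing frameworks (such as MapReduce), and real-time data processing systems (such as Spark and Storm).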


Object Store Architectural shift to the cloud and HPC-style workloads Open source, general purpose datawarehouse Distributed FS

ScaleDB, Big Table, SimpleDB HBase

October, 2009


New Data Management Economics

Compute Trend

New Analytics Emerge

(MapReduce...)

Data Trend

Semi-structured Data

(Mogile, Bigtable, HDFS...)

Master/Slave


Changing Software Economics

1998

2008

2009


What Is Hadoop ?

“Flexible and available architecture for large scale computation and data processing on a network of commodity hardware”


Data-Driven on-Line Websites

• To run the apps: messages, posts, blog entries, video clips, maps, web graph...
• To give the data context: friends networks, social networks, collaborative filtering...
• To keep the applications running: web logs, system logs, system metrics, database query logs...

Hql

• Hibernate Query Language
• Makes mapping objects to be persisted to the underlying database easier
• Fully object-oriented, understanding notions like inheritance, polymorphism and association

ZooKeeper

• High-performance coordination service for distributed applications
• Exposes common services such as naming, configuration management, synchronization, and group services in a simple interface
• Implement consensus, group management, leader election, and presence protocols

• High-level language for expressing data analysis programs, coupled with infrastructure for evaluating these programs.


Hadoop Architecture


Automated Install

Security Containers ZFS DTrace

Predictive Self Healing

Image Packaging System CIFS Virtualization Technology COMSTAR


Solaris

Hive

• Data Warehouse infrastructure
• Tools to enable easy data summarization
• Ad hoc querying
• Analysis of large datasets stored in Hadoop files
• Sophisticated analysis
• No realtime querying

/hadoop/Support


How to Interface your Application ?

• Implement “Save As Cloud...” to write to the cloud
• Implement “Open From Cloud...” to read from the cloud
• Hadoop 0.20.0

Data moves to the infrastructure


Hadoop Architecture

x86 Servers Components

Low Cost Server & Storage : Sun Fire x4xxx

• Develop your application with Hadoop API :


OpenSolaris Live Hadoop

• Available on your laptop
• OpenSolaris
• Hadoop Live CD based on 3 zones
  > Global Zone: NameNode, JobTracker
  > Local Zone 1: DataNode, TaskTracker
  > Local Zone 2: DataNode, TaskTracker
• HBase
• JDK with Java compiler and related tools

Semistructured Database

Master/Master

Proprietary, dedicated datawarehouse

OLTP is the datawarehouse

Federated/ Sharded

Unstructured Data

Structured Data


Who Supports Hadoop?

• 101tec Inc. Integration, customization, consulting. (Hadoop, Pig, Zookeeper, Lucene, Nutch)
• Cloudera, Inc. Get Cloudera's Distribution for Hadoop: it's free, and helps you to optimize your configuration. We also provide commercial support and professional training for Hadoop. Basic training is online for free.
• Cloudify. Assists organizations in integrating Cloud Computing into their IT and Business strategies and in building and managing scalable, next-generation infrastructure environments. (Hadoop, Solr, AWS, distributed architectures)
• Doculibre Inc. Open source and information management consulting. (Lucene, Nutch, Hadoop, Solr, Lius etc.)
• ScaleUnlimited, Inc. Training and mentoring on large architectures. Hadoop Bootcamp now available.
• Tinvention - Ingegneria Informatica. Italian consulting company, offering support on open source architectures based on Java, including architectures based on Hadoop.

Open Source Hadoop Subprojects

Pig

• Platform for analyzing large data sets

Jaql

• Query language for JavaScript Object Notation (JSON)
• Jaql is designed to flexibly read and write data from a variety of data stores and formats
• To model a wide spectrum of data, ranging from homogeneous flat data to heterogeneous nested data

• /os/project/livehadoop/


OpenSolaris for Hadoop

Network AutoMagic D-Light Time Slider Distribution Constructor Open Storage