Puma与数据高速公路——实时数据流与分析

实时计算,流数据处理系统简介与简单分析

实时计算,流数据处理系统简介与简单分析发表于2014-06-12 14:19| 4350次阅读| 来源CSDN博客| 8条评论| 作者va_key大数据实时计算流计算摘要:实时计算一般都是针对海量数据进行的,一般要求为秒级。

实时计算主要分为两块:数据的实时入库、数据的实时计算。

今天这篇文章详细介绍了实时计算,流数据处理系统简介与简单分析。

编者按:互联网领域的实时计算一般都是针对海量数据进行的,除了像非实时计算的需求(如计算结果准确)以外,实时计算最重要的一个需求是能够实时响应计算结果,一般要求为秒级。

实时计算的今天,业界都没有一个准确的定义,什么叫实时计算?什么不是?今天这篇文章详细介绍了实时计算,流数据处理系统简介与简单分析。

以下为作者原文:一.实时计算的概念实时计算一般都是针对海量数据进行的,一般要求为秒级。

实时计算主要分为两块:数据的实时入库、数据的实时计算。

主要应用的场景:1) 数据源是实时的不间断的,要求用户的响应时间也是实时的(比如对于大型网站的流式数据:网站的访问PV/UV、用户访问了什么内容、搜索了什么内容等,实时的数据计算和分析可以动态实时地刷新用户访问数据,展示网站实时流量的变化情况,分析每天各小时的流量和用户分布情况)2) 数据量大且无法或没必要预算,但要求对用户的响应时间是实时的。

比如说:昨天来自每个省份不同性别的访问量分布,昨天来自每个省份不同性别不同年龄不同职业不同名族的访问量分布。

二.实时计算的相关技术主要分为三个阶段(大多是日志流):数据的产生与收集阶段、传输与分析处理阶段、存储对对外提供服务阶段下面具体针对上面三个阶段详细介绍下1)数据实时采集:需求:功能上保证可以完整的收集到所有日志数据,为实时应用提供实时数据;响应时间上要保证实时性、低延迟在1秒左右;配置简单,部署容易;系统稳定可靠等。

目前的产品:Facebook的Scribe、LinkedIn的Kafka、Cloudera的Flume,淘宝开源的TimeTunnel、Hadoop的Chukwa等,均可以满足每秒数百MB的日志数据采集和传输需求。

体育行业中的运动数据分析技术的使用方法

体育行业中的运动数据分析技术的使用方法随着科技的快速发展,运动数据分析技术在体育行业中扮演着越来越重要的角色。

运动数据分析技术可以帮助体育界的教练、运动员和球队做出更加明智的决策,优化训练计划,提高竞技表现。

本文将介绍体育行业中常用的运动数据分析技术及其使用方法。

一、运动数据采集与存储技术在体育行业中,运动数据的采集和存储是运动数据分析的基础。

常见的运动数据采集设备包括传感器、摄像机、智能手表等。

这些设备可以记录运动员的运动轨迹、速度、心率、加速度等关键指标。

在获取运动数据之后,需要将数据进行存储和整理。

云计算技术可以帮助体育机构建立大规模的数据库,方便数据的存储和管理。

此外,数据采集和存储技术还可以与物联网技术相结合,实现设备之间的无线互联,提高数据的实时性和准确性。

二、运动数据分析方法1. 数据可视化分析运动数据可视化分析方法可以将复杂的数据转化为直观、易懂的图表和图像,方便教练员和运动员理解和分析。

常见的数据可视化工具包括数据可视化软件、统计图表、动态平台等。

例如,通过绘制运动员的移动热图和速度分布图,教练员可以了解比赛或训练中运动员的运动路径和速度变化,从而改进训练计划和战术安排。

2. 数据挖掘与模式识别数据挖掘和模式识别技术可以帮助体育机构从庞大的运动数据中发现隐藏的规律和模式,提取有价值的信息。

例如,可以通过分析大量比赛数据,挖掘出不同球队或运动员的比赛策略、弱点和优势,为教练制定战术和训练计划提供参考。

此外,数据挖掘和模式识别技术还可用于身体素质评估和运动员选拔。

通过对大量运动员的身体数据和成绩进行分析,可以建立起科学合理的身体素质评估模型,辅助教练员进行选拔和训练。

3. 数据驱动的预测模型数据驱动的预测模型是根据历史数据和统计分析建立起来的模型。

该模型可以预测未来比赛或训练中的运动员表现和比赛结果。

例如,基于运动员过去的比赛数据和赛前训练数据,可以通过模型预测出运动员在某个特定环境下的表现和适应能力。

大数据平台的软件有哪些

大数据平台的软件有哪些查询引擎一、Phoenix简介:这是一个Java中间层,可以让开发者在Apache HBase上执行SQL查询。

Phoenix完全使用Java编写,代码位于GitHub上,并且提供了一个客户端可嵌入的JDBC驱动。

Phoenix查询引擎会将SQL查询转换为一个或多个HBase scan,并编排执行以生成标准的JDBC 结果集。

直接使用HBase API、协同处理器与自定义过滤器,对于简单查询来说,其性能量级是毫秒,对于百万级别的行数来说,其性能量级是秒。

Phoenix最值得关注的一些特性有:嵌入式的JDBC驱动,实现了大部分的接口,包括元数据API可以通过多部行键或是键/值单元对列进行建模完善的查询支持,可以使用多个谓词以及优化的扫描键DDL支持:通过CREATE TABLE、DROP TABLE及ALTER TABLE来添加/删除列版本化的模式仓库:当写入数据时,快照查询会使用恰当的模式DML支持:用于逐行插入的UPSERT VALUES、用于相同或不同表之间大量数据传输的UPSERT SELECT、用于删除行的DELETE通过客户端的批处理实现的有限的事务支持单表——还没有连接,同时二级索引也在开发当中紧跟ANSI SQL标准二、Stinger简介:原叫Tez,下一代Hive,Hortonworks主导开发,运行在YARN上的DAG计算框架。

某些测试下,Stinger能提升10倍左右的性能,同时会让Hive支持更多的SQL,其主要优点包括:让用户在Hadoop 获得更多的查询匹配。

其中包括类似OVER的字句分析功能,支持WHERE查询,让Hive的样式系统更符合SQL模型。

优化了Hive请求执行计划,优化后请求时间减少90%。

改动了Hive执行引擎,增加单Hive任务的被秒处理记录数。

在Hive 社区中引入了新的列式文件格式(如ORC文件),提供一种更现代、高效和高性能的方式来储存Hive数据。

AVL台架使用 介绍PO CHN

Puma o pen

2

–系统体系结构

–PUMA 软件应用程序

–PUMA 操作用户界面(POI)–PUMA 资源管理器

–试验程序编辑器(BSQ -SSQ)–参数管理器(PAM)–标准名编辑器(NED)

–Puma Concerto 数据处理软件(PUC)

重要信息

5

6

–数据采集–监视功能

–手动或自动输出设定值–子系统、子设备的控制–公式计算–数据后处理

PUMA 功能简介

8

测量部分

发动机&测功机控制部分

9

-图形显示-字母数字显示

–在同一时间可以激活5个记录仪

–针对通道的采样频率设置

–在线分析

–手动/ 自动操作

–在线更改记录仪设置

–自动(试验程序)–POI 手动

–操作面板

–控制窗口

PUMA Explorer:–易于使用

–易于学习

–用户向导

15

BSQ:

–拖放式编程

–参数库/ 工具条

–流程图式试验程序

16

SSQ:

–以图形方式编辑试验程序步骤

–试验步骤序列可视化

18

19

哪些通道被定义?哪些参数要被保存?

–系统参数

(SYS)–Test Field Param.

(TFP)哪一发动机在何种条件下被测试?

–被测试单元参数(UUT)–试验参数

(TST)

参数从何处装载?

–主机数据库或本地数据库

参数综述

–INIT 公式–记录仪触发–内含的–实时公式解释程序

–参数库功能

–根据需要

23

24。

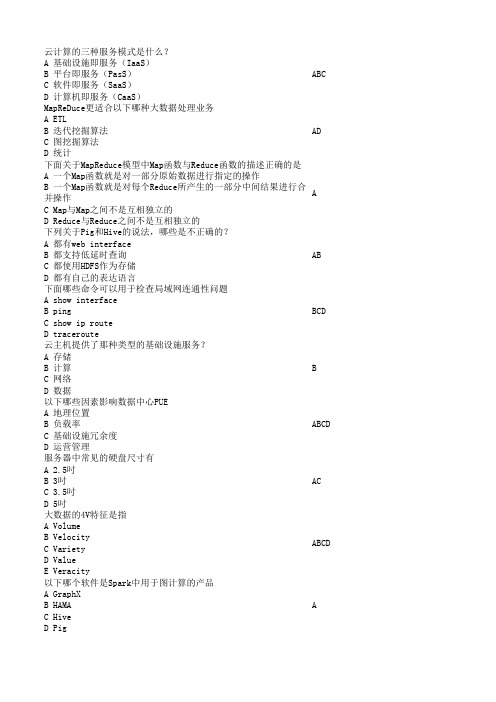

互联网新技术知识竞赛复习题-云

BCD ABCD ABC D ABC ABC ABCD D ABC

此题为单 选题,但 是却给了 两个答 案,我个 人认为应 该选C

大数据量的单位PB和ZP分布表示多少字节数 A 2的50次方和2的70次方 B 2的50次方和2的70次方 C 2的50次方和2的60次方 D 2的50次方和2的100次方 在数据仓库领域中,元数据按用途一般分成哪几类 A 技术元数据 B 应用元数据 C 程序元数据 D 业务元数据 桌面虚拟化系统一般应支持以下哪些功能 A 端口重定向 B 打印机重定向 C 语音设备重定向 D 文件重定向 虚拟机创建时vCPU核数量的上限是多少 A 32个 B 64个 C 物理主机CPU核总数 D 可以超过物理主机CPU核数 某个传统二层域可以用的VLAN数是 A 4093 B 4094 C 4095 D 4096 数据分成很多小部分并把它们分别存储到不同磁盘上的不同文件 中去。这句话描述的技术是 A RAID技术 B 负载均衡技术 C 多副本技术 D 条带化技术 NoSQL数据库的共同特征是什么 A 不需要预定义模式 B 无共享构架 C 弹性可扩展 D 分区 云计算平台需要达到的安全能力有哪些 A 抵御针对租户所部属应用共计的能力 B 抵御来至外部攻击的能力 C 证明自身无法破坏数据和应用安全的能力 D 提供差异化服务的能力 微软的Office365属于哪种服务模式的服务 A 基础设施即服务(IaaS) B 平台即服务(PasS) C 软件即服务(SaaS) D 计算机即服务(CaaS) 非结构化数据经过哪些处理才能加载到传统数据库中 A Extract B Compress C Transform D Load

B 元数据最为重要的特征和功能是为数字化信息资源建立一种机

器可理解框架 C 元数据也是数据,可以用类似数据的方法在数据库中进行存储

数据交换方案

数据交换方案R E S U M E REPORT CATALOG DATE ANALYSIS SUMMARY目录CONTENTS •数据交换概述•数据交换技术•数据交换安全•数据交换流程•数据交换案例分析•数据交换的未来发展REPORT CATALOG DATE ANALYSIS SUMMAR Y R E S U M E 01数据交换概述数据交换的定义数据交换是指不同系统、应用程序或组织之间传输和共享数据的过程。

数据交换的必要性随着企业规模的扩大和业务范围的拓展,不同部门、业务系统之间需要进行数据共享和交互,以支持决策分析、业务流程优化等需求。

数据交换能够消除信息孤岛,提高数据一致性和准确性,提升企业运营效率和决策水平。

数据交换的标准和协议常见的标准有XML、JSON、CSV等,协议包括HTTP、FTP、SFTP等。

数据交换的标准和协议是实现不同系统间数据交换的基础,它们规定了数据格式、传输协议、安全控制等方面的规范。

企业可以根据自身需求选择合适的标准和协议,以实现高效、安全的数据交换。

REPORT CATALOG DATE ANALYSIS SUMMAR Y R E S U M E 02数据交换技术简单易用,适用于不同系统之间的数据传输。

文件交换优点数据库交换优点数据结构化,易于管理和查询。

缺点需要数据库连接和访问权限,可能存在安全风险。

API交换优点高效、灵活、可扩展性强。

缺点需要开发和维护API接口,技术门槛较高。

消息队列交换优点异步、解耦、高可用性、高扩展性。

缺点需要消息队列中间件的支持,技术门槛较高。

REPORTCATALOG DATE ANALYSISSUMMAR YR E S U M E03数据交换安全数据加密对称加密使用相同的密钥进行加密和解密,常见的算法有AES、DES等。

非对称加密使用不同的密钥进行加密和解密,常见的算法有RSA、ECC等。

混合加密结合对称加密和非对称加密,以提高数据传输安全性。

AVL台架使用 介绍PO CHN

Puma o pen

2

–系统体系结构

–PUMA 软件应用程序

–PUMA 操作用户界面(POI)–PUMA 资源管理器

–试验程序编辑器(BSQ -SSQ)–参数管理器(PAM)–标准名编辑器(NED)

–Puma Concerto 数据处理软件(PUC)

重要信息

5

6

–数据采集–监视功能

–手动或自动输出设定值–子系统、子设备的控制–公式计算–数据后处理

PUMA 功能简介

8

测量部分

发动机&测功机控制部分

9

-图形显示-字母数字显示

–在同一时间可以激活5个记录仪

–针对通道的采样频率设置

–在线分析

–手动/ 自动操作

–在线更改记录仪设置

–自动(试验程序)–POI 手动

–操作面板

–控制窗口

PUMA Explorer:–易于使用

–易于学习

–用户向导

15

BSQ:

–拖放式编程

–参数库/ 工具条

–流程图式试验程序

16

SSQ:

–以图形方式编辑试验程序步骤

–试验步骤序列可视化

18

19

哪些通道被定义?哪些参数要被保存?

–系统参数

(SYS)–Test Field Param.

(TFP)哪一发动机在何种条件下被测试?

–被测试单元参数(UUT)–试验参数

(TST)

参数从何处装载?

–主机数据库或本地数据库

参数综述

–INIT 公式–记录仪触发–内含的–实时公式解释程序

–参数库功能

–根据需要

23

24。

prom 指标 -回复

prom 指标-回复标题:深入理解Prometheus指标:一种系统监控的强大工具Prometheus,作为一个开源的系统监控和报警工具,已经在众多企业和组织中得到了广泛应用。

其核心特性之一就是其强大的指标系统,使得用户能够对系统的各种状态和行为进行精细的监控和分析。

本文将详细解析Prometheus的指标系统,帮助读者更好地理解和使用这一工具。

一、Prometheus指标的基本概念在Prometheus中,指标(Metric)是系统状态的基本单位。

每个指标都由一个名字和一系列的标签(Label)组成,用于描述特定的测量值。

这些测量值可以是任何可以被量化的事物,如CPU利用率、网络带宽、数据库查询次数等。

二、Prometheus指标的类型Prometheus支持四种主要的指标类型:1. Counter:计数器,表示只增不减的数值,通常用于记录事件的数量,如请求总数、错误总数等。

2. Gauge:仪表盘,表示任意的数值,可以增加也可以减少,通常用于表示瞬时状态,如CPU利用率、内存使用量等。

3. Histogram:直方图,用于记录数据分布的情况,如请求响应时间的分布。

4. Summary:摘要,类似于直方图,但提供更灵活的计算方式,如百分位数。

三、Prometheus指标的命名和标签在Prometheus中,每个指标都有一个唯一的名称,并且可以附带一组标签。

这些标签用于区分同一类型的指标在不同环境或条件下的表现。

例如,一个名为"http_requests_total"的Counter指标,可以带有以下标签:"method"(HTTP方法)、"handler"(处理程序)、"status_code"(HTTP状态码)。

这样,我们就可以分别统计GET、POST等不同HTTP 方法的请求总数,或者200、404等不同状态码的请求总数。

大数据平台技术框架选型分析

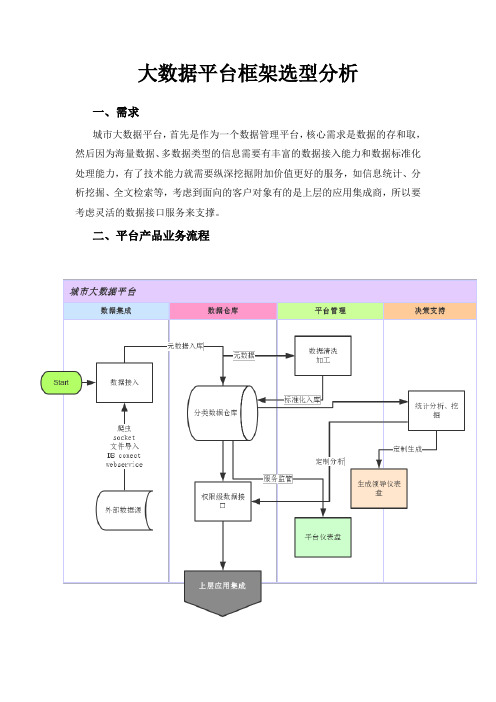

大数据平台框架选型分析一、需求城市大数据平台,首先是作为一个数据管理平台,核心需求是数据的存和取,然后因为海量数据、多数据类型的信息需要有丰富的数据接入能力和数据标准化处理能力,有了技术能力就需要纵深挖掘附加价值更好的服务,如信息统计、分析挖掘、全文检索等,考虑到面向的客户对象有的是上层的应用集成商,所以要考虑灵活的数据接口服务来支撑。

二、平台产品业务流程三、选型思路必要技术组件服务:ETL >非/关系数据仓储>大数据处理引擎>服务协调>分析BI >平台监管四、选型要求1.需要满足我们平台的几大核心功能需求,子功能不设局限性。

如不满足全部,需要对未满足的其它核心功能的开放使用服务支持2.国内外资料及社区尽量丰富,包括组件服务的成熟度流行度较高3.需要对选型平台自身所包含的核心功能有较为深入的理解,易用其API或基于源码开发4.商业服务性价比高,并有空间脱离第三方商业技术服务5.一些非功能性需求的条件标准清晰,如承载的集群节点、处理数据量及安全机制等五、选型需要考虑简单性:亲自试用大数据套件。

这也就意味着:安装它,将它连接到你的Hadoop安装,集成你的不同接口(文件、数据库、B2B等等),并最终建模、部署、执行一些大数据作业。

自己来了解使用大数据套件的容易程度——仅让某个提供商的顾问来为你展示它是如何工作是远远不够的。

亲自做一个概念验证。

广泛性:是否该大数据套件支持广泛使用的开源标准——不只是Hadoop和它的生态系统,还有通过SOAP和REST web服务的数据集成等等。

它是否开源,并能根据你的特定问题易于改变或扩展?是否存在一个含有文档、论坛、博客和交流会的大社区?特性:是否支持所有需要的特性?Hadoop的发行版本(如果你已经使用了某一个)?你想要使用的Hadoop生态系统的所有部分?你想要集成的所有接口、技术、产品?请注意过多的特性可能会大大增加复杂性和费用。

大数据如何在物联网高速公路上驱动分析

大数据如何在物联网高速公路上驱动分析请选中您要保存的内容,粘贴到此文本框大数据时代,快数据(fast data)有望给企业带来新的机遇。

智能手机、传感器和社交媒体产生了上百亿个数据节点,如果你没有能力对这些数据节点以及物联网作出响应,那快数据带来的商机将与你擦肩而过。

对于很多商业分析应用程序,快数据的分析和处理是大数据项目中不可避免的难题。

每当数据科学家从他们的大数据集(静态的)挖掘出新内容时,业务人员立刻就会去想从中赚钱的方法,同样,动态数据中巨大的经济利益也会促使快数据在商业中受到更多的重视,相信未来快数据会在商业中发挥更大的作用。

TIBCO这个公司从字面上可以理解为“有信息总线的IT企业”,它旨在为各种企业系统(如股票市场和交易应用程序)之间提供高速、低延迟的连接。

现在该公司致力于发展物联网(IoT)和快数据相关的技术,并将其作为自己的“两个第二优势”。

TIBCO市场部门高级总监告诉我们:“快数据首先要解决的是数据访问问题,即首先得访问到数据,现在我们正努力捕获所有不在防火墙保护范围内的数据,不管来自社交网络还是其他有API的来源。

例如,零售商使用BusinessWorks(该公司近期公布的旗舰版数据集成平台)可以通过客户的智能手机捕获客户地理位置数据,并且可以基于客户地理数据使用实时商品推荐系统。

“通过了解潜在客户的信息,从他们的大数据中发现用户爱好、特征,然后向客户推荐他们有可能喜欢的牛仔裤品牌以及类似商品,将客户介绍到商店,基于对客户信息的掌握,交易成功率被大大提高了。

当挖掘社交媒体数据以获得分析见解时,速度是至关重要的。

有一篇报道谈到过一个名字叫Blab的公司,该公司从社交媒体数据中提取信息,用以帮助广告商或公关公司作主题预测,判断哪些主题会有较好的传播效果(像病毒一样被传播和扩散)、哪些会石沉大海。

Ugam是另一家物联网公司,准确的说是一家分析应用开发商,这家总部在T exas的公司从物联网和快数据中发现了商机,它通过分析来源于社交网络的免费消费者数据,帮助零售商决定卖什么商品,以及将商品放在货架的什么位置。

体育行业实时数据分析技术手册

体育行业实时数据分析技术手册一、引言在现代社会中,体育行业的发展日益迅猛。

为了更好地了解和分析体育产业,实时数据分析技术已成为了必备的工具。

本文将介绍体育行业实时数据分析技术的基本概念、工具和方法,帮助读者更好地理解和应用这一技术。

二、实时数据分析概述实时数据分析是指通过实时采集、处理和展示数据,以了解当前情况并进行决策。

在体育行业中,实时数据分析可以帮助俱乐部、教练员和运动员更好地管理和训练,提高竞技水平和比赛成绩。

三、实时数据分析技术工具1. 数据采集工具:体育比赛中会产生大量的数据,包括比赛结果、比分、得分、进球等。

为了实现实时数据分析,需要使用专门的数据采集工具,如传感器、摄像头和计分板等。

2. 数据处理工具:采集到的数据需要进行处理和分析,以得出有意义的结论。

常用的数据处理工具包括数据挖掘软件、统计分析软件和机器学习算法等。

3. 数据可视化工具:将处理后的数据以直观的方式展示出来,可以帮助用户更好地理解和分析数据。

常见的数据可视化工具包括表格、图形和地图等。

四、实时数据分析方法1. 目标设定:在进行实时数据分析前,需要明确分析的目标和需求。

例如,俱乐部可能需要分析球队在比赛中的表现,教练员可能需要分析运动员的训练成绩。

2. 数据收集:采集相关数据,包括比赛结果、球员数据和场地数据等。

数据收集可以通过传感器、手动输入或者从数据库中提取等方式进行。

3. 数据处理:对采集到的数据进行清洗、整理和分析。

可以使用统计方法、机器学习算法和模型建立等技术进行数据处理。

4. 数据可视化:将处理好的数据以图表等形式进行可视化展示。

通过直观的数据可视化,用户可以更直观地理解和分析数据,发现规律和问题。

5. 结果分析:根据可视化结果进行数据分析和解读。

通过对结果的分析,可以得出有关比赛成绩、球员表现和训练效果等方面的结论。

六、实时数据分析的应用案例1. 比赛表现分析:通过采集和分析比赛时的实时数据,可以评估球队的整体表现、球员的个人表现和场地的特点。

体育行业中的运动数据分析实践

体育行业中的运动数据分析实践随着科技的发展和数据的广泛应用,运动数据分析在体育行业中的作用日益凸显。

通过对运动员的技术、训练和身体状态等方面的数据进行分析,可以提供有效的指导和决策支持,从而帮助运动员、教练员和管理者等在体育竞技中取得更好的成绩。

本文将介绍体育行业中运动数据分析的基本原理和实践方法,并探讨其在提高运动员竞技水平、优化训练计划和改进比赛策略方面的应用。

一、运动数据的采集与存储在运动数据分析中,首先要解决的问题是如何采集和存储运动数据。

目前,常见的运动数据采集方式包括传感器技术、摄像机技术和智能穿戴设备等。

传感器可以通过测量运动员的运动轨迹、力度、速度和加速度等参数来获取运动数据;摄像机可以通过拍摄运动员的表现来记录运动数据;智能穿戴设备可以通过感知运动员的生理指标如心率、血压和体温等来获取运动数据。

这些获取到的数据需要经过处理和转换后存储在数据库中,以备后续的分析和应用。

二、运动数据的分析与应用1. 运动员状态的评估与监控通过对运动员的运动数据进行分析,可以对运动员的技术、身体状况和心理状态等方面进行评估和监控。

例如,通过分析运动员的运动轨迹和速度等数据,可以评估运动员的技术水平和身体素质;通过分析运动员的心率和运动时间等数据,可以评估运动员的耐力和恢复能力;通过分析运动员的表现和动作等数据,可以评估运动员的专注力和心理状态。

这些评估和监控结果可以为教练员制定训练计划和调整比赛策略提供科学依据。

2. 训练计划的优化与改进通过对运动数据的分析,可以为运动员制定个性化的训练计划,以提高他们的竞技水平。

例如,通过分析运动员的技术数据,可以找出运动员在某个动作或技巧上存在的问题,并针对性地进行训练和改进;通过分析运动员的身体数据,可以判断运动员的力量、速度和柔韧性等方面的短板,并针对性地制定力量训练和柔韧性训练计划;通过分析运动员的心理数据,可以了解运动员在训练和比赛中可能遇到的心理问题,并提供相应的心理辅导和训练。

体育行业运动数据分析技术

体育行业运动数据分析技术运动数据分析技术在体育行业中扮演着重要的角色,通过对运动员、比赛和训练数据的分析,可以帮助教练和运动员优化训练计划、改进战术和提高表现。

本文将介绍体育行业中常用的运动数据分析技术及其应用。

一、运动数据采集与处理技术1. 传感器技术:运动传感器的使用可以实时采集运动员的身体姿势、加速度、速度、角速度等数据。

这些数据可以通过无线传输或存储在传感器中,方便后续的分析和处理。

2. 计算机视觉技术:通过摄像机、图像处理算法和人工智能技术,可以实时跟踪运动员的动作、位置和姿势。

这种技术可以在比赛中提供即时的数据,帮助教练进行实时的决策分析。

3. 生物医学传感器技术:运动员的生理数据如心率、血氧饱和度、体温等可以通过生物医学传感器进行采集。

这些数据的分析可以帮助教练了解运动员的身体状况和疲劳程度,以便制定相应的训练计划。

二、运动数据分析方法1. 统计分析:通过对大量的历史数据进行统计分析,可以揭示出运动员在比赛中的优势和劣势,帮助教练制定针对性的训练计划和战术。

2. 机器学习:借助机器学习算法,可以从海量的运动数据中挖掘出隐藏的规律和模式。

通过分析运动员的训练数据和比赛数据,可以预测他们的表现和潜力。

3. 数据可视化:将运动数据转化为图表、图像、动画等可视化形式,可以更直观地展示数据之间的关系和变化趋势。

这有助于教练和运动员更好地理解和利用数据,做出更明智的决策。

三、运动数据分析的应用领域1. 训练优化:通过对运动员训练数据的分析,可以确定训练目标、制定训练计划和调整训练强度。

同时,还可以监控运动员的训练进度和疲劳状态,及时做出相应的调整。

2. 伤病预防与康复:通过对运动员运动数据的分析,可以发现运动中的异常和风险,提前采取措施进行干预和预防。

此外,运动数据还可以用于康复监测和评估,帮助运动员更快地恢复到正常状态。

3. 战术改进:通过对比赛数据的分析,可以了解对手的表现和战术,找到对方的弱点和漏洞。

intel puma 方案

Intel Puma方案1. 简介Intel Puma方案是Intel开发的一种高性能网络处理器方案,适用于高速宽带家庭网关、有线和无线路由器、高级电缆电视频道调制解调器等应用场景。

本文将详细介绍Intel Puma方案的特点、优势和应用。

2. 特点2.1 高性能处理器Intel Puma方案采用了先进的多核处理器架构,具备强大的计算能力和高效的数据处理能力。

其支持高速数据包的传输和处理,能够满足大规模数据流的需求。

2.2 网络加速技术Intel Puma方案内置多种网络加速技术,包括流量控制、包过滤和协议加速等功能。

这些特色技术能够提升网络处理的效率和速度,减少数据的延迟和丢包,并提供更快的网络响应时间。

Intel Puma方案在网络安全方面进行了深度优化。

通过采用硬件加速技术和高级加密算法,能够保护用户的隐私数据和网络安全。

同时,Puma方案还具备故障自动恢复功能,确保网络的可靠性和稳定性。

2.4 低功耗设计Intel Puma方案以低功耗设计为目标,采用了先进的电源管理技术,能够有效降低功耗并延长设备的续航时间。

这一特点使得Puma方案在节能环保方面具备显著的优势。

3. 优势3.1 高性能与可扩展性Intel Puma方案具备出色的性能和可扩展性。

其多核处理器架构和高速数据传输技术使其能够处理大规模的网络流量,并支持更多的用户和设备同时连接。

因此,Puma方案非常适用于高带宽、高密度的网络环境。

3.2 稳定性与可靠性Puma方案通过高级的网络管理和故障恢复机制,保障网络的稳定性和可靠性。

即使在复杂的网络环境中,Puma方案也能够保持网络连接的稳定,并且能够快速恢复故障。

Puma方案具备高级的网络安全功能,能够有效保护用户的隐私数据和网络安全。

采用硬件加速技术的加密算法,可以对数据进行加密传输,增强网络安全性。

同时,Puma方案还支持各种防火墙和安全协议,增强网络的防护能力。

3.4 低功耗与节能环保Intel Puma方案以低功耗设计为目标,采用了先进的电源管理技术,能够降低设备的能耗和热量产生,确保设备的高效运行。

大数据平台的软件有哪些

大数据平台的软件有哪些?查询引擎一、Phoenix简介:这是一个Java中间层,可以让开发者在Apache HBase上执行SQL查询。

Phoenix完全使用Java编写,代码位于GitHub上,并且提供了一个客户端可嵌入的JDBC驱动。

Phoenix查询引擎会将SQL查询转换为一个或多个HBase scan,并编排执行以生成标准的JDBC 结果集。

直接使用HBase API、协同处理器与自定义过滤器,对于简单查询来说,其性能量级是毫秒,对于百万级别的行数来说,其性能量级是秒。

Phoenix最值得关注的一些特性有:?嵌入式的JDBC驱动,实现了大部分的接口,包括元数据API?可以通过多部行键或是键/值单元对列进行建模?完善的查询支持,可以使用多个谓词以及优化的扫描键?DDL支持:通过CREATE TABLE、DROP TABLE及ALTER TABLE 来添加/删除列?版本化的模式仓库:当写入数据时,快照查询会使用恰当的模式?DML支持:用于逐行插入的UPSERT V ALUES、用于相同或不同表之间大量数据传输的UPSERT ?SELECT、用于删除行的DELETE?通过客户端的批处理实现的有限的事务支持?单表——还没有连接,同时二级索引也在开发当中?紧跟ANSI SQL标准二、Stinger简介:原叫Tez,下一代Hive,Hortonworks主导开发,运行在YARN 上的DAG计算框架。

某些测试下,Stinger能提升10倍左右的性能,同时会让Hive支持更多的SQL,其主要优点包括:?让用户在Hadoop获得更多的查询匹配。

其中包括类似OVER 的字句分析功能,支持WHERE查询,让Hive的样式系统更符合SQL模型。

?优化了Hive请求执行计划,优化后请求时间减少90%。

改动了Hive执行引擎,增加单Hive任务的被秒处理记录数。

?在Hive社区中引入了新的列式文件格式(如ORC文件),提供一种更现代、高效和高性能的方式来储存Hive数据。

大数据分析与高速数据更新

大数据分析与高速数据更新

陈世敏

【期刊名称】《计算机研究与发展》

【年(卷),期】2015(052)002

【摘要】大数据对于数据管理系统平台的主要挑战可以归纳为volume(数据量大)、velocity(数据的产生、获取和更新速度快)和variety(数据种类繁多)3个方面.针对大数据分析系统,尝试解读velocity的重要性和探讨如何应对velocity的挑战.首先比较事物处理、数据流、与数据分析系统对velocity的不同要求.然后从数据更新

与大数据分析系统相互关系的角度出发,讨论两项近期的研究工作:1)MaSM,在数据仓库系统中支持在线数据更新;2)LogKV,在日志处理系统中支持高速流入的日志数

据和高效的基于时间窗口的连接操作.通过分析比较发现,存储数据更新只是最基本

的要求,更重要的是应该把大数据的从更新到分析作为数据的整个生命周期,进行综

合考虑和优化,根据大数据分析的特点,优化高速数据更新的数据组织和数据分布方式,从而保证甚至提高数据分析运算的效率.

【总页数】10页(P333-342)

【作者】陈世敏

【作者单位】计算机体系结构国家重点实验室(中国科学院计算技术研究所) 北京100190

【正文语种】中文

【中图分类】TP311.13

【相关文献】

1.大数据分析与高速数据更新 [J], 李卓然;

2.微机高速缓存系统组织与数据更新探讨 [J], 徐景村;何培斌

3.大数据分析在高速公路收费管理中应用的重要性 [J], 冯明艳

4.简析高速公路收费管理中大数据分析的运用 [J], 梁剑娴;唐玉斌

5.重庆高速公路服务区视频大数据分析应用探析 [J], 陈力云;王卫平;沈翔;王世森因版权原因,仅展示原文概要,查看原文内容请购买。

第七讲网络模型ppt课件

扩展运用

下面网络图中的5个节点表示一个4年期的时间段 内各年的时间点。每个节点表示的是做出坚持或 替代公司电脑决议的时间。假设断定交换电脑设 备,那么同时也要决议新设备要用多久,从节点 0到节点1的弧代表持有现有设备1年并且到年底 交换,从节点0到节点2的弧表示坚持现有设备2 年并且到第2年末交换。弧上的数字表示与交换 设备有关的总本钱。这些本钱包括打折后的购买 价、旧设备换新的折价、运营本钱和维修本钱。 请确定4年内的最小设备交换本钱。

[18,5] 4

3 [10,1]

5 [14,3]

[22,6] [30,2]

7

6 [16,5]

图9-9 戈曼网络——节点6的永久标识以及节点 7的新暂时标识

[0,S] 1

[13,3] 2

[18,5] 4

3 [10,1]

5 [14,3]

[22,6] 7

6 [16,5]

图9-10 戈曼网络——节点4的永久标识

x57<=8 x67<=7

x14<=5 x35<=3

x36<=7

Management Scientist求解结果

2

3

5

16

35

6

最大流 量1小时 714000辆

4

5

5

1 进入辛辛 那提(北)

2

33

5

7

22 2

3

3

1 11

66

3

75

67

5

3

3 5

3

4

7 分开辛辛 那提(南)

练习

高价油公司拥有一个从采集地到几个储存点之间传送石 油的管道网络系统。其部分网络系统如以下图所示:不 同的管道型号,其流量也不同。经过有选择地开关部分 的管道网络,公司可以提供任何贮藏地。

- 1、下载文档前请自行甄别文档内容的完整性,平台不提供额外的编辑、内容补充、找答案等附加服务。

- 2、"仅部分预览"的文档,不可在线预览部分如存在完整性等问题,可反馈申请退款(可完整预览的文档不适用该条件!)。

- 3、如文档侵犯您的权益,请联系客服反馈,我们会尽快为您处理(人工客服工作时间:9:00-18:30)。

Puma2: Pros and Cons

• Pros

▪ ▪

Puma2 code is very simple. Puma2 service is very easy to maintain.

• Cons

▪ ▪ ▪ ▪

“Increment” operation is expensive. Do not support complex aggregations. Hacky implementation of “most frequent elements”. Can cause small data duplicates.

Improvements in Puma2

• Puma2

▪

Batching of requests. Didn‟t work well because of long-tail distribution.

• HBase

▪ ▪ ▪

“Increment” operation optimized by reducing locks. HBase region/HDFS file locality; short-circuited read. Reliability improvements under high load.

• Puma3 needs a lot of memory

▪ ▪

Use 60GB of memory per box for the hashmap SSD can scale to 10x per box.

PTail

Puma3

HBase

• Join

▪ ▪ ▪

Static join table in HBase. Distributed hash lookup in user-defined function (udf). Local cache improves the throughput of the udf a lot. Serving

• HBase as persistent key-value storage.

Serving

Puma3 Architecture

PTail

Puma3

HBase

• Write workflow

▪ ▪

Serving

For each log line, extract the columns for key and value.

▪ ▪ ▪

Checkpoints inserted into the data stream Can roll back to tail from any data checkpoints No data loss/duplicates

Channel Comparison

Push / RPC Latency 1-2 sec Loss/Dups Few Robustness Low Complexity Low Pull / FS 10 sec None High High

Look up in the hashmap and call user-defined aggregation

Puma3 Architecture

PTail

Puma3

HBase

• Checkpoint workflow

▪

Every 5 min, save modified hashmap entries, PTail checkpoint to HBase

▪

Serving Read uncommitted: directly serve from the in-memory hashmap; load from Hbase on miss. Read committed: read from HBase and serve.

▪

Puma3 Architecture

• ~ 1M log lines per second, but light read • Multiple Group-By operations per log line

• The first key in Group By is always time/date-related

• Complex aggregations: Unique user count, most frequent elements

Serving

▪

▪

On startup (after node failure), load from HBase

Get rid of items in memory once the time window has passed

Puma3 ArchitectureFra bibliotekPTail

Puma3

HBase

• Read workflow

▪

How do we reduce per-batch overhead?

Decisions

• Stream Processing wins!

• Data Freeway

▪

Scalable Data Stream Framework

• Puma

▪

Reliable Stream Aggregation Engine

Puma2 / Puma3 comparison

• Puma3 is much better in write throughput

▪ ▪

Use 25% of the boxes to handle the same load. HBase is really good at write throughput.

▪ ▪

Application buckets are application-defined shards. Infrastructure buckets allows log streams from x B/s to x GB/s

• Performance

▪ ▪

Latency: Call sync every 7 seconds Throughput: Easily saturate 1Gbit NIC

MySQL and HBase: one page

Parallel Fail-over Read efficiency Write efficiency Columnar support MySQL Manual sharding Manual master/slave switch High Medium No HBase Automatic load balancing Automatic Low High Yes

Puma2 Architecture

PTail

Puma2

HBase

Serving

• PTail provide parallel data streams • For each log line, Puma2 issue “increment” operations to HBase. Puma2 is symmetric (no sharding). • HBase: single increment on multiple columns

Scribe

Push / RPC Calligraphus

PT ail + ScribeSend

Pull / FS Continuous Copier

Puma real-time aggregation/storage

Overview

Log Stream

Aggregations

Storage

Serving

C1

C2

DataNode

Scribe Clients

Calligraphus Mid-tier

Calligraphus Writers

PTail

HDFS

HDFS PTail (in the plan) Log Consumer

Zookeeper

• 9GB/sec at peak, 10 sec latency, 2500 log categories

Real-time Analytics at Facebook: Data Freeway and Puma

Agenda

1 2 3 4

Analytics and Real-time Data Freeway Puma Future Works

Analytics and Real-time what and why

• Still not good enough!

Puma3 Architecture

PTail

Puma3

HBase

• Puma3 is sharded by aggregation key. • Each shard is a hashmap in memory.

• Each entry in hashmap is a pair of an aggregation key and a user-defined aggregation.

Analytics based on Hadoop/Hive

seconds seconds

Hourly Copier/Loader

Daily Pipeline Jobs

HTTP

Scribe

NFS

Hive Hadoop

MySQL

• 3000-node Hadoop cluster

• Copier/Loader: Map-Reduce hides machine failures • Pipeline Jobs: Hive allows SQL-like syntax • Good scalability, but poor latency! 24 – 48 hours.